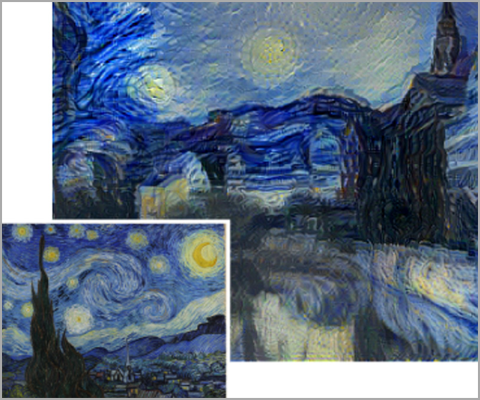

MIT researchers just released a new GPU-accelerated algorithm that can predict how memorable or forgettable an image is almost as accurately as humans — and they plan to turn it into an app that subtly tweaks photos to make them more memorable.

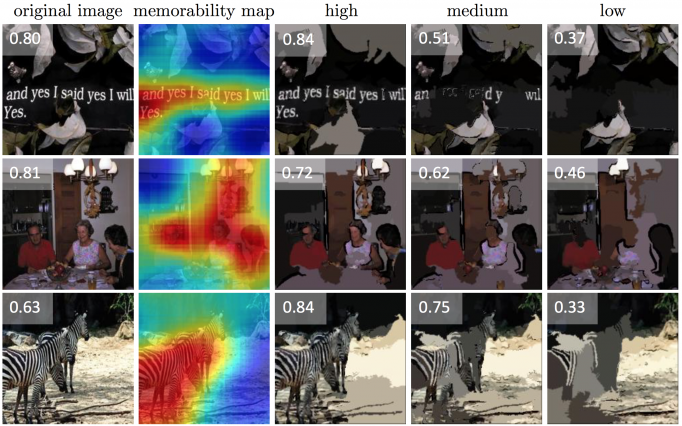

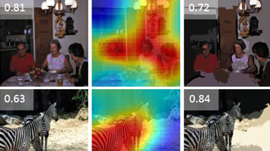

Using a TITAN X GPU and deep learning, the “MemNet” algorithm creates a heat map that highlights which parts of an image are most memorable. The team’s fine-tuned convolutional neural network reached a rank correlation of 0.64 with human memorability scores, approaching the human consistency of 0.68.
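The 0.64 and 0.68 figures are rank correlations, assuming the standard Spearman's ρ. This metric checks whether the predicted *ordering* of images by memorability matches the ordering measured in human experiments. It does not check whether the raw scores are close. In the memorability literature, human consistency is usually estimated the same way: participants are split into two random halves and the two halves' scores are correlated. The pure-Python sketch below shows the metric, with tied values given averaged ranks. The function names and sample scores are hypothetical illustrations and are not the MIT team's evaluation code.

```python
from typing import Sequence


def average_ranks(values: Sequence[float]) -> list[float]:
    """Assign 1-based ranks; tied values share the mean of the ranks they span."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j across the run of values tied with the one at position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg_rank = (i + j) / 2 + 1  # mean of 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        i = j + 1
    return ranks


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Plain Pearson correlation between two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    if vx == 0 or vy == 0:
        raise ValueError("correlation is undefined when one input is constant")
    return cov / (vx * vy) ** 0.5


def spearman_rho(predicted: Sequence[float], observed: Sequence[float]) -> float:
    """Spearman's rho = Pearson correlation of the two rank vectors."""
    if len(predicted) != len(observed) or len(predicted) < 2:
        raise ValueError("need two equal-length sequences of at least 2 items")
    return pearson(average_ranks(predicted), average_ranks(observed))


if __name__ == "__main__":
    # Hypothetical model predictions vs. human-measured memorability scores.
    predicted = [0.91, 0.55, 0.73, 0.40, 0.62]
    observed = [0.88, 0.60, 0.70, 0.45, 0.50]
    print(spearman_rho(predicted, observed))  # 0.9
```

The model swaps the order of only one pair of images, so ρ stays high at 0.9 even though the raw scores differ. A score of 0.64 against a human ceiling of 0.68 therefore means that the network ranks images almost as consistently as one group of people agrees with another.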

The team members envision a variety of potential applications, from improving the marketing value of ads and social media posts, to developing more effective teaching resources, to creating your own personal “health-assistant” device to help you remember things.

As part of the project, the team has also published the world’s largest image-memorability dataset, LaMem. LaMem contains 60,000 images, each annotated with detailed metadata about qualities such as popularity and emotional impact. The team hopes it will spur further research on what they say has often been an under-studied topic in computer vision.

“This sort of research gives us a better understanding of the visual information that people pay attention to,” says Alexei Efros, an associate professor of computer science at the University of California at Berkeley. “For marketers, movie-makers and other content creators, being able to model your mental state as you look at something is an exciting new direction to explore.”


Predicting Photos’ Memorability at “Near-Human” Levels with Deep Learning

Jan 08, 2016
