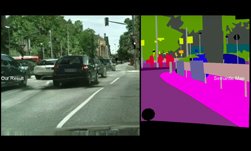

Pix2PixHD is a PyTorch implementation of a deep learning method for high-resolution (e.g., 2048×1024) photorealistic image-to-image translation. Today, NVIDIA is releasing the code on NGC for commercial use under the Berkeley Software Distribution (BSD) license. The BSD license allows developers to use Pix2PixHD in closed-source commercial projects, as long as they accept and comply with the license agreement.

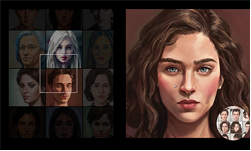

First released at CVPR in 2018, Pix2PixHD can be used to turn semantic label maps into photorealistic images or to synthesize portraits from face label maps.

“Conditional GANs have enabled a variety of applications, but the results are often limited to low-resolution and still far from realistic. In this work, we generate 2048×1024 visually appealing results with a novel adversarial loss, as well as new multi-scale generator and discriminator architectures,” the researchers stated in their paper.

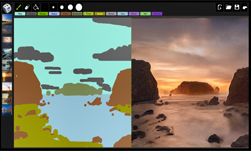

The two main contributions of the Pix2PixHD work are the use of instance-level semantic label maps and the ability to generate diverse results given the same input label. For example, instance-level semantic label maps contain a unique ID for each individual object in an image. “This enables flexible object manipulations, such as adding/removing objects and changing object types,” the researchers said. “With the instance map, separating these objects becomes an easier task.”
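To see why a per-object ID makes separation easier, consider two cars parked side by side. The following is a minimal sketch, assuming NumPy and a toy 5×6 scene; the function name `label_boundaries`, the class ID `1` for "car", and the arrays are hypothetical illustrations, not code from the Pix2PixHD repository. In the semantic map the two cars merge into one region, while in the instance map the border between them can be recovered by comparing neighboring pixels.

```python
import numpy as np

def label_boundaries(label_map: np.ndarray) -> np.ndarray:
    """Mark pixels whose label differs from any 4-connected neighbor."""
    edges = np.zeros(label_map.shape, dtype=bool)
    # Compare each pixel with its left/right neighbor.
    diff_h = label_map[:, 1:] != label_map[:, :-1]
    edges[:, 1:] |= diff_h
    edges[:, :-1] |= diff_h
    # Compare each pixel with its top/bottom neighbor.
    diff_v = label_map[1:, :] != label_map[:-1, :]
    edges[1:, :] |= diff_v
    edges[:-1, :] |= diff_v
    return edges

# Toy scene (hypothetical): 0 = background, 1 = "car" class.
semantic_map = np.array([
    [0, 0, 0, 0, 0, 0],
    [0, 1, 1, 1, 1, 0],
    [0, 1, 1, 1, 1, 0],
    [0, 1, 1, 1, 1, 0],
    [0, 0, 0, 0, 0, 0],
])

# Same scene, but each car gets its own instance ID (1 and 2).
instance_map = np.array([
    [0, 0, 0, 0, 0, 0],
    [0, 1, 1, 2, 2, 0],
    [0, 1, 1, 2, 2, 0],
    [0, 1, 1, 2, 2, 0],
    [0, 0, 0, 0, 0, 0],
])

semantic_edges = label_boundaries(semantic_map)
instance_edges = label_boundaries(instance_map)

# Instance IDs found inside the "car" region: one per individual object.
car_ids = np.unique(instance_map[semantic_map == 1])
```

Here the pixels where the two cars touch are flagged only in `instance_edges`, and `car_ids` holds one entry per car, so a downstream tool could select, remove, or relabel a single object.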

The neural network can also generate diverse results given the same input label map. “This allows the user to edit the objects interactively. The image-to-image synthesis pipeline can be extended to produce diverse outputs, and enable interactive image manipulation given appropriate training input-output pairs. Without ever [being] told what a ‘texture’ is, our model learns to stylize different objects, which may be generalized to other datasets as well,” the researchers said.