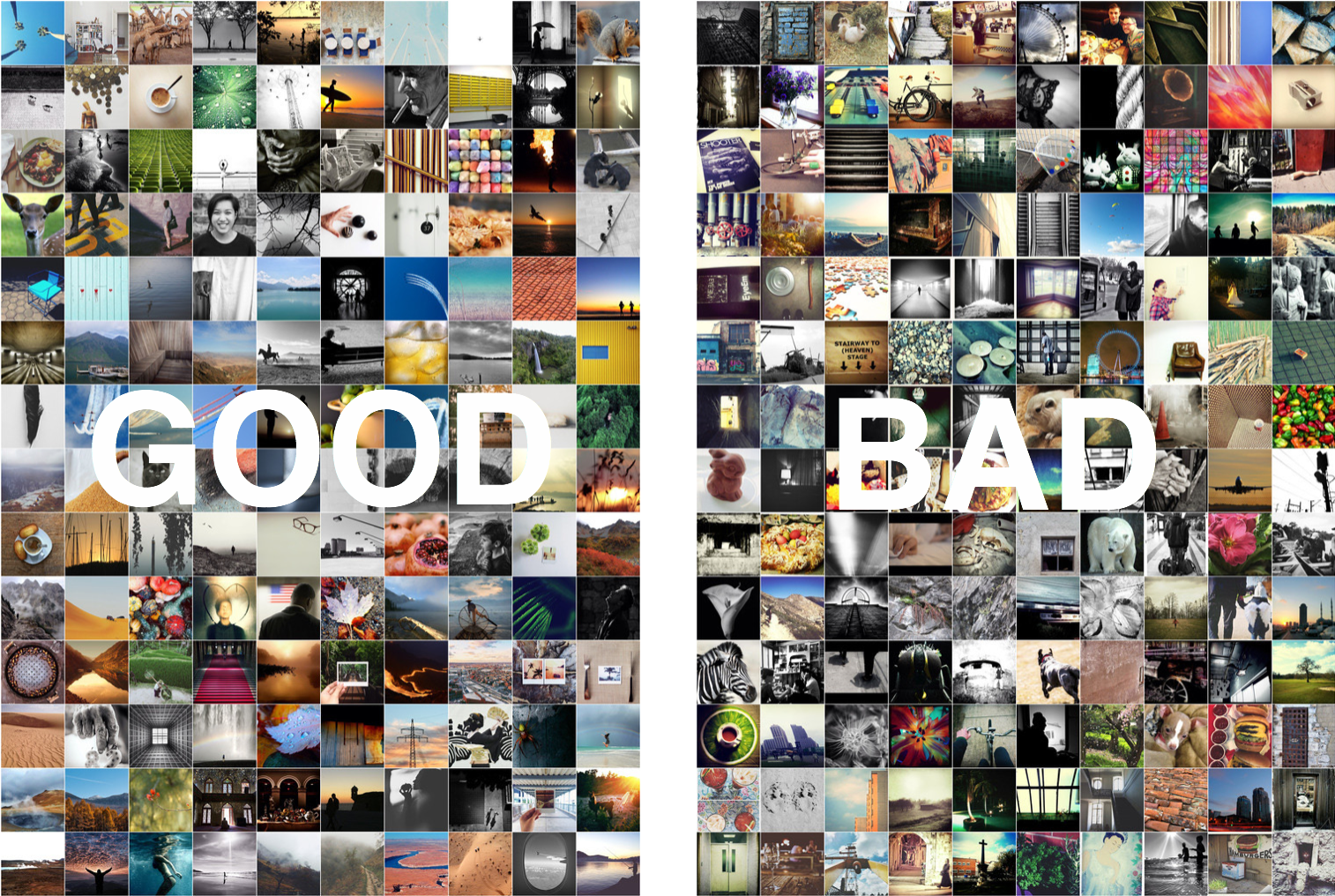

Most people in the world own a camera and take photographs—trillions of photos every year. An imminent problem is how to organize, search and re-use our photographs, and deep learning methods have already been successful in such tasks. But visual aesthetics are very personal, often subconscious, and hard to express. In a world with an overload of photographic content, a lot of time and effort is spent manually curating photographs, and it’s often hard to separate the good images from the visual noise.

The question put forward by EyeEm, an internet photography company with a global online community of photographers is: can a machine learn personalized aesthetics embodied in a set of chosen photos, and recreate them in a different set? Can deep learning be applied to aid photography curators?

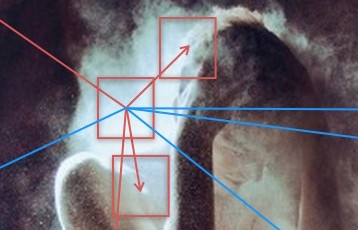

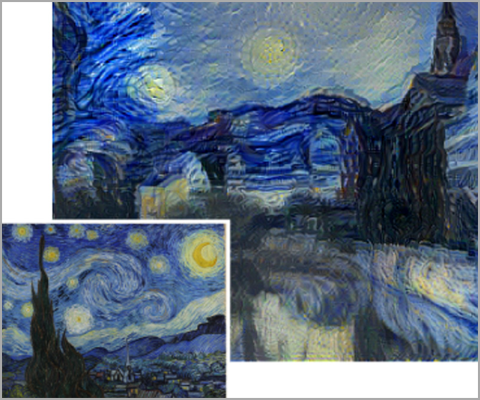

A new Parallel Forall blog post by Appu Shaji and Gökhan Yildirim from EyeEm explores that question and shows how EyeEm applies GPU-accelerated deep learning to translate the visual preferences of a curator into a machine learning model and a Personalized Aesthetics interactive tool—despite curators often not being able to translate their thoughts about photographs and aesthetics into language. (“I know it when I see it” is a classical sentiment among photographers and photo editors.)

The ability to rapidly train models is crucial to providing an interactive interface. It allows the curator to augment the model with additional images if he or she finds the model deficient in a particular facet, and correct any unwanted biases the model might have learned. EyeEm trains models using the Theano framework, leveraging CUDA 8.0 and the cuDNN 5.0 library. They can train new models in three to four seconds on average, and their Multi-Layer Perceptron network converges in about 100 iterations.

Read more >

Personalized Aesthetics: Recording the Visual Mind

Mar 29, 2017

Discuss (0)

Related resources

- DLI course: Building a Brain in 10 Minutes

- GTC session: Artists Exploring Creativity and Language With Generative AI

- GTC session: AI and the Data Universe: Refik Anadol’s Artwork for Global Impact

- GTC session: Generative AI Theater: Using Generative AI in Concept Development

- Webinar: Building Generative AI Applications for Enterprise Demands

- Webinar: Isaac Developer Meetup #2 - Build AI-Powered Robots with NVIDIA Isaac Replicator and NVIDIA TAO