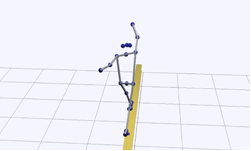

To improve how sporting events are covered in the news, researchers from The New York Times R&D group and wrnch, an AI computer vision company and member of the NVIDIA Inception program, recently developed a new AI 3D pose estimation model.

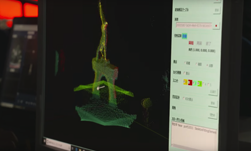

The 3D pose estimation model can help extract data the human eye can easily miss and help journalists tell a story with more concrete data.

“Traditional motion capture techniques require an athlete to wear physical markers. But this isn’t possible during live sporting events. Instead, we built a solution that uses our photographers’ cameras, machine learning and computer vision to capture this data as an event unfolds,” the researchers stated in their article, Estimating 3D Poses of Athletes at Live Sporting Events.

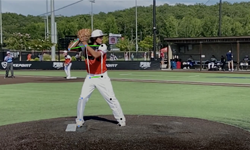

The researchers have thus far focused on gymnastics, a sport that is unique in how it’s scored and is one of the most popular Olympic sports.

To develop the model, the researchers conducted field tests at practice sessions of the Rutgers University women’s gymnastics team. There they fine-tuned their models with multiple videos and photos of the athletes at work. With fine-tuning and real-time data, the researchers were able to extract 3D poses and live metrics using just three cameras.
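The article does not spell out how the three camera views are combined into a 3D pose. One standard way to lift a joint detected in several calibrated views into 3D is linear (DLT) triangulation, sketched below with NumPy. This is an illustration under assumptions, not the researchers' pipeline: the camera matrices, focal length, positions, and the `make_camera`, `project`, and `triangulate_point` helpers are all hypothetical.

```python
import numpy as np


def make_camera(focal, center, rotation, translation):
    """Build a 3x4 projection matrix P = K [R | t] (hypothetical calibration)."""
    K = np.array([[focal, 0.0, center[0]],
                  [0.0, focal, center[1]],
                  [0.0, 0.0, 1.0]])
    Rt = np.hstack([rotation, np.asarray(translation, dtype=float).reshape(3, 1)])
    return K @ Rt


def project(P, point_3d):
    """Project a 3D point to pixel coordinates with projection matrix P."""
    x = P @ np.append(point_3d, 1.0)
    return x[:2] / x[2]


def triangulate_point(projections, pixels):
    """Linear (DLT) triangulation of one 3D point from two or more views."""
    rows = []
    for P, (u, v) in zip(projections, pixels):
        # Each view contributes two linear constraints on the homogeneous point.
        rows.append(u * P[2] - P[0])
        rows.append(v * P[2] - P[1])
    A = np.stack(rows)
    # The solution is the right singular vector with the smallest singular value.
    _, _, vt = np.linalg.svd(A)
    X = vt[-1]
    return X[:3] / X[3]


# Three made-up cameras, offset sideways and all facing along +z.
cameras = [
    make_camera(1000.0, (640.0, 360.0), np.eye(3), [0.0, 0.0, 0.0]),
    make_camera(1000.0, (640.0, 360.0), np.eye(3), [-1.0, 0.0, 0.0]),
    make_camera(1000.0, (640.0, 360.0), np.eye(3), [1.0, 0.0, 0.0]),
]

# A made-up joint position (e.g. a wrist) about 5 m in front of the cameras.
joint = np.array([0.2, -0.1, 5.0])

# Simulate the 2D detections each camera would see, then recover the 3D point.
detections = [project(P, joint) for P in cameras]
estimate = triangulate_point(cameras, detections)
print(estimate)
```

With real footage, the 2D detections would come from a pose estimation model rather than from `project`, and they would be noisy, so the recovered point would only approximate the true joint position.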

For training and inference, the team used multiple NVIDIA GPUs in the cloud and TensorRT, NVIDIA’s inference accelerator. TensorRT allowed them to run the pose estimation models with speed and accuracy.

The researchers also found that their models could easily be extended to other sports beyond gymnastics, such as track and field and tennis.

“We’re exploring how this capability might be useful in future coverage, including at next summer’s Tokyo Olympics,” the researchers stated in their article. “Beyond sports, we’re excited about the potential of computer vision to help journalists responsibly gather new types of data to inform their reporting.”

The work builds on previous GPU-accelerated 3D pose estimation models developed by wrnch. The Canada-based startup was founded by Dr. Paul Kruszewski in 2015 and has received funding from Mark Cuban, serial entrepreneur and owner of the Dallas Mavericks.

Read the full article on The New York Times R&D website.