Wired discusses Google’s announcement that it is open sourcing its TensorFlow machine learning system – noting the system uses GPUs to both train and run artificial intelligence services at the company.

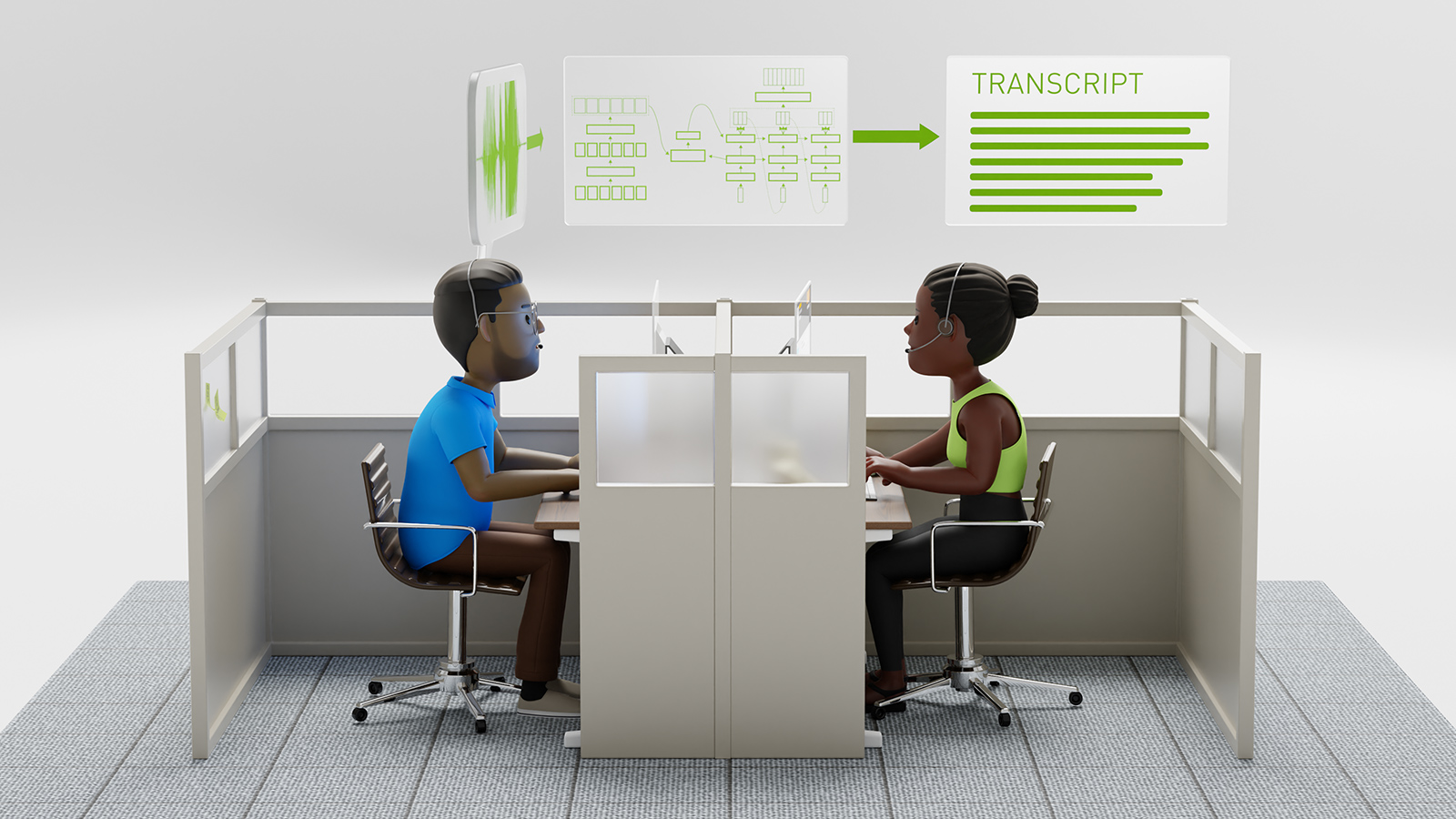

Inside Google, when tackling tasks like image recognition and speech recognition and language translation, TensorFlow depends on machines equipped with GPUs that were originally designed to render graphics for games and the like, but have also proven adept at other tasks. And it depends on these chips more than the larger tech universe realizes. See the whitepaper for details of TensorFlow’s programming model and implementation.

According to Jeff Dean, who helps oversee the company’s AI work, Google uses GPUs not only in training its artificial intelligence services, but also in running these services, that is, in delivering them to the smartphones held in the hands of consumers.

The article goes on to mention that companies like Facebook, Microsoft, and Baidu are taking advantage of NVIDIA GPUs for deep learning because they can process lots of little bits of data in parallel.

Google uses deep learning not only to identify photos, recognize spoken words, and translate from one language to another, but also to boost search results. Other companies are pushing the same technology into ad targeting, computer security, and even applications that understand natural language. Doing so will take a large number of GPUs.

Read the entire article on Wired >>

NVIDIA to Benefit from Shift to GPU-powered Deep Learning

Nov 10, 2015
