After watching a person make a mixed drink, a robot trained on NVIDIA GPUs at the University of Maryland can copy those actions to pour the right quantities into your drink. The robot, a two-armed industrial machine, watched a person mix a drink by pouring liquid from several bottles into a jug, then copied those actions, grasping the bottles in the correct order before pouring the right quantities into the jug.

“We call it a ‘robot training academy,’” says Yezhou Yang, a graduate student in the Autonomy, Robotics and Cognition Lab at the University of Maryland. “We ask an expert to show the robot a task, and let the robot figure out most parts of sequences of things it needs to do, and then fine-tune things to make it work.”

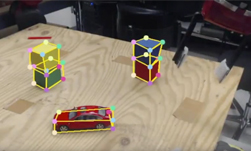

The approach involves training a computer system to associate specific robot actions with video footage of people performing various tasks. A recent paper from the group, for example, shows that a robot can learn how to pick up different objects by watching thousands of instructional YouTube videos. It relies on two systems: one learns to recognize different objects; the other identifies different types of grasp.
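As a rough illustration of how the outputs of an object recognizer and a grasp classifier might be combined into a sequence of robot steps, here is a minimal Python sketch. It is not the group's implementation: the `FramePrediction` type, the `to_action_sequence` function, and the labels are all hypothetical, and the per-frame predictions are hard-coded rather than produced by trained networks.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FramePrediction:
    """Per-frame labels; both fields stand in for real model outputs."""
    obj: str    # label from a hypothetical object recognizer
    grasp: str  # label from a hypothetical grasp-type classifier


def to_action_sequence(frames):
    """Collapse runs of identical per-frame predictions into ordered steps.

    Each step is a (grasp, object) pair. Consecutive frames that agree are
    treated as one step, so a long shot of the same grasp yields one entry.
    """
    steps = []
    for frame in frames:
        step = (frame.grasp, frame.obj)
        if not steps or steps[-1] != step:
            steps.append(step)
    return steps


# Hard-coded predictions standing in for what the two systems would emit.
frames = [
    FramePrediction(obj="bottle", grasp="power"),
    FramePrediction(obj="bottle", grasp="power"),
    FramePrediction(obj="spoon", grasp="precision"),
]
plan = to_action_sequence(frames)
print(plan)
```

Keeping the two predictions separate until this final step mirrors the split described above: each system can be trained and evaluated on its own, and only the merged sequence is handed to the robot.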

The cocktail-making application used two Tesla K40 GPUs and two TITAN GPUs, with two additional TITAN GPUs used for neural network training and scene reconstruction.

The researchers are talking to several manufacturing companies, including an electronics business and a carmaker, about adapting the technology for use in factories.

Robots Learning New Tasks from YouTube Videos

Oct 02, 2015
