Recently, during his GTC keynote, NVIDIA CEO Jensen Huang announced that NVIDIA Merlin, an end-to-end deep learning recommender framework, has entered open beta.

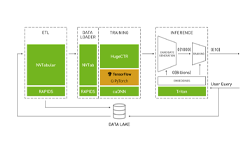

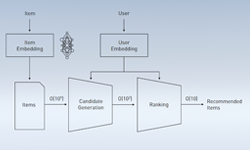

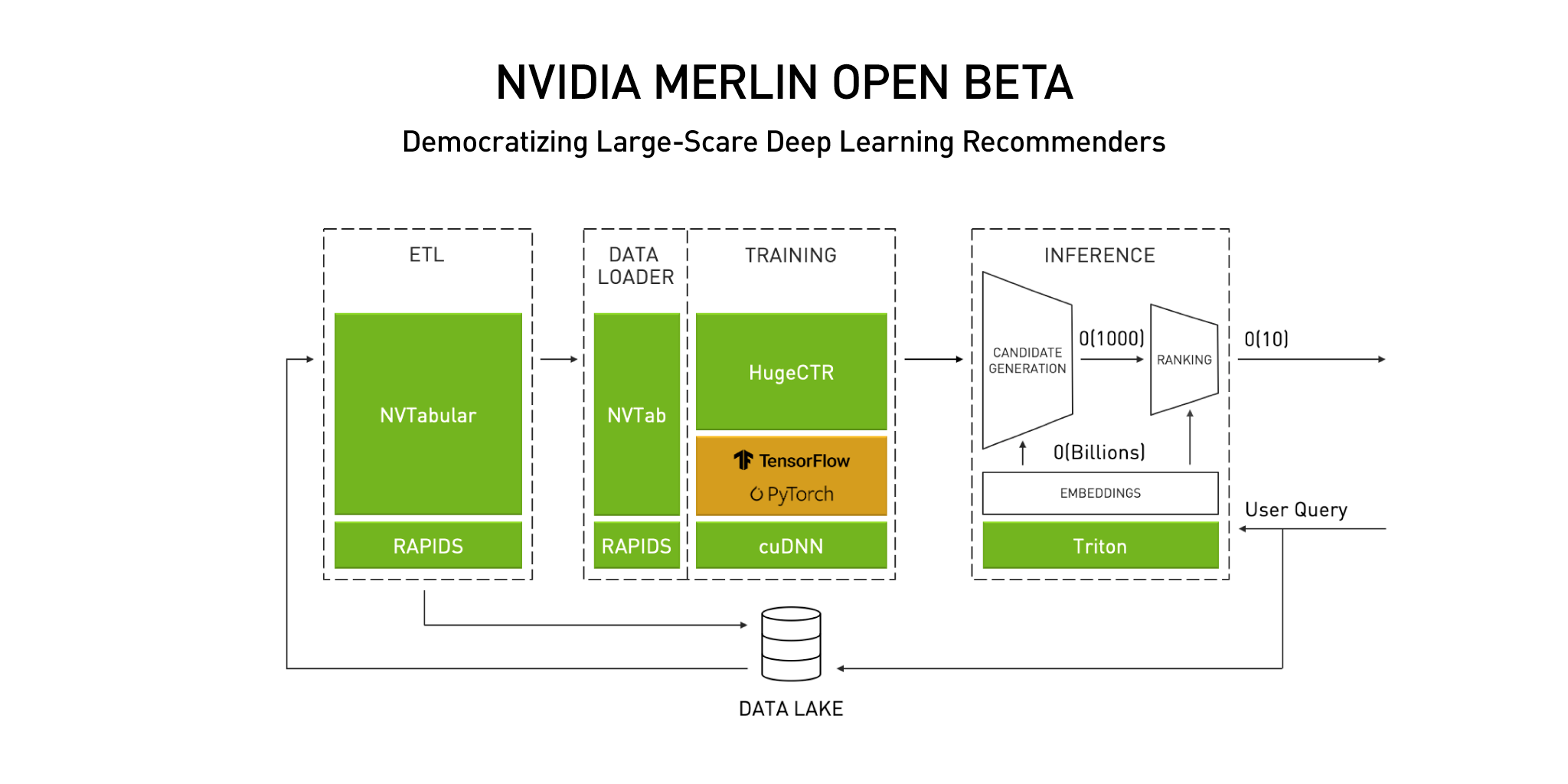

When data scientists and machine learning engineers seek to build and scale their recommenders, they often face challenges with feature engineering, preprocessing, training, and performance. Merlin is designed to address these challenges and enables data scientists and machine learning engineers to build effective recommenders with better predictions at scale. Merlin’s latest open beta update continues to show NVIDIA’s commitment to democratizing the building of deep learning recommenders and to optimizing workflows with interoperability and performance enhancements.

Merlin NVTabular

Merlin NVTabular is the feature engineering and preprocessing library designed to reduce preparation time when manipulating terabyte-sized (or larger) recommender datasets. The enhancements in the open beta update include multi-node support using Dask-cuDF, multi-hot categorical support, and a number of improvements to its custom dataloaders.

These performance enhancements help machine learning engineers and data scientists complete common feature engineering and preprocessing steps within a recommender system. Also, fresh from the NVIDIA team’s ACM RecSys 2020 Challenge win, key operators that the team used to process tabular data in the challenge are now integrated into NVTabular.

The combination of performance enhancements and new operators helps data scientists and machine learning engineers accelerate their ETL, feature engineering, dataloading, and preprocessing tasks.
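To make the multi-hot categorical support mentioned above more concrete, here is a minimal sketch of what encoding a multi-hot column involves: each row holds a variable-length list of categories, which is mapped to integer ids and flattened into a values array plus row offsets. This is plain Python for illustration only and does not use the NVTabular API; the function names `fit_vocabulary` and `encode_multi_hot`, and the sample data, are hypothetical.

```python
from typing import Dict, List, Sequence, Tuple


def fit_vocabulary(rows: Sequence[Sequence[str]]) -> Dict[str, int]:
    """Assign each distinct category an integer id in first-seen order.

    Ids start at 1; id 0 is reserved for categories not seen during fitting.
    (Hypothetical helper, not an NVTabular function.)
    """
    vocab: Dict[str, int] = {}
    for row in rows:
        for value in row:
            if value not in vocab:
                vocab[value] = len(vocab) + 1
    return vocab


def encode_multi_hot(
    rows: Sequence[Sequence[str]], vocab: Dict[str, int]
) -> Tuple[List[int], List[int]]:
    """Flatten variable-length rows into (values, offsets).

    Row i occupies values[offsets[i]:offsets[i + 1]], so empty rows are
    represented by two equal consecutive offsets.
    """
    values: List[int] = []
    offsets: List[int] = [0]
    for row in rows:
        values.extend(vocab.get(value, 0) for value in row)
        offsets.append(len(values))
    return values, offsets


# Illustrative data: each row lists the genres attached to one item.
genres = [["action", "comedy"], ["drama"], [], ["comedy", "drama", "sci-fi"]]
vocab = fit_vocabulary(genres)
values, offsets = encode_multi_hot(genres, vocab)
print(values)
print(offsets)
```

The values-plus-offsets layout is a common way to hand variable-length categorical features to an embedding lookup, since it avoids padding every row to the same length.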

Merlin HugeCTR

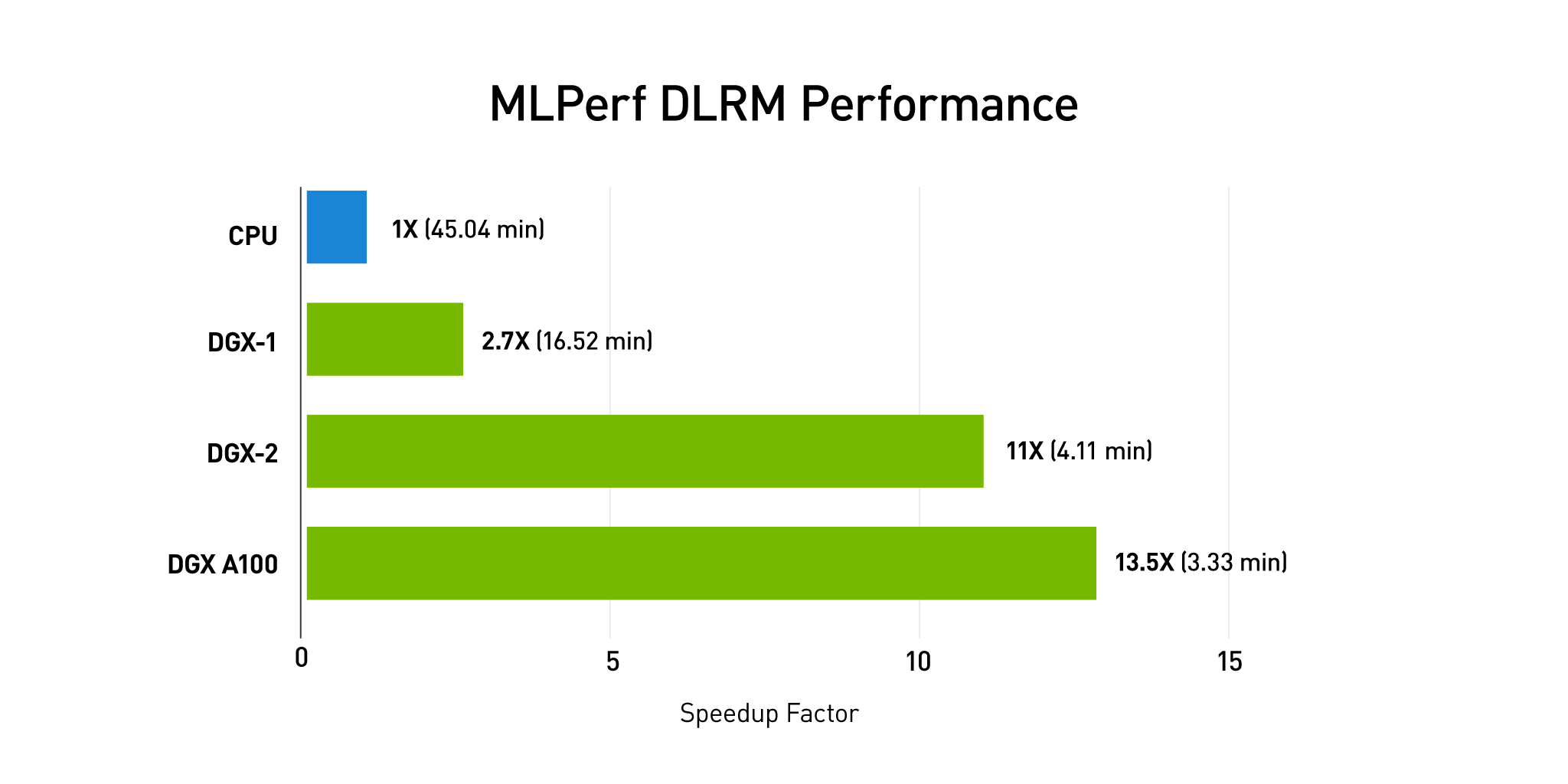

Merlin HugeCTR is a deep neural network training framework designed specifically for recommenders. It is focused on recommender training, performance, and increasing click-through rates. HugeCTR powers the fastest commercially available solution for recommender training.

In this release, HugeCTR provides ease-of-use and interoperability updates: a Python API, TensorFlow integration that improves embedding performance via custom operators, and support for model oversubscription, which enables training terabyte-sized embeddings on a single node.

HugeCTR also supports model-parallel training across multiple GPUs and multiple nodes. This latest update reaffirms NVIDIA’s commitment to freeing data scientists and machine learning engineers from having to think about how to distribute memory efficiently and how to create the individual components; HugeCTR handles that for them. HugeCTR provides key capabilities that accelerate machine learning engineers’ and data scientists’ overall workflows.
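As a rough illustration of the memory-distribution problem that model-parallel training takes off the user's hands, the sketch below splits the rows of one large embedding table across several devices and routes each lookup in a batch to the device that owns it. The modulo placement rule and the names `shard_for` and `partition_lookups` are assumptions made for this example; the source does not describe how HugeCTR actually places embeddings.

```python
from typing import Dict, List


def shard_for(category_id: int, num_devices: int) -> int:
    """Return the device that owns this embedding row.

    Assumption: simple modulo placement, chosen only for illustration.
    """
    return category_id % num_devices


def partition_lookups(
    category_ids: List[int], num_devices: int
) -> Dict[int, List[int]]:
    """Group a batch of embedding lookups by the device that owns each row."""
    per_device: Dict[int, List[int]] = {d: [] for d in range(num_devices)}
    for category_id in category_ids:
        per_device[shard_for(category_id, num_devices)].append(category_id)
    return per_device


# Illustrative batch of category ids to look up across 4 devices.
batch = [0, 5, 9, 12, 17, 5]
plan = partition_lookups(batch, num_devices=4)
for device, ids in plan.items():
    print(f"device {device}: {ids}")
```

With placement like this, no single device has to hold the full table, which is the property that makes very large embedding tables trainable; a framework then also has to gather the looked-up vectors back to where the dense layers run, which this sketch leaves out.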

Download and Try All Merlin Open Beta Components

As NVIDIA CEO Jensen Huang recently announced in his GTC keynote, NVIDIA is committed to democratizing the building of large-scale deep learning recommenders. Merlin components are open source projects and are available for download in multiple formats. For more information on Merlin and to download components of Merlin’s end-to-end deep learning recommender framework, visit the Merlin product home page.