At the International Conference on Computer Vision in Seoul, Korea, NVIDIA researchers, in collaboration with the University of Toronto, the Vector Institute, and MIT, presented Meta-Sim, a deep learning model that uses unlabeled real data (such as camera footage) to generate synthetic datasets, bridging the gap between real and synthetic training data.

Meta-Sim is a method to generate synthetic datasets that bridge the distribution gap between real and synthetic data and are optimized for downstream task performance.

The method could prove valuable in industries such as healthcare, where training data is scarce.

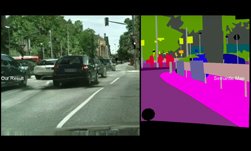

“From synthetic to real datasets, two kinds of domain gaps arise: the appearance (style) gap and the content (layout) gap,” the researchers stated. “Most existing work tackles the appearance gap, this work is an early attempt to tackle the second kind of domain gap – the content gap.”

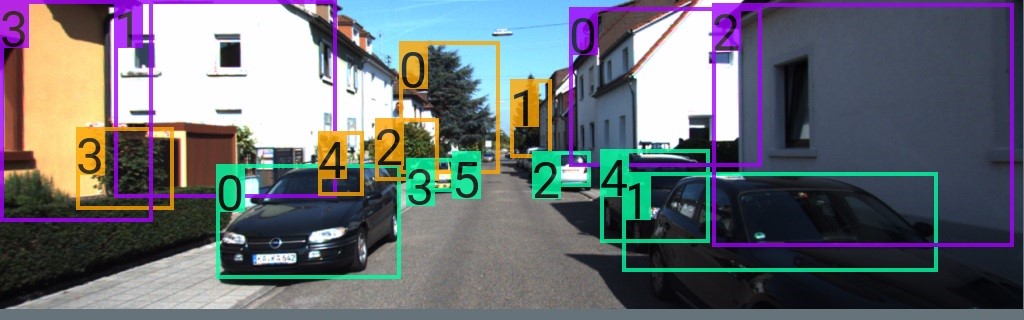

We show results of a network trained on a dataset produced by a baseline, versus a dataset produced by our method. Notice how the model trained on the dataset created by Meta-Sim leads to fewer false and missed detections.

To do this, Meta-Sim builds on procedural generation and learns to adapt the generated content to match unlabeled real data. If the real dataset also comes with a small number of labels, the team further optimizes data generation to maximize performance on a downstream task.
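The idea of adapting a procedural generator to unlabeled real data can be illustrated with a toy sketch. This is not the researchers' implementation: the one-parameter generator, the mean-matching gap measure, and the finite-difference update below are all simplifying assumptions chosen for illustration, and every function name is hypothetical.

```python
import random
import statistics


def generate_scene_attributes(mean_value, n_scenes, rng):
    """Hypothetical procedural generator: one scalar attribute per scene
    (for example, a distance or an object count treated as continuous)."""
    return [rng.gauss(mean_value, 1.0) for _ in range(n_scenes)]


def distribution_gap(synthetic, real):
    """Crude stand-in for a distribution distance: the squared
    difference between the two sample means. No labels are needed."""
    return (statistics.fmean(synthetic) - statistics.fmean(real)) ** 2


def adapt_generator(real, init_mean=2.0, steps=100, lr=0.2,
                    n_scenes=500, eps=1e-3, seed=0):
    """Tune the generator parameter so synthetic attributes match the
    unlabeled real ones, treating the generator as a black box."""

    def gap_at(mean_value):
        # Re-seeding keeps the sampling noise identical across calls,
        # so differences in the gap come only from the parameter.
        rng = random.Random(seed)
        synthetic = generate_scene_attributes(mean_value, n_scenes, rng)
        return distribution_gap(synthetic, real)

    mean_value = init_mean
    for _ in range(steps):
        # Central finite difference approximates the gradient of the gap.
        grad = (gap_at(mean_value + eps) - gap_at(mean_value - eps)) / (2 * eps)
        mean_value -= lr * grad
    return mean_value


if __name__ == "__main__":
    # Stand-in for attributes measured from unlabeled real footage.
    real_rng = random.Random(1)
    real = [real_rng.gauss(6.0, 1.0) for _ in range(500)]
    print("learned generator mean:", adapt_generator(real))
```

In this sketch the generator starts with a poorly chosen parameter and drifts toward the value that makes its output resemble the real samples. The downstream-task optimization described above would add a second objective on top of this and is not shown here.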

For this work, the team used an NVIDIA TITAN RTX for rendering and an NVIDIA DGX-2 with 16 NVIDIA V100 GPUs for training the neural networks.

“Experiments show that the proposed method can greatly improve content generation quality over a human-engineered probabilistic scene grammar, both qualitatively and quantitatively as measured by performance on a downstream task,” the researchers stated.

Synthetic datasets have emerged as a promising way to pre-train neural network models, since ground-truth annotations can be rendered by graphics engines for free.