The latest update to the NVIDIA Deep Learning SDK includes the NVIDIA TensorRT deep learning inference engine (formerly GIE, the GPU Inference Engine) and the new NVIDIA DeepStream SDK.

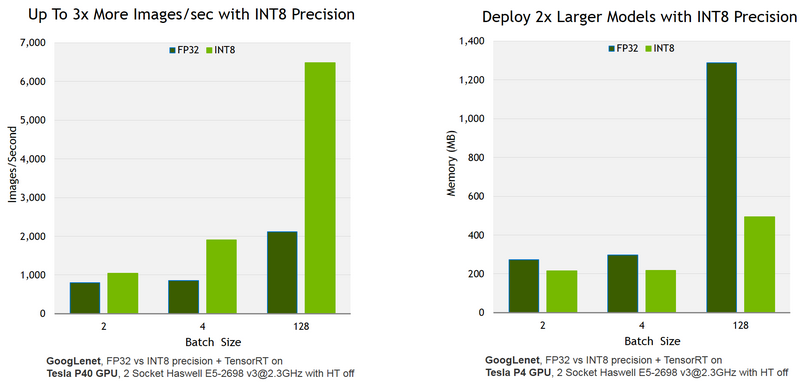

TensorRT delivers high-performance inference for the production deployment of deep learning applications. With its new INT8 optimized precision, the latest release delivers up to 3x more throughput while using 61% less memory.

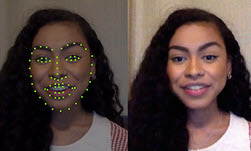

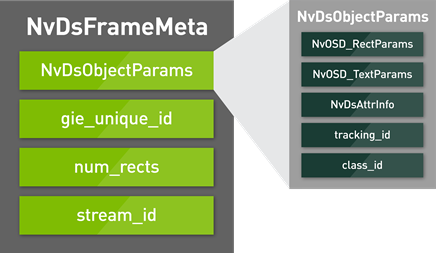

The NVIDIA DeepStream SDK simplifies the development of high-performance video analytics applications powered by deep learning. Using its high-level C++ API and high-performance runtime, developers can rapidly integrate advanced video inference capabilities, including optimized precision and GPU-accelerated transcoding. These capabilities help deliver faster, more responsive AI-powered services such as real-time video categorization.

Learn more about the NVIDIA Deep Learning SDK.

New Update to the NVIDIA Deep Learning SDK Now Helps Accelerate Inference

Sep 13, 2016

