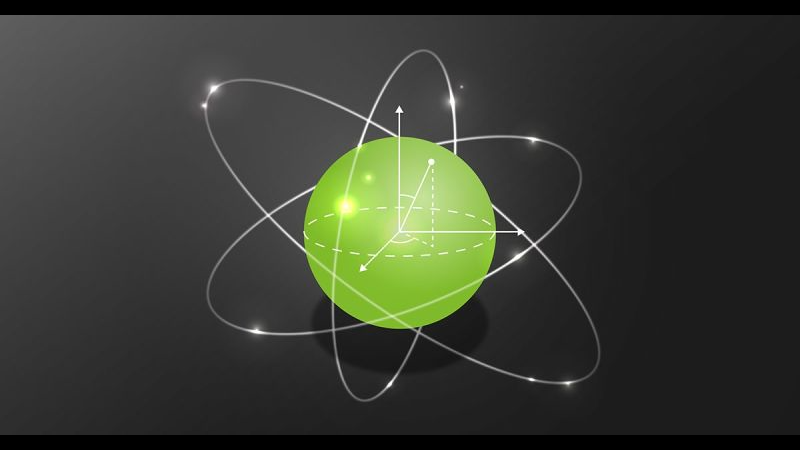

Physicists from more than a dozen institutions used the GPU-accelerated Titan supercomputer at Oak Ridge National Laboratory to calculate the lifetime of the neutron, a subatomic-scale physics problem.

The study, published in Nature this week, achieves groundbreaking precision and provides the research community with new data that could aid in the search for dark matter and answer some of the mysteries of the universe.

“We now have a purely theoretical prediction of the lifetime of the neutron, and it is the first time we can predict the lifetime of the neutron to be consistent with experiments,” said Chia Cheng, one of the lead authors of the study and a postdoctoral researcher in Berkeley Lab’s Nuclear Science Division. “Past calculations were all performed amidst this more noisy environment.”

The scientists used nearly 184 million hours of computing power on the Titan supercomputer, which is equipped with 18,688 NVIDIA Tesla GPUs. A single laptop computer would need about 600,000 years to complete the same calculations, the researchers said.
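To put the two quoted figures side by side, the short sketch below converts the laptop estimate into hours and divides it by the Titan figure. It assumes a year of continuous running (365.25 days) and treats the "hours" in the article as a single accounting unit; the article does not say whether these are node-hours, GPU-hours, or something else, so the ratio is only a rough illustration. The function and constant names are made up for this example.

```python
# Figures quoted in the article.
TITAN_HOURS = 184e6      # "nearly 184 million hours" on Titan
LAPTOP_YEARS = 600_000   # "about 600,000 years" on a single laptop

# Assumption (not from the article): the laptop runs continuously,
# and a year is taken as 365.25 days.
HOURS_PER_YEAR = 365.25 * 24


def laptop_hours_per_titan_hour(titan_hours: float, laptop_years: float) -> float:
    """Return how many laptop-hours correspond to one quoted Titan hour."""
    laptop_hours = laptop_years * HOURS_PER_YEAR
    return laptop_hours / titan_hours


ratio = laptop_hours_per_titan_hour(TITAN_HOURS, LAPTOP_YEARS)
print(f"One quoted Titan hour corresponds to about {ratio:.1f} laptop-hours")
```

Under these assumptions, each quoted Titan hour corresponds to somewhat under 30 hours of laptop time.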

“We used about a factor of 10 fewer samples than previous projects,” said André Walker-Loud, a staff scientist in Berkeley Lab’s Nuclear Science Division who led the new study. “The most computationally expensive calculations could only be performed on Titan, which enabled us to do our calculations about 100 times faster than we would have been able to do so otherwise.”

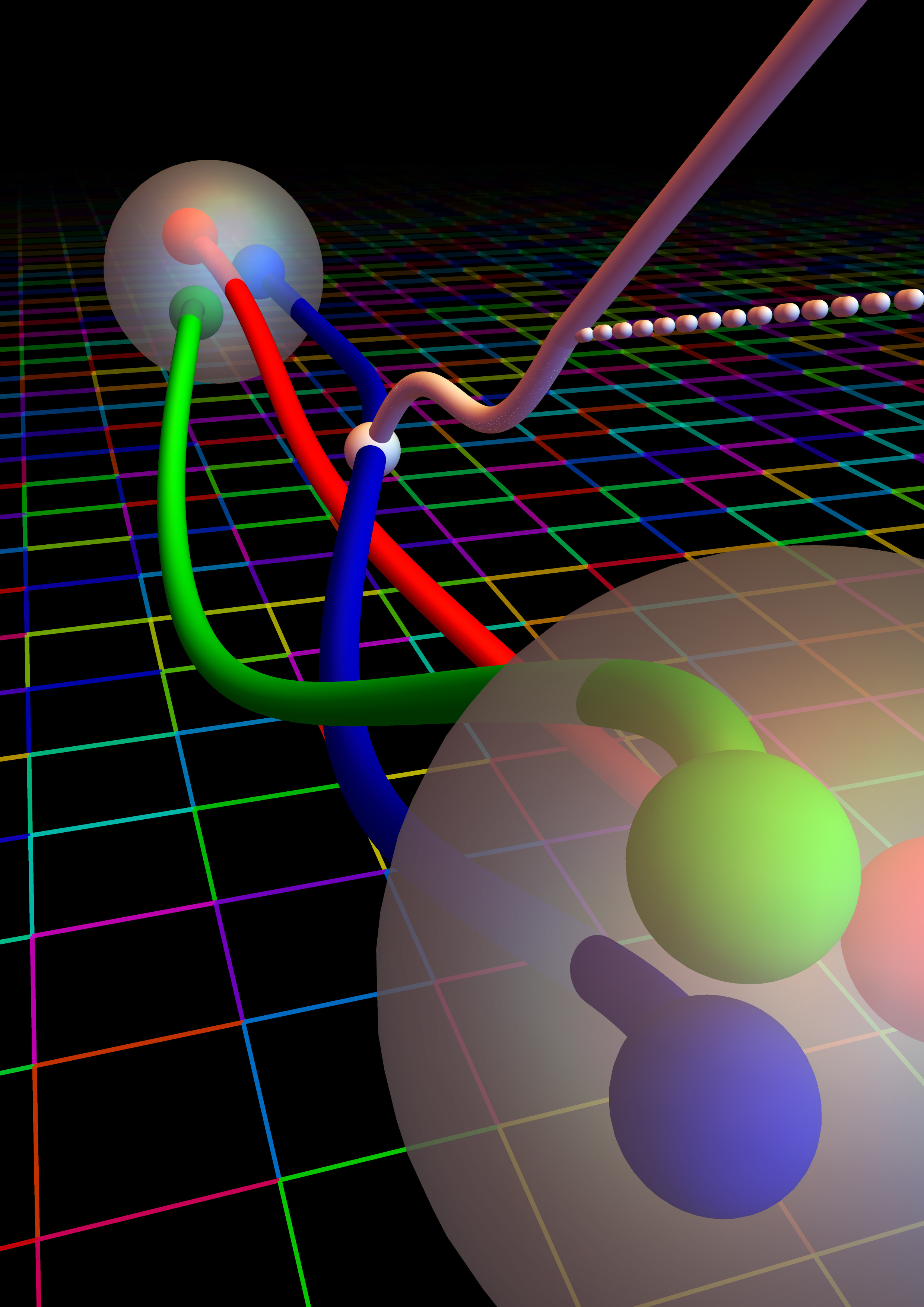

The success of the project also relied on publicly available QCD configurations (which allow researchers to model how particles move on the lattice) from the MIMD Lattice Computation (MILC) Collaboration; the lattice QCD code Chroma developed by USQCD; and QUDA, the lattice QCD library for NVIDIA GPU-accelerated compute nodes.

“Calculating gA was supposed to be one of the simple benchmark calculations that could be used to demonstrate that lattice QCD can be utilized for basic nuclear physics research, and for precision tests that look for new physics in nuclear physics backgrounds,” said Walker-Loud. “It turned out to be an exceptionally difficult quantity to determine.”

In the end, the team reduced the margin of uncertainty in its calculation to just under 1 percent.

In future iterations, the team hopes to drive that margin down to about 0.3 percent.

The research was supported by the DOE Office of Science, as well as the National Science Foundation.

Authors include researchers and staff from Lawrence Berkeley National Laboratory; University of California, Berkeley; University of North Carolina; Brookhaven National Laboratory; Lawrence Livermore National Laboratory; Forschungszentrum Jülich; University of Liverpool; College of William & Mary; Rutgers, The State University of New Jersey; University of Washington; University of Glasgow; NVIDIA Corporation; and Thomas Jefferson National Accelerator Facility.


New Physics Research Aims to Answer the Mysteries of the Universe

May 31, 2018
