Current style transfer models are large and require substantial computing resources to achieve the desired results. To speed up style transfer and make it a more widely adopted tool, researchers from NVIDIA and the University of California, Merced developed a new deep learning-based style transfer algorithm that is both effective and efficient.

The research, led by Sifei Liu and Xueting Li from NVIDIA, analyzed existing arbitrary style transfer algorithms and their extensions. The team concluded that although current algorithms perform well, they do not fully explore the space of possible transformation matrices, and they generalize poorly to other applications such as photorealistic and video stylization.

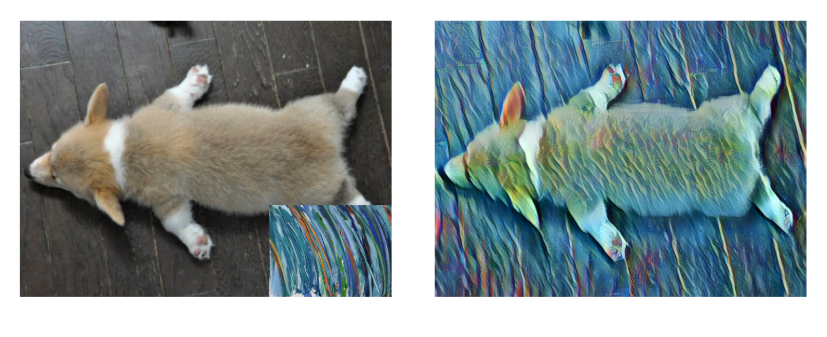

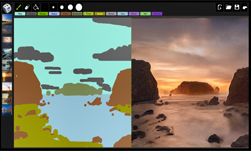

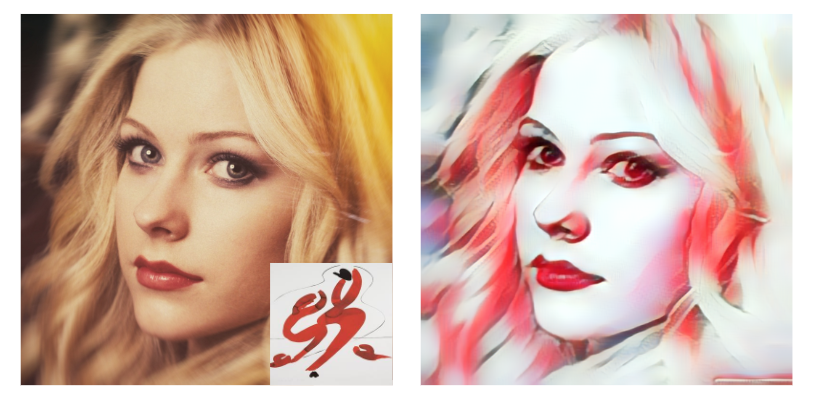

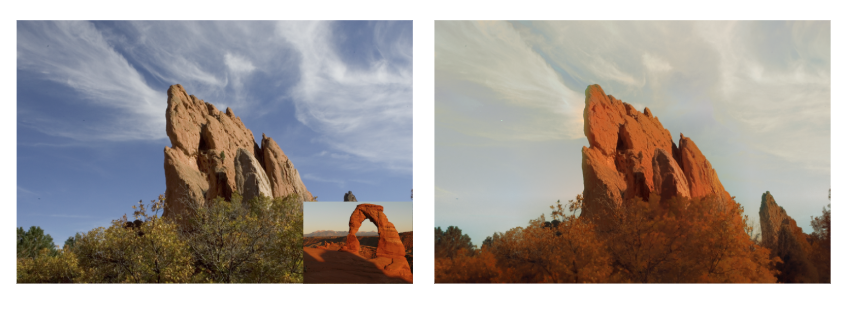

To demonstrate the effectiveness of the algorithm, the researchers tested their approach on four tasks: artistic style transfer, video style transfer, photorealistic style transfer, and domain adaptation.


“Our algorithm is computationally efficient, flexible for numerous styles, and effective for stylizing images and videos,” the researchers stated in their paper. “Usually people only use style transfer for artistic purposes, but now people can use this model to achieve photorealistic results,” Liu explained.

Using NVIDIA TITAN Xp GPUs and the cuDNN-accelerated PyTorch deep learning framework, the researchers trained a convolutional neural network on 80,000 images of people, scenery, animals, and moving objects, drawn from the WikiArt and MS-COCO datasets.

“Our algorithm is highly efficient yet allows a flexible combination of multi-level styles while preserving content affinity during style transfer process,” the researchers said.


At the crux of this work is a linear style transfer algorithm that learns the transformation matrix used to restyle an image's features. Two lightweight convolutional neural networks produce this transformation, replacing GPU-unfriendly computations such as singular value decomposition (SVD). Because of this, users can apply different levels of style changes in real time.
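To make the idea of a linear style transformation concrete, here is a minimal NumPy sketch, assuming content and style features are already available as C-by-N matrices (channels by spatial positions) from some image encoder. It builds the C-by-C matrix `T` in closed form with an eigendecomposition, which is exactly the kind of GPU-unfriendly step the researchers replace with learned networks. The function names `linear_transfer` and `_sqrt_and_inv_sqrt` are hypothetical, and this is an illustration of the general technique, not the authors' implementation.

```python
import numpy as np


def _sqrt_and_inv_sqrt(cov, eps=1e-5):
    """Return cov^(1/2) and cov^(-1/2) for a symmetric positive semi-definite matrix."""
    # Symmetric eigendecomposition: cov = V diag(w) V^T
    w, v = np.linalg.eigh(cov)
    # Clip tiny eigenvalues so the inverse square root stays finite
    w = np.clip(w, eps, None)
    sqrt = (v * np.sqrt(w)) @ v.T
    inv_sqrt = (v / np.sqrt(w)) @ v.T
    return sqrt, inv_sqrt


def linear_transfer(content, style, eps=1e-5):
    """Map content features (C x Nc) onto the mean and covariance of style features (C x Ns).

    Returns the stylized features and the single C x C transformation matrix T.
    """
    mu_c = content.mean(axis=1, keepdims=True)
    mu_s = style.mean(axis=1, keepdims=True)
    fc = content - mu_c  # centered content features
    fs = style - mu_s    # centered style features

    # Channel covariance matrices (unbiased, N - 1 in the denominator)
    cov_c = fc @ fc.T / (fc.shape[1] - 1)
    cov_s = fs @ fs.T / (fs.shape[1] - 1)

    _, cov_c_inv_sqrt = _sqrt_and_inv_sqrt(cov_c, eps)
    cov_s_sqrt, _ = _sqrt_and_inv_sqrt(cov_s, eps)

    # Whitening followed by coloring collapses into one linear map
    T = cov_s_sqrt @ cov_c_inv_sqrt
    return T @ fc + mu_s, T


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    content = rng.normal(size=(8, 500))
    mix = 2.0 * np.eye(8) + 0.5 * rng.normal(size=(8, 8))
    style = mix @ rng.normal(size=(8, 300)) + 3.0
    stylized, T = linear_transfer(content, style)
    # The stylized features should now carry the style's covariance
    print("max covariance error:", np.abs(np.cov(stylized) - np.cov(style)).max())
```

Once `T` is known, stylizing a feature map is a single matrix product, which is why a learned network that predicts `T` directly (rather than computing it with an eigendecomposition or SVD, as this sketch does) can run fast enough for real-time use.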


“Our solution also allows people to alter a video in real time. You can use numerous patterns to find the style that works best for you,” Liu explained.

“Experimental results demonstrate that the proposed algorithm performs favorably against many state-of-the-art methods on style transfer of images and videos,” the team explained in the paper.

“I think this will encourage content producers to create more. Maybe people who aren’t good at painting will use style transfer to create art,” said Liu. “I hope real-time arbitrary style transfer becomes more prominent in real-life applications. Imagine if you could put it on a VR headset and implement it in real-time rendering,” she added.


The research was shown at SIGGRAPH in Vancouver, Canada, this week.