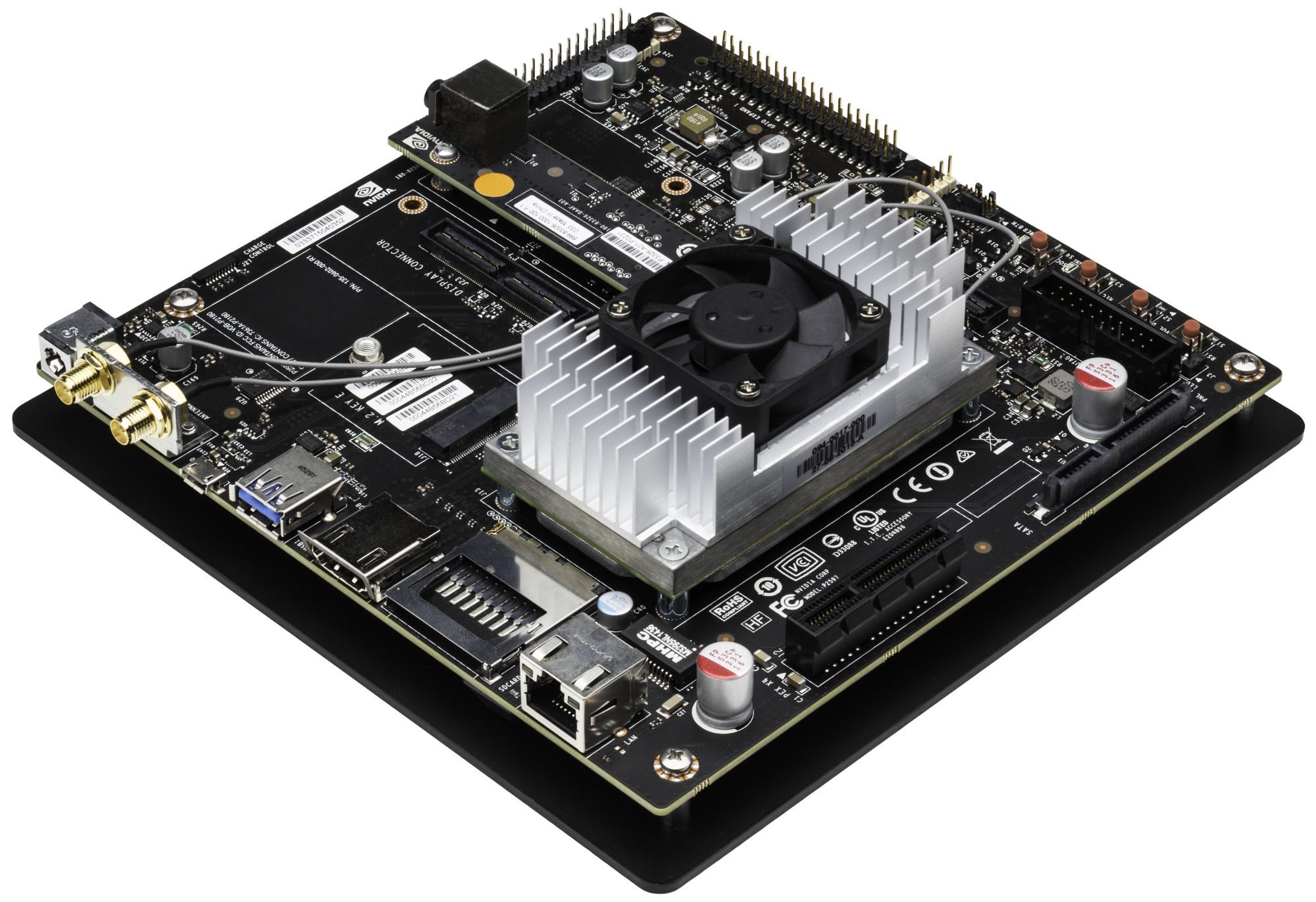

Today NVIDIA released a major update of the JetPack SDK with new developer tools and libraries that double the performance of deep learning applications on the Jetson TX1 Developer Kit, the world’s highest-performance platform for deep learning on embedded systems.

JetPack 2.3 is available as a free download and is focused on making it easier for developers to add complex AI and deep learning capabilities to intelligent machines. This update includes the new TensorRT deep learning inference engine, CUDA 8 and cuDNN 5.1, and tighter camera and multimedia integration.

In addition, NVIDIA announced a new partnership with Leopard Imaging Inc., a Jetson Preferred Partner that specializes in the creation of camera solutions. The new camera API included in the JetPack 2.3 release delivers enhanced functionality to ease developer integration.

Download JetPack 2.3 today.

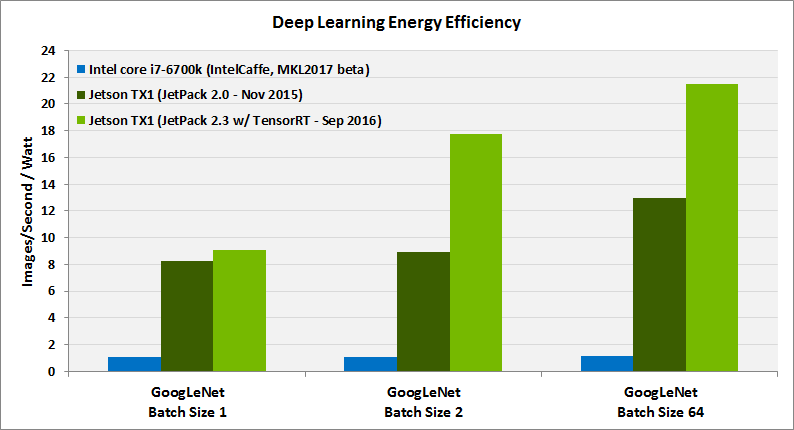

Chart footnotes:

- The efficiency was measured using the methodology outlined in the whitepaper.

- Jetson TX1 efficiency was measured at a GPU frequency of 691 MHz.

- Intel Core i7-6700K efficiency was measured at a 4 GHz CPU clock.

- GoogLeNet batch size was limited to 64 because that is the maximum that could run with JetPack 2.0. With JetPack 2.3 and TensorRT, a GoogLeNet batch size of 128 is also supported for higher performance.

- FP16 results for Jetson TX1 are comparable to FP32 results for Intel Core i7-6700K, as FP16 incurs no classification accuracy loss over FP32.

- The latest publicly available versions of IntelCaffe and the MKL 2017 beta were used.

- For JetPack 2.0 and Intel Core i7, non-zero data was used for both weights and input images. For JetPack 2.3 (TensorRT), real images and weights were used.