Today at the Computer Vision and Pattern Recognition (CVPR) conference, we’re making the release candidate of Kubernetes on NVIDIA GPUs freely available to developers for feedback and testing.

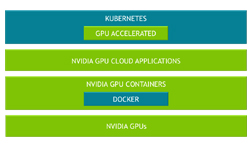

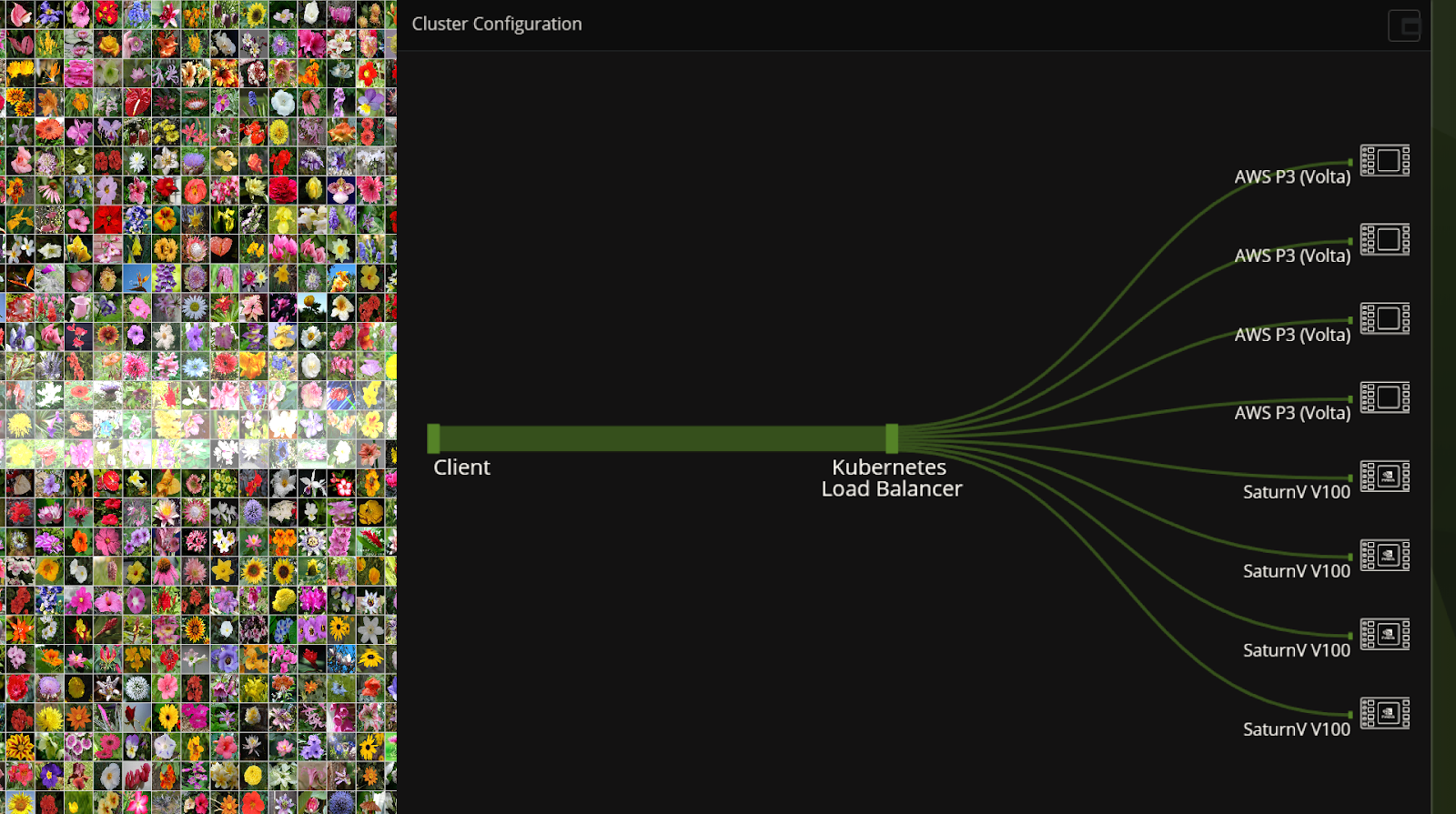

Kubernetes on NVIDIA GPUs enables enterprises to scale up training and inference deployment to multi-cloud GPU clusters seamlessly. It lets you automate the deployment, maintenance, scheduling, and operation of multiple GPU-accelerated application containers across clusters of nodes.

With the increasing number of AI-powered applications and services and the broad availability of GPUs in the public cloud, there is a need for open-source Kubernetes to be GPU-aware. With Kubernetes on NVIDIA GPUs, software developers and DevOps engineers can build and deploy GPU-accelerated deep learning training or inference applications to heterogeneous GPU clusters at scale.
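To make "GPU-aware" concrete, here is a minimal sketch of how a workload might ask the scheduler for GPUs. It assumes the cluster advertises GPUs under the extended resource name `nvidia.com/gpu`, which is what NVIDIA's device plugin for upstream Kubernetes uses; the release candidate may expose additional or different attributes. The helper `gpu_pod_manifest`, the pod name, and the image URL are hypothetical and only illustrate the shape of the request.

```python
import json


def gpu_pod_manifest(name: str, image: str, gpus: int = 1) -> dict:
    """Build a minimal Pod manifest that requests `gpus` GPUs.

    Assumption: GPUs are exposed as the extended resource "nvidia.com/gpu".
    """
    if gpus < 1:
        raise ValueError("gpus must be at least 1")
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "restartPolicy": "OnFailure",
            "containers": [
                {
                    "name": name,
                    "image": image,
                    # Extended resources such as GPUs are requested under limits.
                    "resources": {"limits": {"nvidia.com/gpu": gpus}},
                }
            ],
        },
    }


if __name__ == "__main__":
    # Hypothetical pod name and image, used only for illustration.
    manifest = gpu_pod_manifest(
        "gpu-inference", "example.com/my-inference:latest", gpus=2
    )
    print(json.dumps(manifest, indent=2))
```

The printed JSON can be saved to a file and submitted like any other manifest, since `kubectl` accepts JSON as well as YAML. The scheduler then places the pod only on a node with enough unallocated GPUs.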

Key benefits include:

- Orchestrate resources on heterogeneous GPU clusters

- Optimize GPU cluster utilization with active health monitoring

- Tested, validated and maintained by NVIDIA
