Today at the Computer Vision and Pattern Recognition Conference (CVPR) in Salt Lake City, Utah, NVIDIA is opening the event by demonstrating an early release of Apex, an open-source PyTorch extension that helps users maximize deep learning training performance on NVIDIA Volta GPUs.

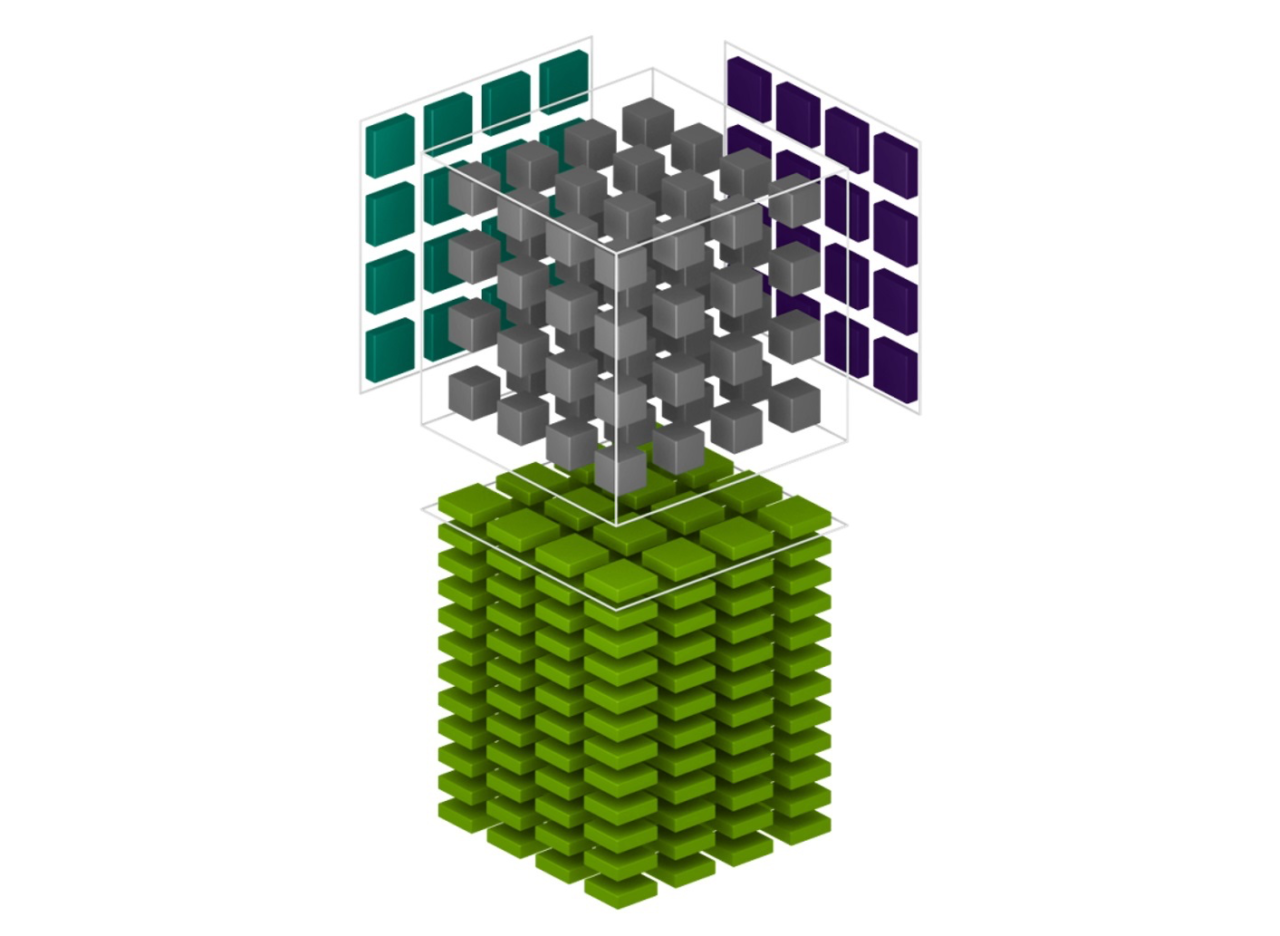

Inspired by state-of-the-art mixed precision training in translation networks, sentiment analysis, and image classification, NVIDIA PyTorch developers have created tools that bring these methods to PyTorch users at all levels. The mixed precision utilities in Apex are designed to improve training speed while maintaining the accuracy and stability of single-precision training. Specifically, Apex offers automatic execution of operations in either FP16 or FP32, automatic handling of master parameter conversion, and automatic loss scaling, all available by changing four or fewer lines of existing code.
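To make the ideas of loss scaling and master parameters more concrete, here is a minimal sketch in plain Python. It is not Apex's API or implementation: `to_fp16`, `DynamicLossScaler`, and `train_step` are hypothetical names chosen for illustration, FP16 is emulated with the standard library's half-precision `struct` format, and ordinary Python floats stand in for the higher-precision master copy of the weights. The halve-on-overflow, double-after-a-run-of-good-steps policy is one common scheme and is assumed here, not taken from the Apex source.

```python
import math
import struct


def to_fp16(x: float) -> float:
    """Round a Python float to the nearest IEEE half-precision value."""
    try:
        return struct.unpack("e", struct.pack("e", x))[0]
    except OverflowError:
        # Magnitude exceeds the FP16 range, so it saturates to infinity.
        return math.copysign(math.inf, x)


class DynamicLossScaler:
    """Hypothetical scaler: halve on overflow, double after a run of good steps."""

    def __init__(self, init_scale: float = 2.0 ** 16, growth_interval: int = 1000):
        self.scale = init_scale
        self.growth_interval = growth_interval
        self._good_steps = 0

    def update(self, overflow: bool) -> None:
        if overflow:
            self.scale /= 2.0
            self._good_steps = 0
        else:
            self._good_steps += 1
            if self._good_steps % self.growth_interval == 0:
                self.scale *= 2.0


def train_step(master_weights, true_grads, scaler, lr=0.1) -> bool:
    """Apply one SGD step; return True if the step was skipped due to overflow."""
    # Gradients of the scaled loss, as they would appear when stored in FP16.
    scaled = [to_fp16(g * scaler.scale) for g in true_grads]
    overflow = any(math.isinf(g) or math.isnan(g) for g in scaled)
    if not overflow:
        # Unscale in higher precision and update the master copy of the weights.
        for i, g in enumerate(scaled):
            master_weights[i] -= lr * (g / scaler.scale)
    scaler.update(overflow)
    return overflow
```

In this emulation, a gradient of `1e-8` rounds to zero when stored directly in FP16, but survives when it is multiplied by the scale first and divided by it again in higher precision. A gradient that overflows under the current scale causes the step to be skipped and the scale to be reduced, which is the behavior "automatic loss scaling" refers to in general terms.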

Installation requires CUDA 9, PyTorch 0.4 or later, and Python 3. The modules and utilities are still under active development, and we look forward to your feedback to make them even better. Users can download the code and get started with documentation, tutorials, and examples on our GitHub page. You can also learn more about the technical details behind mixed precision in the GTC 2018 session: Training Neural Networks with Mixed Precision: Real Examples.

Introducing Apex: PyTorch Extension with Tools to Realize the Power of Tensor Cores

Jun 19, 2018
