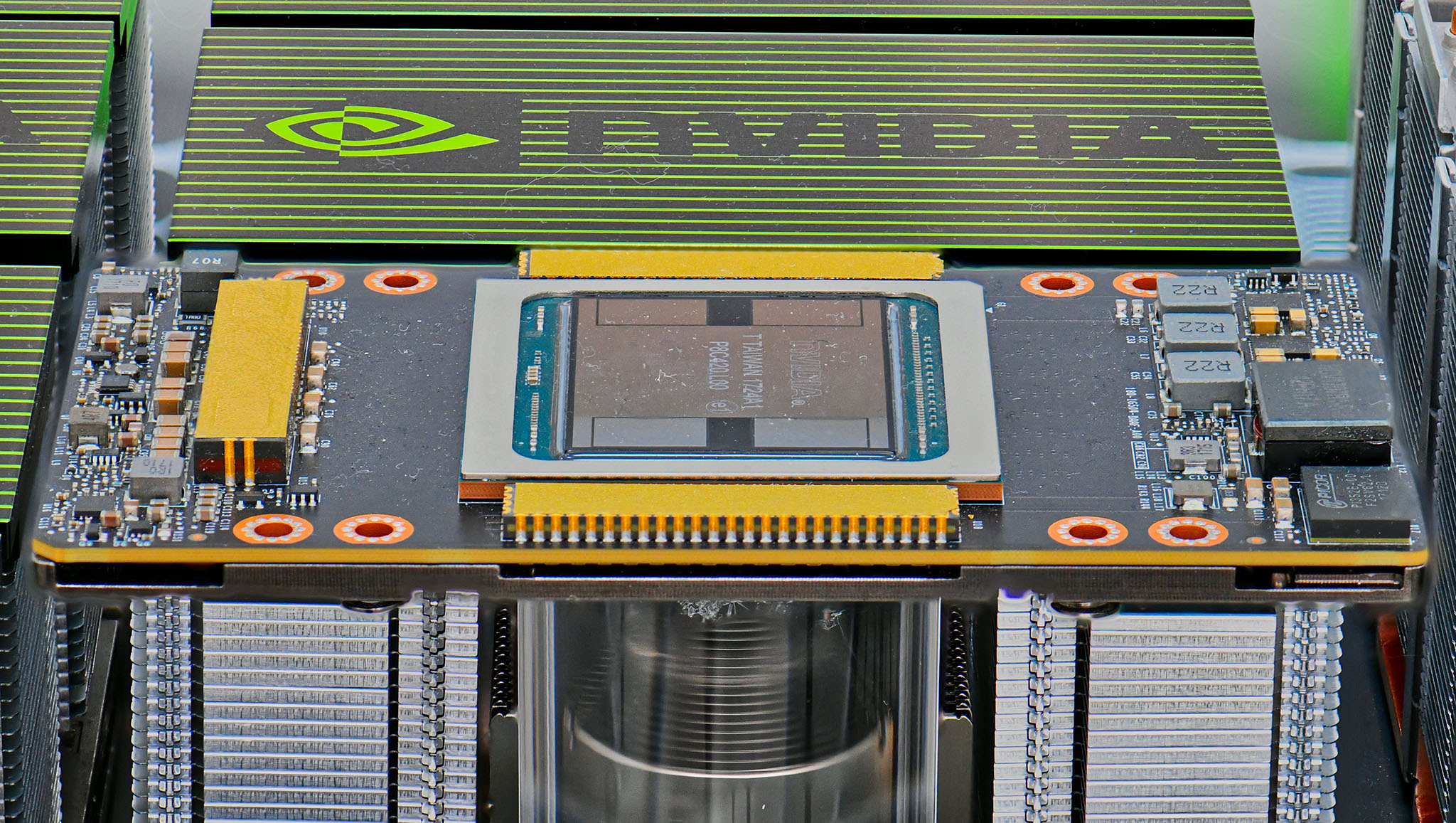

At this week’s Computer Vision and Pattern Recognition conference, NVIDIA demonstrated how one Tesla V100 running NVIDIA TensorRT can perform a common inferencing task 100X faster than a system without GPUs.

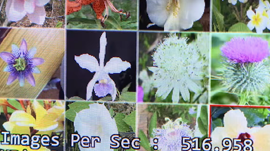

In the video below, the CPU-only Intel Skylake-based system (on the left) classifies five flower images per second with a trained ResNet-152 classification network. That speed comfortably outpaces human capability.

By contrast, a single V100 GPU (on the right) can classify a dizzying 527 flower images per second, returning results with less than 7 milliseconds of latency — a superhuman feat.

While a 100X speedup is impressive, that’s only half the equation. What are the costs associated with moving as fast as possible, or what we here at NVIDIA call “speed of light”?

Remarkably, moving faster means lower costs. One NVIDIA GPU-enabled system doing the same work as 100 CPU-only systems means 100 times fewer cloud servers to rent or buy.
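To make the arithmetic concrete, here is a minimal back-of-the-envelope sketch in Python. The two throughput figures come from the demo described above. The target workload of 10,000 images per second and the helper names `speedup` and `servers_needed` are illustrative assumptions, not part of the demo. The sketch also assumes throughput scales linearly with the number of servers, which real deployments may not achieve.

```python
import math

# Throughput figures quoted from the demo.
CPU_IMAGES_PER_SEC = 5      # CPU-only Intel Skylake-based system
GPU_IMAGES_PER_SEC = 527    # one Tesla V100 running TensorRT


def speedup(fast_rate: float, slow_rate: float) -> float:
    """Ratio of two throughputs (images per second)."""
    return fast_rate / slow_rate


def servers_needed(target_rate: float, per_server_rate: float) -> int:
    """Servers required to sustain target_rate, assuming linear scaling."""
    return math.ceil(target_rate / per_server_rate)


ratio = speedup(GPU_IMAGES_PER_SEC, CPU_IMAGES_PER_SEC)

# Hypothetical workload, chosen only for illustration.
TARGET_IMAGES_PER_SEC = 10_000

cpu_servers = servers_needed(TARGET_IMAGES_PER_SEC, CPU_IMAGES_PER_SEC)
gpu_servers = servers_needed(TARGET_IMAGES_PER_SEC, GPU_IMAGES_PER_SEC)

print(f"Speedup: {ratio:.1f}x")
print(f"CPU-only servers needed: {cpu_servers}")
print(f"V100 servers needed: {gpu_servers}")
```

Under these assumptions the measured ratio is about 105X, which the post rounds to 100X, and the hypothetical workload needs 2,000 CPU-only servers versus 19 single-GPU servers.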

NVIDIA TensorRT is available to members of the NVIDIA Developer Program as a free download to speed up AI inference on NVIDIA GPUs in the data center, in automobiles and in robots, drones and other devices at the edge.

