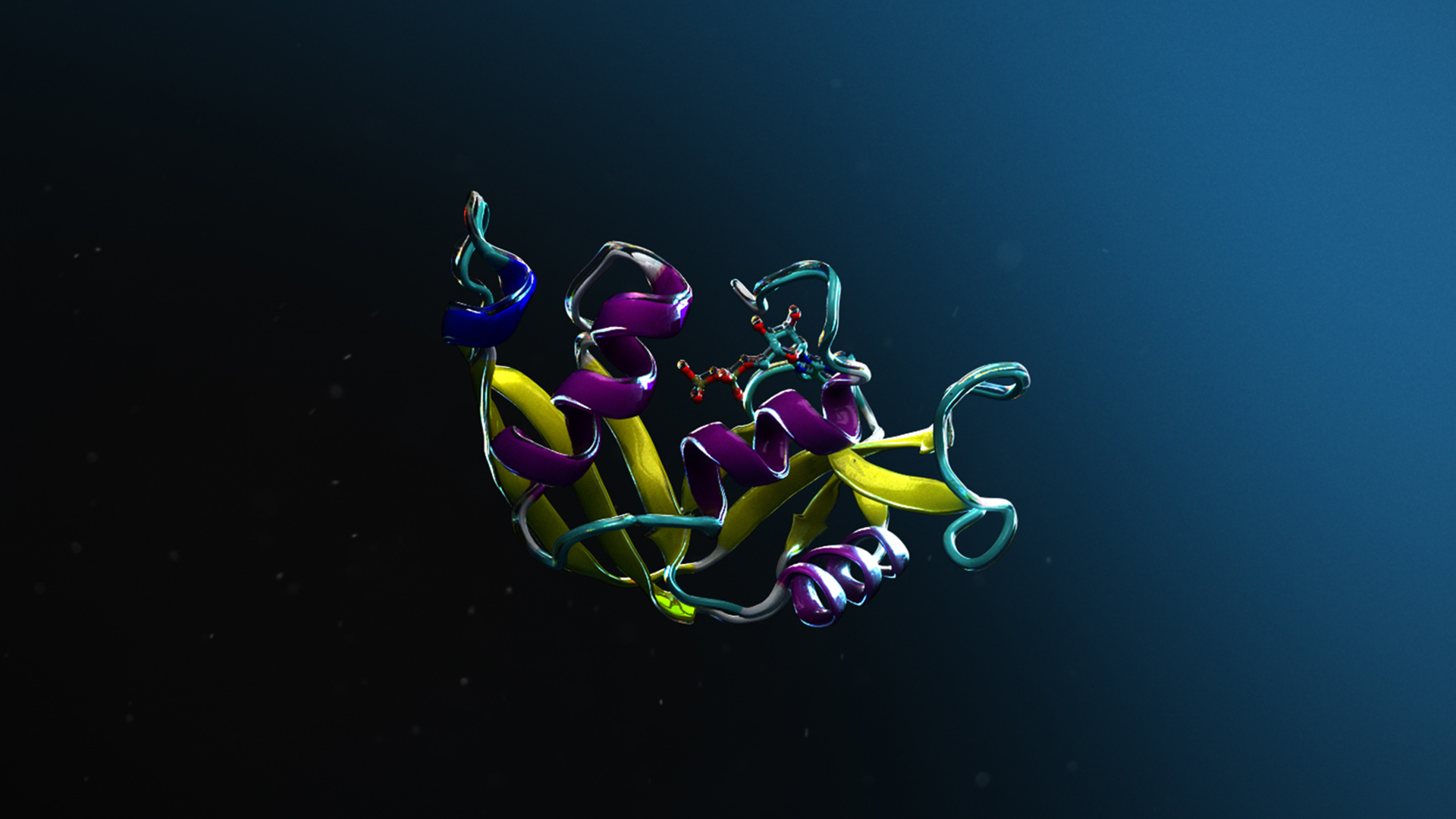

New York City-based startup TheTake, a member of the NVIDIA Inception program, recently unveiled a new deep learning-based algorithm that can automatically decode what a celebrity, athlete, or other public figure is wearing in a video in near real time.

“TheTake’s mission is simple: making media content shoppable,” said Jared Browarnik, the company’s Co-founder and Chief Technology Officer. “The deep learning technology we use is incredibly complex – it takes a concert of machine learning models working in tandem to accomplish that mission. And only by leveraging the power of NVIDIA’s GPUs are we able to train and deploy our models quickly and efficiently.”

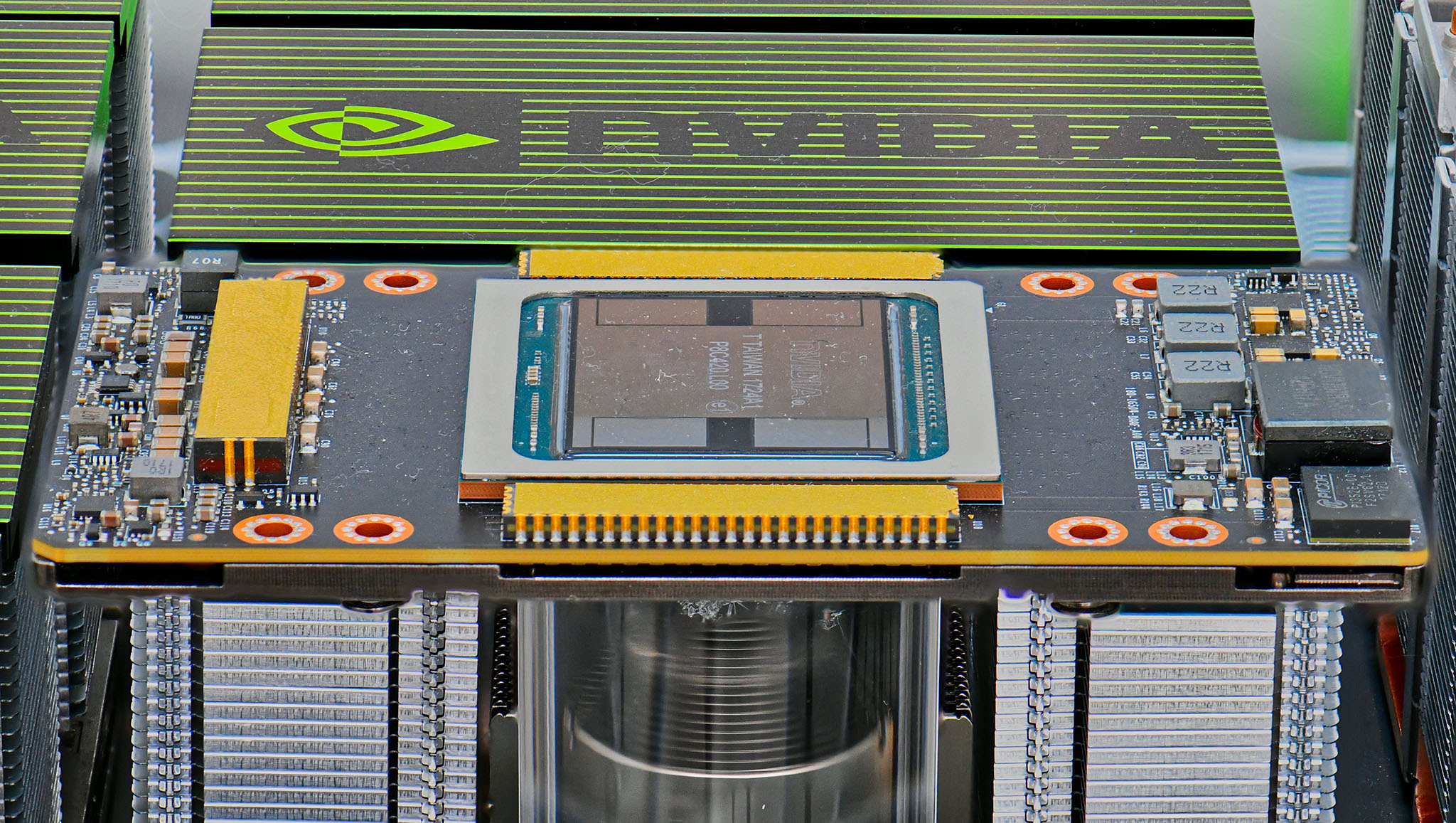

Using NVIDIA Tesla GPUs on the Amazon Web Services (AWS) cloud, with the cuDNN-accelerated Caffe and TensorFlow deep learning frameworks and FP16 mixed precision, the company trained a deep neural network on millions of images from its own human-curated dataset of movies and shows.

Once deployed, TheTake runs its models on NVIDIA V100 GPUs on AWS.

“We use NVIDIA GPUs on AWS for inference, which is significantly more cost effective than the alternative of running on a CPU,” Browarnik said. “We’re also required to support use cases that necessitate near real-time inference, which would be nearly impossible without the speed of running on a GPU.”

What makes the system unique is that it can be integrated into smart TVs and into the set-top boxes of satellite and cable providers, allowing millions of viewers to access the deep learning-based system. The tool can also be added to mobile apps.

Right now, TheTake’s database includes over 80 million items that the system can recognize.