NVIDIA announced that Facebook will accelerate its next-generation computing system with the NVIDIA Tesla Accelerated Computing Platform, which will enable the company to drive a broad range of machine learning applications.

Facebook is the first company to train deep neural networks on the new Tesla M40 GPUs, introduced last month. The GPUs will play a large role in its new open source “Big Sur” computing platform, a system purpose-built by Facebook AI Research (FAIR) for neural network training.

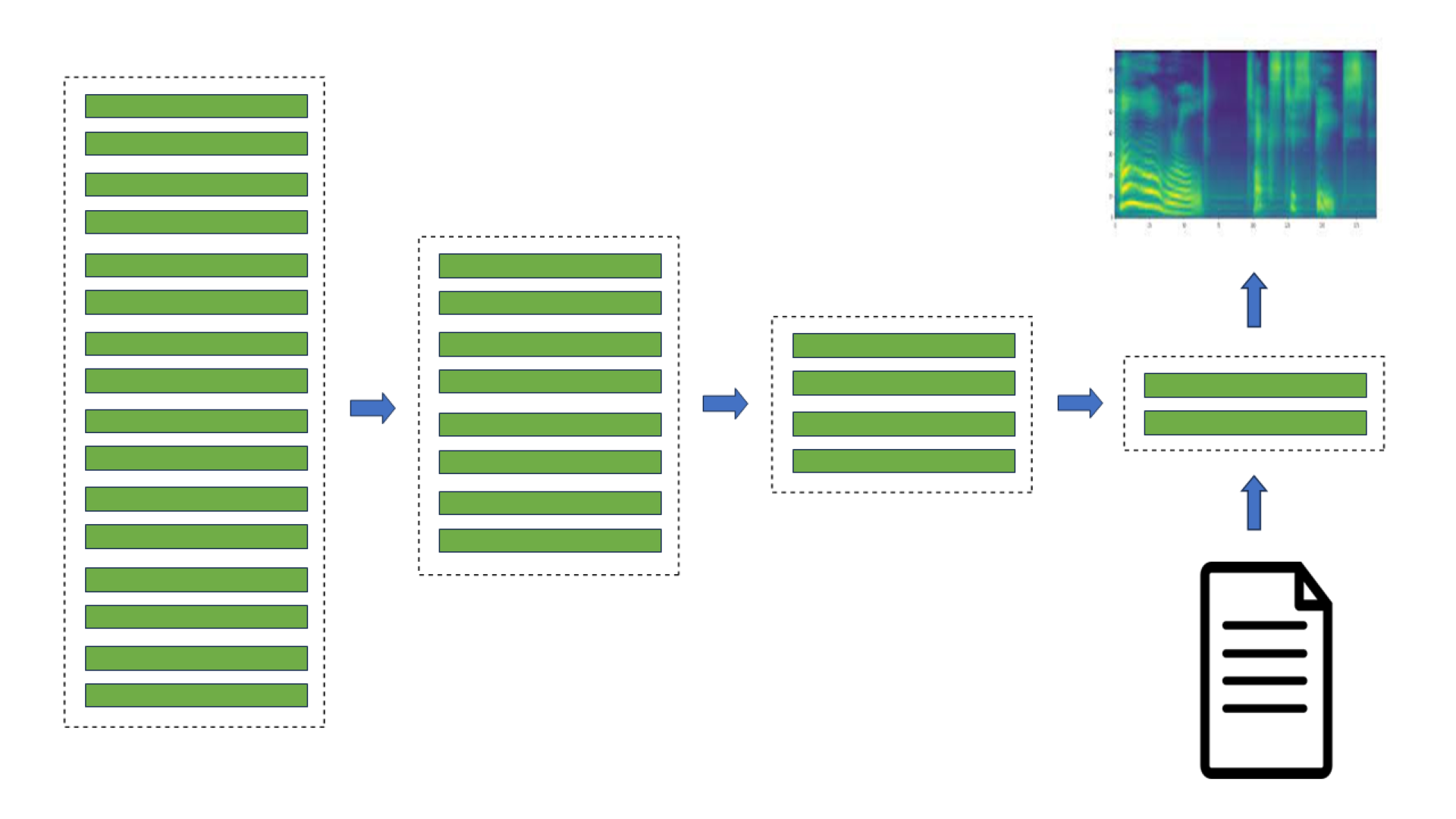

Training the sophisticated deep neural networks that power applications such as speech translation and autonomous vehicles requires a massive amount of computing performance.

With GPUs cutting training times from weeks to hours, it’s not surprising that nearly every leading machine learning researcher and developer is turning to the Tesla Accelerated Computing Platform and the NVIDIA Deep Learning software development kit.

A recent WIRED article explains how GPUs have proven remarkably adept at deep learning and how large web companies such as Facebook, Google and Baidu are shifting their computationally intensive applications to GPUs.

The artificial intelligence race is on, and it’s powered by GPU-accelerated machine learning.

Read more on the NVIDIA blog >>

How GPUs are Revolutionizing Machine Learning

Dec 10, 2015

