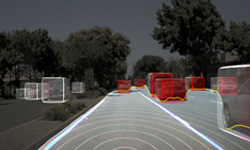

Autonomous vehicles are complex systems that must perceive their surroundings quickly and accurately to make decisions in real time. This capability calls for high-performance AI compute to handle the wide range of tasks required for autonomous driving, including multi-stage sensor data processing, obstacle detection, and traffic sign and lane recognition.

With the CUDA toolkit and the TensorRT inference runtime, autonomous vehicles running on the NVIDIA DRIVE AGX platform can meet the strict latency requirements of autonomous driving. Developers can learn how to optimize their applications using this high-performance hardware and software combination in an upcoming three-part webinar series beginning later this month.

Optimizing DNN Inference Using CUDA and TensorRT on NVIDIA DRIVE AGX

- Date: Tuesday, October 22, 2019

- Time: 9:00 AM PDT / 6:00 PM PDT

- Register here

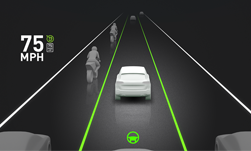

A vehicle traveling at 65 mph covers about 95 feet per second. At highway speeds, a delay of even a fraction of a second in perception and decision making can have severe consequences.
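As a rough check of the arithmetic above, the following minimal sketch converts a speed in miles per hour to feet per second and estimates how far a vehicle travels during a given processing delay. The function names and the example delays of 10, 50, and 100 ms are illustrative assumptions, not figures from NVIDIA.

```python
FEET_PER_MILE = 5280
SECONDS_PER_HOUR = 3600


def mph_to_feet_per_second(speed_mph: float) -> float:
    """Convert a speed in miles per hour to feet per second."""
    return speed_mph * FEET_PER_MILE / SECONDS_PER_HOUR


def distance_during_delay_ft(speed_mph: float, delay_s: float) -> float:
    """Distance in feet covered at constant speed during a delay in seconds."""
    return mph_to_feet_per_second(speed_mph) * delay_s


if __name__ == "__main__":
    speed_mph = 65.0
    print(f"{speed_mph:.0f} mph = {mph_to_feet_per_second(speed_mph):.1f} ft/s")

    # Hypothetical end-to-end delays, chosen only for illustration.
    for delay_ms in (10, 50, 100):
        feet = distance_during_delay_ft(speed_mph, delay_ms / 1000)
        print(f"{delay_ms:>3} ms delay -> {feet:.2f} ft traveled")
```

At 65 mph this works out to roughly 95.3 feet per second, so every additional 100 ms of delay corresponds to about 9.5 feet of travel before the vehicle can react.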

At any speed, autonomous vehicles must be able to process what can amount to multiple gigabytes of perception data per second from a variety of sensors, and respond to it in real time, to drive safely.

Core Compute

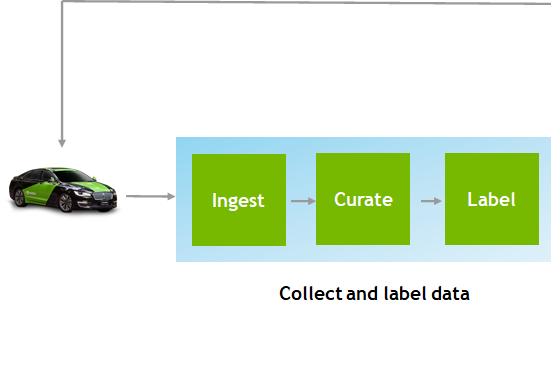

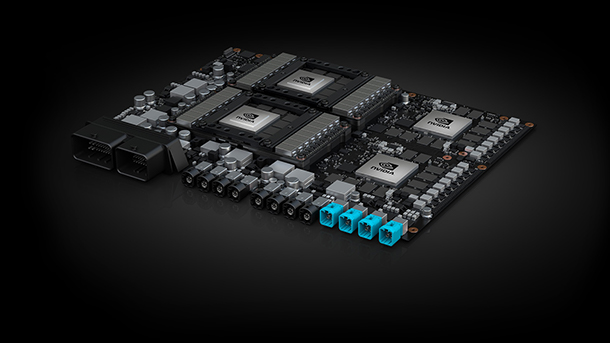

The scalable DRIVE AGX platform provides high-performance compute and the software stack for automated and autonomous driving applications, from Level 2+ assisted driving to fully driverless robotaxis.

At the core of DRIVE AGX is the Xavier system-on-a-chip (SoC), the world’s first processor for autonomous driving and the most complex SoC ever created. Its 9 billion transistors enable Xavier to process vast amounts of driving data.

Software Speed Up

Software makes up the other half of the autonomous driving equation. NVIDIA DRIVE Software is a full software stack for self-driving cars. The platform is open, enabling developers to integrate their own applications into the software solution.

Within the software stack, NVIDIA has developed a suite of tools that let applications fully utilize the compute capabilities of Xavier and meet the latency and throughput requirements of autonomous driving.

CUDA is a parallel computing platform and programming model developed by NVIDIA for general-purpose computing on GPUs. CUDA supports AArch64 platforms (AArch64 is the 64-bit execution state introduced in the Armv8-A architecture), so developers with parallelizable compute workloads can achieve dramatic speedups on NVIDIA DRIVE AGX.

Alongside CUDA, NVIDIA TensorRT provides a platform for high-performance deep learning inference. It includes a hardware-aware inference optimizer and a runtime that deliver low latency and high throughput for deep learning inference applications. On NVIDIA DRIVE AGX, TensorRT can also target the specialized Deep Learning Accelerators (DLAs) on the Xavier SoC.

The combination of CUDA and TensorRT enables DNNs running on the vehicle to process sensor inputs at high speed. This makes it possible for vehicles to process data from a variety of sensors in real time for Level 4 and Level 5 autonomous driving, which requires no supervision from a human driver.

To learn more about optimizing DNN inference using CUDA and TensorRT on DRIVE AGX, register for the first webinar in the series on Oct. 22 here.