Last Thursday at the International Conference on Machine Learning (ICML) in New York, Facebook announced a new piece of open source software aimed at streamlining and accelerating deep learning research. The software, named Torchnet, provides developers with a consistent set of widely used deep learning functions and utilities. Torchnet allows developers to write code in a consistent manner, which speeds development and promotes code re-use both between experiments and across multiple projects.

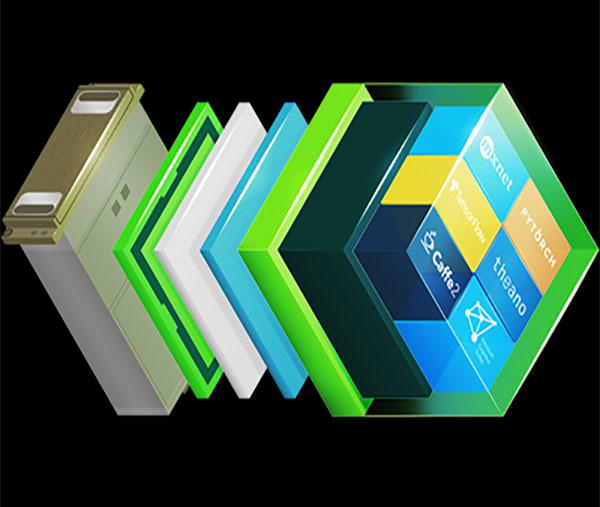

Torchnet sits atop the popular Torch deep learning framework and benefits from GPU acceleration using CUDA and cuDNN. Further, Torchnet has built-in support for asynchronous, parallel data loading and can make full use of multiple GPUs for vastly improved iteration times. This automatic support for multi-GPU training helps Torchnet take full advantage of powerful systems like the NVIDIA DGX-1 with its eight Tesla P100 GPUs.
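To give a feel for what asynchronous data loading means in practice, here is a minimal sketch in plain Python. It is not Torchnet code (Torchnet is written in Lua on top of Torch), and the `prefetch` function and its `buffer_size` parameter are hypothetical names chosen for illustration. The idea is simply that a background thread prepares upcoming batches while the training loop consumes the current one.

```python
import queue
import threading


def prefetch(batches, buffer_size=2):
    """Yield items from `batches`, loading them on a background thread.

    Hypothetical helper for illustration only; not part of Torchnet.
    """
    buffer = queue.Queue(maxsize=buffer_size)
    done = object()  # unique marker that signals the end of the data

    def worker():
        try:
            for batch in batches:
                buffer.put(batch)  # blocks while the buffer is full
        finally:
            buffer.put(done)  # always signal completion to the consumer

    threading.Thread(target=worker, daemon=True).start()

    while True:
        item = buffer.get()
        if item is done:
            return
        yield item


# Usage: the loop body could run a training step while the worker thread
# is already fetching the next batch.
loaded = [batch for batch in prefetch(iter(range(5)))]
```

A bounded queue keeps memory use predictable: the loader can only run a few batches ahead of the consumer.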

According to the Torchnet research paper, its modular design makes it easy to re-use code in a series of experiments. For instance, running the same experiments on a number of different datasets is accomplished simply by plugging in different dataloaders. And the evaluation criterion can be changed easily by plugging in a different performance meter.
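The plug-in idea can be sketched in a few lines of plain Python. This is an illustration of the design pattern only, not Torchnet's actual API: the `evaluate` function, the two meter classes, and the toy datasets are all hypothetical. The evaluation loop stays fixed while the dataloader and the performance meter are passed in as interchangeable parts.

```python
class AccuracyMeter:
    """Hypothetical meter: fraction of predictions that match the target."""

    def __init__(self):
        self.correct = 0
        self.count = 0

    def add(self, prediction, target):
        self.correct += int(prediction == target)
        self.count += 1

    def value(self):
        return self.correct / self.count


class MeanAbsoluteErrorMeter:
    """Hypothetical meter: mean absolute difference from the target."""

    def __init__(self):
        self.total = 0.0
        self.count = 0

    def add(self, prediction, target):
        self.total += abs(prediction - target)
        self.count += 1

    def value(self):
        return self.total / self.count


def evaluate(model, loader, meter):
    """Generic loop: works with any iterable loader and any meter."""
    for inputs, target in loader:
        meter.add(model(inputs), target)
    return meter.value()


def model(x):
    """Toy stand-in for a trained network: thresholds its input at 0.5."""
    return 1 if x >= 0.5 else 0


# Two toy "datasets" of (input, target) pairs, assumed for illustration.
dataset_a = [(0.1, 0), (0.9, 1), (0.6, 0), (0.4, 0)]
dataset_b = [(0.2, 0), (0.8, 1)]

# Same loop, different dataset or different meter.
acc_a = evaluate(model, dataset_a, AccuracyMeter())           # 0.75
acc_b = evaluate(model, dataset_b, AccuracyMeter())           # 1.0
mae_a = evaluate(model, dataset_a, MeanAbsoluteErrorMeter())  # 0.25
```

Because `evaluate` only relies on the loader being iterable and the meter exposing `add` and `value`, swapping a dataset or a metric requires no change to the loop itself.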

Torchnet adds another powerful tool to data scientists’ toolkit and will help speed the design and training of neural networks, so researchers can focus on their next great advancement.

Facebook and CUDA Accelerate Deep Learning Research

Jun 30, 2016
