On Tuesday, March 19th at 2:40pm, NVIDIA will host “Introduction to DirectX Raytracing,” a three-hour tutorial session. Chris Wyman, Adam Mars, and Juha Sjoholm will provide GDC attendees with an overview of how to integrate ray tracing into existing raster applications.

We asked Chris about his history with ray tracing, and what he hopes attendees will learn from the session.

What excites you about real-time ray tracing?

What doesn’t excite me about real-time ray tracing? I’ve spent most of my career thinking up approximate raster algorithms for lighting effects that could easily be accomplished with ray tracing. There have been so many times in my research where I’ve thought, “wouldn’t this be easier if I had a fast point-to-point visibility query?”

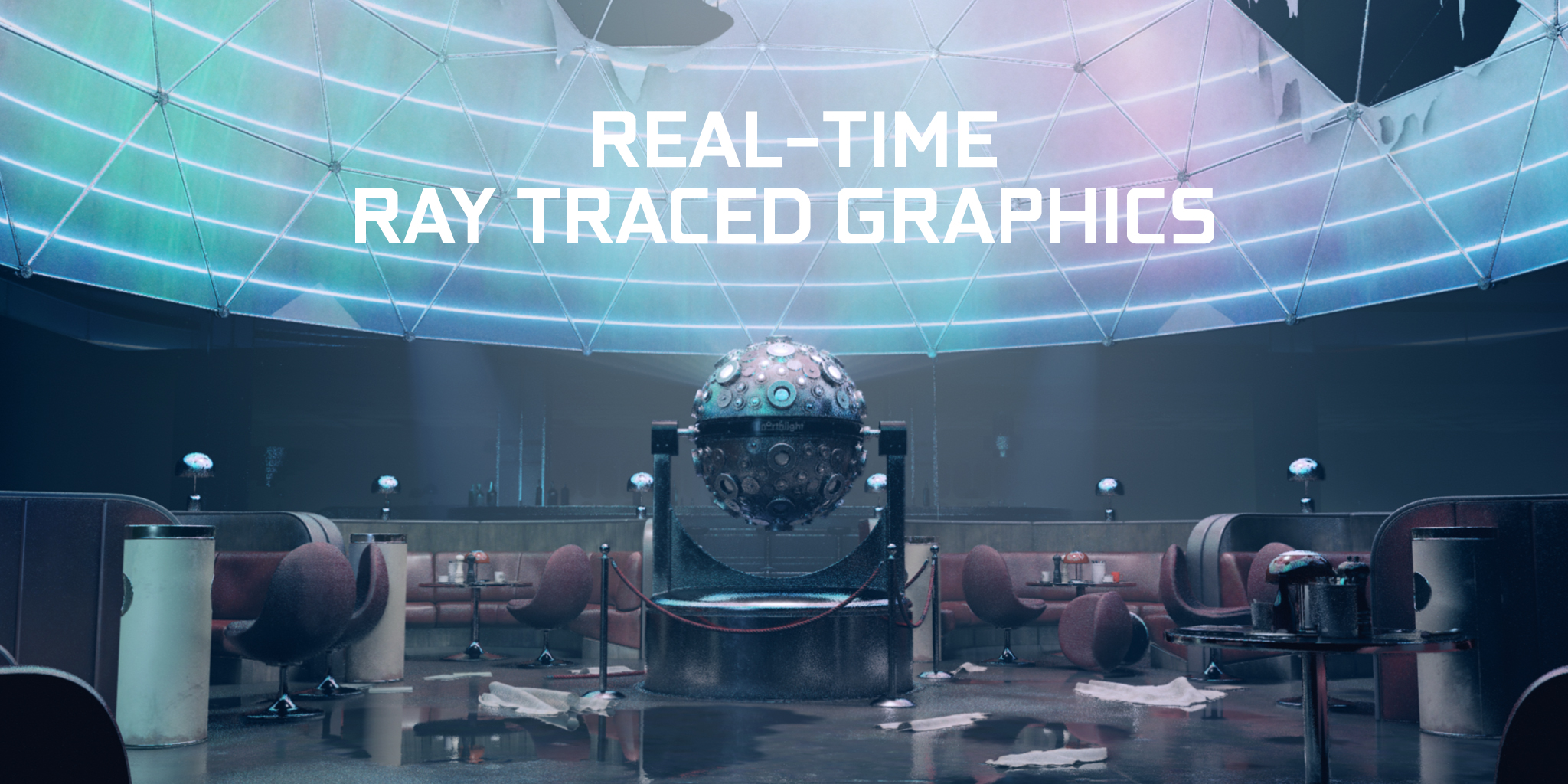

Recursive ray tracing, as popularly envisioned and frequently learned by students in introductory graphics courses, clearly provides quality improvements with accurate reflections, refractions, shadows, and the like. Fundamentally, though, the difference between rasterization and ray tracing is whether you can perform individual point-to-point visibility queries or need to batch up coherent queries from a common origin to pass to your rasterizer. Batching queries and launching a rasterization pass has significant overhead; you need batches of tens or hundreds of thousands of queries before rasterization makes much sense. With real-time ray tracing you can make incoherent queries, from different origins in different directions, and still get good performance. This opens up a ton of new possible rendering algorithms as we go back to rethink the rendering pipeline.
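As a concrete illustration of what a "point-to-point visibility query" means, here is a minimal CPU sketch in Python. It assumes a toy scene made only of spheres and uses a brute-force loop over occluders; the names `segment_hits_sphere` and `visible` are hypothetical and are not part of DirectX Raytracing or any NVIDIA library. A real ray tracer would answer the same question with an acceleration structure rather than a linear scan.

```python
import math

def segment_hits_sphere(p, q, center, radius):
    """Return True if the line segment from p to q touches the sphere."""
    d = [q[i] - p[i] for i in range(3)]       # segment direction (not normalized)
    m = [p[i] - center[i] for i in range(3)]  # sphere center to segment start
    a = sum(x * x for x in d)
    c = sum(x * x for x in m) - radius * radius
    if a == 0.0:
        # Degenerate segment: p and q coincide, so just test the point.
        return c <= 0.0
    b = sum(m[i] * d[i] for i in range(3))    # half of the usual quadratic 'b'
    disc = b * b - a * c
    if disc < 0.0:
        return False                          # the infinite line misses the sphere
    s = math.sqrt(disc)
    t0 = (-b - s) / a
    t1 = (-b + s) / a
    # The line is inside the sphere for t in [t0, t1]; the segment is t in [0, 1].
    return t0 <= 1.0 and t1 >= 0.0

def visible(p, q, spheres):
    """Point-to-point visibility: True if no sphere blocks the segment p -> q."""
    return not any(segment_hits_sphere(p, q, c, r) for c, r in spheres)

# Toy scene: one unit sphere at the origin (illustrative data only).
spheres = [((0.0, 0.0, 0.0), 1.0)]
print(visible((-3.0, 0.0, 0.0), (3.0, 0.0, 0.0), spheres))  # segment passes through the sphere
print(visible((-3.0, 2.0, 0.0), (3.0, 2.0, 0.0), spheres))  # segment passes above the sphere
```

Each call takes an arbitrary pair of endpoints, so consecutive queries need not share an origin or direction. That is the "incoherent query" pattern described above, which a rasterizer can only serve efficiently when many queries share a common origin.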

Why do you feel the time has come for developers to add ray tracing to their pipeline?

This one is simple: it’s now easy to integrate and experiment.

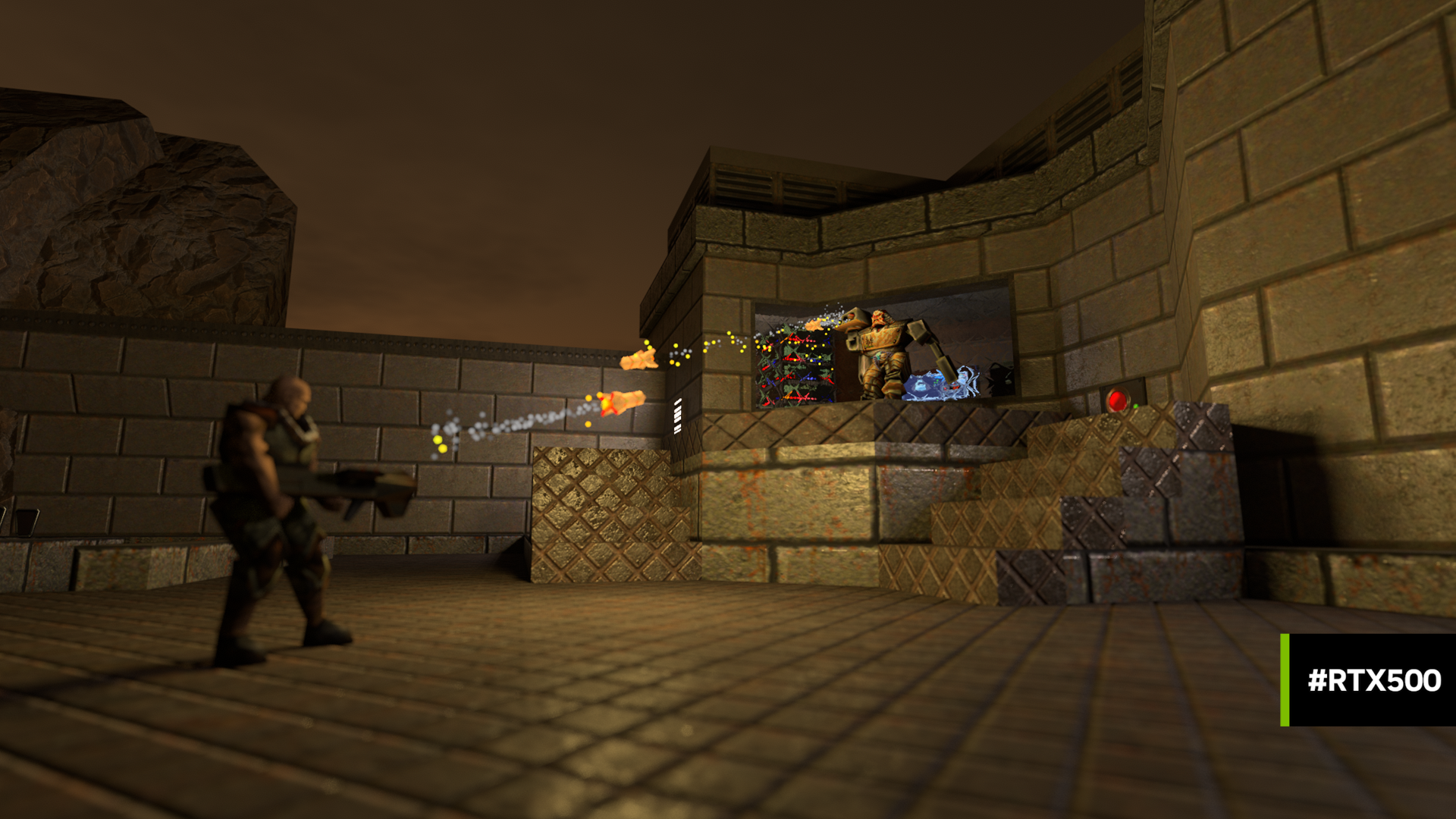

Let’s face it, depending on your definition, “real-time” ray tracing on GPUs has been around for over 15 years. Purcell et al.’s “Ray tracing on programmable graphics hardware” (among many other papers) showed real-time ray tracing in the early 2000s. And numerous companies, developers, and graduate students have been doing GPU ray tracing ever since. Examples range from NVIDIA’s OptiX to my Twitter timeline, which contains people implementing Pete Shirley’s “Ray Tracing in One Weekend” books in combinations of every conceivable language and hardware.

But achieving high performance with a custom GPU ray tracer takes significant effort across development, integration, and deployment. For a lot of R&D efforts, the return on investment wasn’t clear unless you already knew ray tracing was important. But knowing the benefits to your renderer is impossible without access to a high-performance ray tracer. The classic chicken-and-egg problem.

Today, we have DirectX with ray tracing support and hardware to accelerate it. This allows anyone to experiment with integrating a high-performance ray tracer into their engine for just the price of a GPU. If you want to ask, “can I use ray tracing for this,” you can now experiment directly in Unreal. If you’re a student or researcher looking to try out ray tracing, our research team’s development environment, Falcor, seamlessly integrates DX raster and ray tracing support and is where I do all my experimental prototyping.

I think with seamless integration, we’ll start seeing numerous new and interesting ways ray tracing improves rendering quality and performance. As food for thought: foveation might allow focusing computation based on user gaze direction and denoising could avoid redundant computations that simple filters can reconstruct.

What are you hoping developers take away from the GDC training session on DXR?

This tutorial has a couple of purposes.

I’ll start with a quick refresher of basic ray tracing concepts, in case you haven’t thought seriously about ray tracing since you left school. Then I’ll talk about the changes DirectX Raytracing makes to the on-chip graphics pipeline: the new shader stages for launching and processing rays, the new intrinsics added to HLSL, and the mental model for how rays get processed within DirectX. We’ve got some simple but concrete examples to make sure this process is clear. This part of the tutorial should bring you up to speed on ray tracing and, given an existing software package with DXR integrated, enable you to write shaders that trace rays.
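One way to picture the mental model of "launching and processing rays" is the CPU analogy below, written in Python. It is a sketch under stated assumptions, not DXR code: the function names (`ray_generation`, `trace_ray`, `closest_hit`, `miss`) only loosely mirror the roles of DXR's ray generation, closest-hit, and miss shader stages, the scene is a hypothetical list of spheres, and the brute-force loop stands in for hardware traversal of an acceleration structure.

```python
import math

def intersect_sphere(origin, direction, center, radius):
    """Smallest positive hit distance along a normalized ray, or None."""
    m = [origin[i] - center[i] for i in range(3)]
    b = sum(m[i] * direction[i] for i in range(3))
    c = sum(x * x for x in m) - radius * radius
    disc = b * b - c
    if disc < 0.0:
        return None
    s = math.sqrt(disc)
    for t in (-b - s, -b + s):
        if t > 1e-6:
            return t
    return None

def trace_ray(origin, direction, scene, closest_hit, miss):
    """Find the nearest hit, then hand off to the hit or miss callback."""
    best_t, best_obj = math.inf, None
    for obj in scene:  # stand-in for acceleration-structure traversal
        t = intersect_sphere(origin, direction, obj["center"], obj["radius"])
        if t is not None and t < best_t:
            best_t, best_obj = t, obj
    if best_obj is None:
        return miss(direction)
    return closest_hit(best_obj, best_t)

def closest_hit(obj, t):
    """Runs once for the nearest intersection; here it just returns a flat color."""
    return obj["color"]

def miss(direction):
    """Runs when the ray hits nothing; here it returns a black background."""
    return (0.0, 0.0, 0.0)

def ray_generation(width, height, scene):
    """Launch one ray per pixel from a pinhole camera at the origin looking down -z."""
    image = []
    for y in range(height):
        row = []
        for x in range(width):
            u = (x + 0.5) / width * 2.0 - 1.0
            v = 1.0 - (y + 0.5) / height * 2.0
            n = math.sqrt(u * u + v * v + 1.0)
            direction = (u / n, v / n, -1.0 / n)
            row.append(trace_ray((0.0, 0.0, 0.0), direction, scene, closest_hit, miss))
        image.append(row)
    return image

# Hypothetical scene: a single red sphere in front of the camera.
scene = [{"center": (0.0, 0.0, -3.0), "radius": 1.0, "color": (1.0, 0.0, 0.0)}]
image = ray_generation(3, 3, scene)
```

The split into callbacks is the point of the sketch: the code that launches rays does not know what happens on a hit or a miss, and that per-ray logic lives in separate, swappable functions.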

Adam is going to cover low-level DirectX host code, in case you need to understand what is necessary to integrate ray tracing into an existing DirectX engine. There’s a bunch of new concepts to understand, including building ray acceleration structures, ray tracing pipeline state objects, shader tables, and changes to DX root signatures.

Juha will talk about lessons learned while working with Remedy Entertainment to integrate ray tracing into Control. This includes a variety of best practices and pitfalls to avoid when integrating into existing engines with today’s hardware. While DirectX abstracts many aspects of ray tracing, as with any abstraction it also allows developers to fall off the fast path. If you’re (thinking of) doing ray tracing in your engine, hopefully you can learn how to avoid known performance pitfalls.

Which industries outside of video games stand to benefit from real-time ray tracing? How?

People use GPUs in countless industries, including film rendering, architectural design, physical simulations of all kinds, art, and various kinds of scientific and information visualization. In some of these industries, ray tracing is already widely used. Here, new hardware acceleration may give a performance boost or reduce maintenance costs on home-grown low-level GPU ray tracers. In others, fast ray tracing may provide new options for quality and efficiency improvements.

All GDC attendees are invited to attend this session.

Title: Introduction to DirectX Raytracing

Date: Tuesday, March 19, 2019

Time: 2:40pm – 5:40pm

GDC Location: Room 205, South Hall