Developing software that can drive a car without a human requires tools that can handle a wide range of challenges.

On May 5, NVIDIA will host a webinar demonstrating how developers can take advantage of the NVIDIA DriveWorks SDK to perform inference for safer, more efficient self-driving.

The flexible and modular SDK allows developers to bypass many of the bottlenecks that come with making the individual components of self-driving software work cohesively.

Specifically, DriveWorks simplifies the process of performing inference in a self-driving car. Inference is the process of running deep neural networks (DNNs) in real time to extract insights from enormous amounts of data.

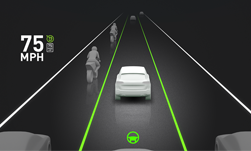

Autonomous vehicles run DNNs to perceive their environment from the massive amounts of data their sensors generate. These DNNs must be able to analyze key data in real time to perform redundant and diverse functions, such as identifying intersections and classifying drivable paths.

What is DriveWorks?

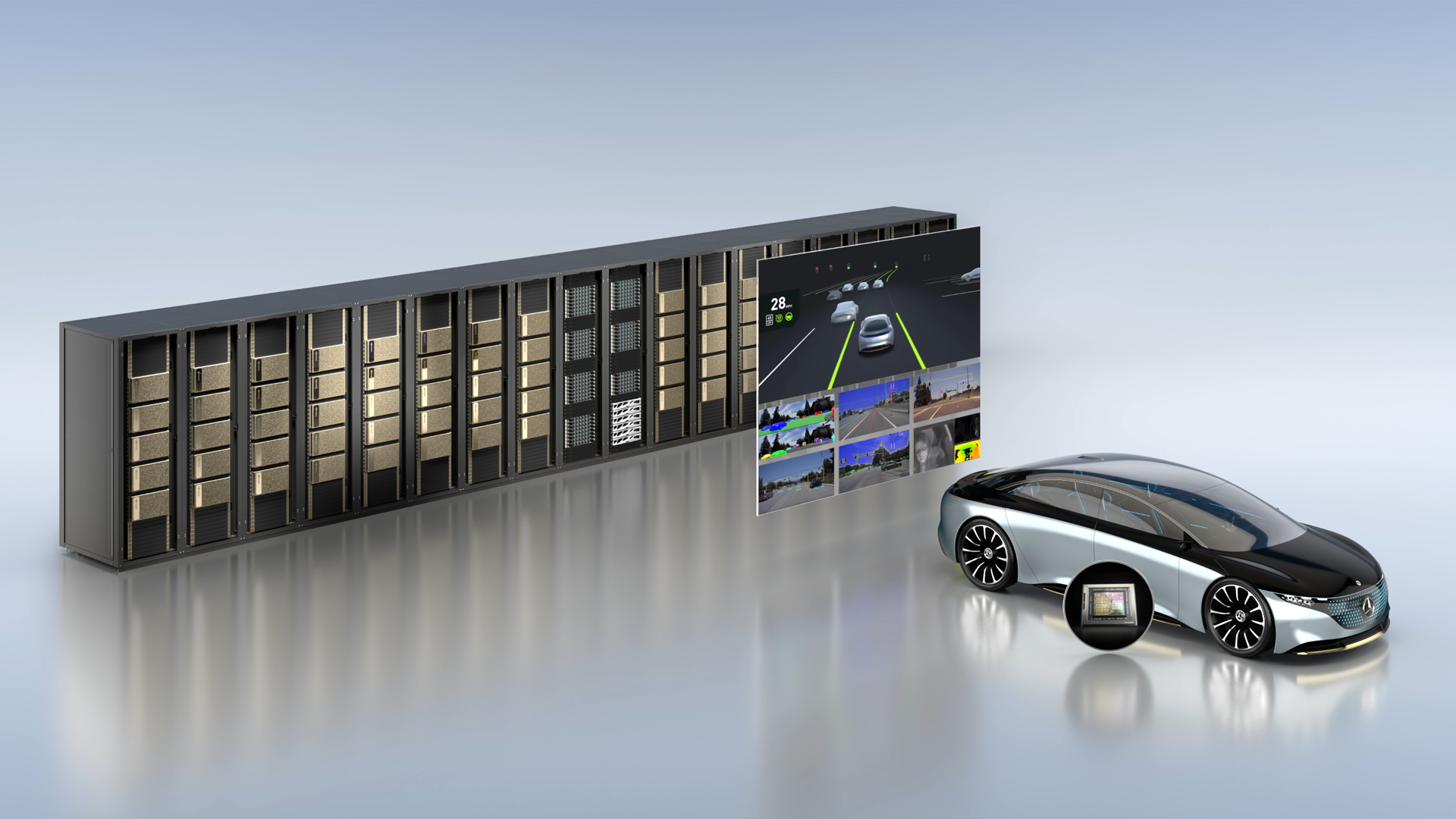

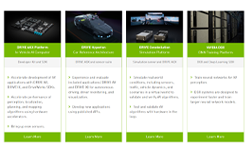

DriveWorks is middleware for autonomous vehicle software development. It provides a set of highly optimized modules that a developer can integrate into their application, as well as a broad set of tools covering everything from data recording, to sensor calibration, to DNN optimization.

The SDK is open and modular, so developers can pick and choose which components they want to use.

When running in the vehicle, DriveWorks abstracts away the details of the NVIDIA Xavier system-on-a-chip, while exposing its power, enabling developers to jumpstart development. It also provides a wide array of modules already optimized for the Xavier SoC.

The SDK’s sensor abstraction layer also makes it easy to integrate a new sensor into the software stack and minimizes rework in case developers need to switch sensor modules.
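To make the idea of a sensor abstraction layer concrete, here is a minimal sketch in Python. It is not the DriveWorks API: `Frame`, `Sensor`, `VendorACamera`, `VendorBCamera` and `mean_intensity` are hypothetical names invented for illustration, and the frame data is made up. It only shows the general pattern, in which application code depends on a common interface and each sensor hides its own quirks behind it.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass
from typing import List


@dataclass
class Frame:
    """Common frame format shared by every sensor (hypothetical)."""
    timestamp_us: int
    data: List[int]


class Sensor(ABC):
    """Hypothetical interface that the rest of the stack programs against."""

    @abstractmethod
    def read_frame(self) -> Frame:
        """Return the next frame in the common format."""


class VendorACamera(Sensor):
    """Hypothetical camera whose driver already reports microseconds."""

    def __init__(self) -> None:
        self._elapsed_us = 0

    def read_frame(self) -> Frame:
        self._elapsed_us += 33_333  # pretend 30 fps
        return Frame(timestamp_us=self._elapsed_us, data=[10, 20, 30])


class VendorBCamera(Sensor):
    """Hypothetical camera whose driver reports time in milliseconds."""

    def __init__(self) -> None:
        self._elapsed_ms = 0

    def read_frame(self) -> Frame:
        self._elapsed_ms += 40  # pretend 25 fps
        # Convert to the common unit so callers never see vendor quirks.
        return Frame(timestamp_us=self._elapsed_ms * 1000, data=[30, 40, 50])


def mean_intensity(sensor: Sensor) -> float:
    """Application code: works with any Sensor, unaware of the vendor."""
    frame = sensor.read_frame()
    return sum(frame.data) / len(frame.data)
```

Swapping `VendorACamera()` for `VendorBCamera()` in a call to `mean_intensity` requires no change to the function itself, which is the kind of rework an abstraction layer is meant to avoid.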

Improving Inference

The modularity and flexibility of DriveWorks make it the optimal platform for developers to run autonomous vehicle inference.

It provides the infrastructure to optimize a pre-trained DNN with NVIDIA TensorRT, prepare input data, perform inference and post-process results. This infrastructure is generalized: the same lightweight, repeatable workflow performs inference regardless of the use case.
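The prepare, infer and post-process stages described above can be sketched in a few lines of plain Python. This is an illustration of the workflow only, not DriveWorks or TensorRT code: every function name here is hypothetical, and `toy_network` is a hand-written stand-in with fixed weights where a real application would run an optimized DNN.

```python
import math
from typing import Callable, List, Sequence, Tuple

Vector = List[float]


def prepare_input(pixels: Sequence[int], mean: float = 127.5,
                  scale: float = 1 / 127.5) -> Vector:
    """Pre-process: map raw 8-bit values to roughly [-1, 1]."""
    return [(p - mean) * scale for p in pixels]


def toy_network(x: Vector) -> Vector:
    """Stand-in for an optimized DNN (assumption): two fixed linear scores."""
    weights = [[1.0, 1.0, 1.0, 1.0], [-1.0, -1.0, -1.0, -1.0]]
    return [sum(w * v for w, v in zip(row, x)) for row in weights]


def postprocess(logits: Vector, labels: Sequence[str]) -> Tuple[str, float]:
    """Post-process: softmax over the scores, then pick the top label."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    probs = [e / total for e in exps]
    best = max(range(len(probs)), key=probs.__getitem__)
    return labels[best], probs[best]


def run_inference(pixels: Sequence[int],
                  network: Callable[[Vector], Vector],
                  labels: Sequence[str]) -> Tuple[str, float]:
    """Chain the three stages; the network is passed in, so it is swappable."""
    return postprocess(network(prepare_input(pixels)), labels)


label, confidence = run_inference([255, 255, 255, 255], toy_network,
                                  ["bright", "dark"])
# label is "bright" for this all-white input
```

Because `run_inference` takes the network as an argument, the same three-stage flow applies to any model with matching inputs and outputs, which mirrors the "regardless of the use case" point above.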

The greatest advantage, however, is that DriveWorks saves development time when compared to using the base DRIVE OS infrastructure to perform inference. By making it easy to run on the NVIDIA Xavier SoC, the SDK enables developers to cut out time-consuming low-level programming and focus on the more complex algorithms.

In our upcoming webinar, you can learn more about the benefits of DriveWorks as well as how to implement the SDK to perform inference.