By: Berta Rodriguez Hervas

Editor’s note: This is the latest post in our NVIDIA DRIVE Labs series, which takes an engineering-focused look at individual autonomous vehicle challenges and how NVIDIA DRIVE addresses them. Catch up on all of our automotive posts.

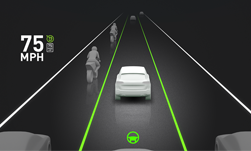

One of the first lessons in driving is simple: Green means go, red means stop. Self-driving cars must learn the same principles, and they must accurately identify and respond to traffic signs and lights in any environment in which they operate.

To achieve this goal, the NVIDIA DRIVE AV software relies on a combination of deep neural networks (DNNs) to detect and classify traffic signs and lights. Specifically, we leverage our WaitNet DNN for intersection detection, traffic light detection and traffic sign detection. Our LightNet DNN then classifies traffic light shape (for example, solid or arrow) and state (that is, color: red, yellow, or green), while the SignNet DNN identifies traffic sign type.

Collectively, these three DNNs form the core of our wait conditions perception software, designed to detect traffic conditions in which an autonomous car needs to slow to a stop and wait before proceeding further.

In our wait conditions perception architecture, traffic light and sign detection are separated from traffic light state/shape classification and traffic sign type classification. This separation lets us optimize precision and recall for traffic light and sign detection independently of classification performance. It also enables us to leverage WaitNet’s context understanding in the traffic light and sign detection process.
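As a rough illustration of this detect-then-classify split, the sketch below routes each detection to a separate classifier. Every name in it (`Detection`, `WaitCondition`, `perceive`, `min_score`, and the stub callables) is hypothetical and is not part of the NVIDIA DRIVE API. The stubs stand in for WaitNet, LightNet, and SignNet inference.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

Box = Tuple[int, int, int, int]  # (x, y, width, height) in pixels


@dataclass
class Detection:
    kind: str     # "traffic_light" or "traffic_sign" (hypothetical labels)
    box: Box
    score: float  # detector confidence in [0, 1]


@dataclass
class WaitCondition:
    kind: str
    box: Box
    label: str    # classifier output, e.g. "red/solid" or "stop"


def perceive(
    frame: object,
    detect: Callable[[object], List[Detection]],
    classify_light: Callable[[object, Box], str],
    classify_sign: Callable[[object, Box], str],
    min_score: float = 0.5,
) -> List[WaitCondition]:
    """Run detection first, then route each kept box to the matching classifier."""
    results = []
    for det in detect(frame):
        # The detection threshold is tuned on its own, without touching
        # either classifier.
        if det.score < min_score:
            continue
        if det.kind == "traffic_light":
            label = classify_light(frame, det.box)
        elif det.kind == "traffic_sign":
            label = classify_sign(frame, det.box)
        else:
            continue  # other detection kinds are not classified in this sketch
        results.append(WaitCondition(det.kind, det.box, label))
    return results


# Usage with stand-in callables; real DNN inference would replace these stubs.
def fake_detect(frame: object) -> List[Detection]:
    return [
        Detection("traffic_light", (100, 20, 12, 30), 0.92),
        Detection("traffic_sign", (300, 80, 40, 40), 0.31),  # below threshold
        Detection("traffic_sign", (500, 90, 36, 36), 0.88),
    ]


results = perceive(
    frame=None,
    detect=fake_detect,
    classify_light=lambda frame, box: "red/solid",
    classify_sign=lambda frame, box: "stop",
)
for r in results:
    print(r.kind, r.label)
```

Because the detector and the classifiers only meet at the `Detection` boundary, changing `min_score` shifts the detection precision/recall trade-off without retraining or re-evaluating either classifier.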

To perform traffic sign type classification, SignNet is designed as a convolutional DNN that classifies traffic sign type in a hierarchical manner for a large variety of traffic signs around the world. In a flat convolutional DNN model trained for classification, all the different potential output classes would have to be defined up front and a single classification output would have to be chosen for every frame. Given the complexity of traffic sign types found around the world, scaling such a model to cover all possible classes with strong precision/recall performance would be prohibitively difficult.

To manage this complexity and optimize performance, we leverage a hierarchical convolutional DNN model, in which exact output classes are not defined up front. Instead, SignNet is trained to independently detect key features, which are then combined into output classes based on analysis of the network's outputs. By designing output classes through iterative analysis, it becomes possible to scale to a large number of classes while maintaining strong classification performance.
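One way to picture the idea of combining independently detected features into output classes is the minimal sketch below. It is an assumption-laden illustration, not SignNet's actual design: the feature names (`shape`, `color`, `content`), the class table, and the `min_conf` threshold are all hypothetical.

```python
from typing import Dict, Optional, Tuple

# Hypothetical per-feature scores: one independent distribution per feature.
FeatureScores = Dict[str, Dict[str, float]]

# Hypothetical mapping from feature combinations to output classes.
# New classes are added by extending this table, not by changing the features.
CLASS_TABLE: Dict[Tuple[str, ...], str] = {
    ("octagon", "red", "text_stop"): "stop",
    ("triangle_down", "red_white", "none"): "yield",
    ("circle", "red_white", "number_50"): "speed_limit_50",
    ("circle", "red_white", "number_80"): "speed_limit_80",
}

FEATURE_ORDER = ("shape", "color", "content")


def argmax_with_score(scores: Dict[str, float]) -> Tuple[str, float]:
    """Return the highest-scoring label and its score."""
    label = max(scores, key=lambda k: scores[k])
    return label, scores[label]


def combine_features(features: FeatureScores, min_conf: float = 0.6) -> Optional[str]:
    """Map independently predicted features to an output class.

    Returns None when any feature is uncertain or the combination is unknown.
    """
    key = []
    for name in FEATURE_ORDER:
        label, score = argmax_with_score(features[name])
        if score < min_conf:
            return None
        key.append(label)
    return CLASS_TABLE.get(tuple(key))


example = {
    "shape": {"octagon": 0.95, "circle": 0.05},
    "color": {"red": 0.90, "red_white": 0.10},
    "content": {"text_stop": 0.85, "none": 0.15},
}
print(combine_features(example))
```

In this sketch, supporting a new sign type means adding a row to `CLASS_TABLE`, which is the sense in which output classes can be designed iteratively rather than fixed before training.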

Our current SignNet DNN model covers 300 U.S. traffic sign types and 200 European traffic sign types. These include highway signs, such as speed limits and highway zone delimiters (Figure 2), as well as signs needed for semi-urban to urban autonomous driving (for example, yield, right of way, and roundabout signs).

To perform traffic light shape and state classification, LightNet is designed as a multi-class, convolutional DNN. LightNet, SignNet, and WaitNet DNNs are available through NVIDIA DRIVE software releases and all feature open APIs. Further coverage extensions to additional traffic light and sign classes and additional geographies are planned for future releases.

To learn more, visit the NVIDIA DRIVE Networks page.