By: Jordan Marr, Yu Sheng, Amir Akbarzadeh

Editor’s note: This is the latest post in our NVIDIA DRIVE Labs series, which takes an engineering-focused look at individual autonomous vehicle challenges and how NVIDIA DRIVE addresses them. Catch up on all of our automotive posts here.

Localization is a critical capability for autonomous vehicles, making it possible to pinpoint their location within centimeters inside a map.

This high level of accuracy enables a self-driving car to understand its surroundings and establish a sense of the road and lane structures. With localization, a vehicle can detect when a lane is forking or merging, plan lane changes and determine lane paths even when markings aren’t clear.

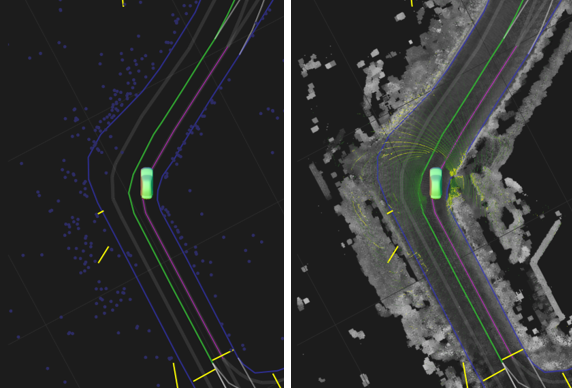

DRIVE Localization makes that precise positioning possible by matching semantic landmarks in the vehicle’s environment with features from HD maps to determine exactly where it is in real time. In this DRIVE Labs episode, we show how our localization algorithm achieves high accuracy and robustness using mass-market sensors.

Navigating with Pinpoint Accuracy

Localization provides the 3D pose of a self-driving car inside a high-definition (HD) map, including 3D position, 3D orientation and their uncertainties. Navigation with a conventional map and GPS only requires accuracy on a scale of a few meters. Localizing a self-driving car demands much higher accuracy relative to the map, usually on the order of centimeters and a few tenths of a degree.
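
To make that output concrete, here is a minimal Python sketch of what such a pose estimate could look like as a data structure. The class name, field names and the 0.10 m and 0.3 degree thresholds are illustrative assumptions, not part of the DRIVE Localization API.

```python
from dataclasses import dataclass
from typing import Tuple

Vec3 = Tuple[float, float, float]


@dataclass(frozen=True)
class LocalizationEstimate:
    """Hypothetical container for a 3D pose in the map and its uncertainty."""
    position_m: Vec3             # x, y, z in the map frame, in meters
    orientation_deg: Vec3        # roll, pitch, yaw, in degrees
    position_sigma_m: Vec3       # 1-sigma uncertainty per position axis
    orientation_sigma_deg: Vec3  # 1-sigma uncertainty per rotation axis


def meets_accuracy_target(est: LocalizationEstimate,
                          max_pos_sigma_m: float = 0.10,
                          max_rot_sigma_deg: float = 0.3) -> bool:
    """Check the worst-axis uncertainty against assumed centimeter-level targets."""
    return (max(est.position_sigma_m) <= max_pos_sigma_m
            and max(est.orientation_sigma_deg) <= max_rot_sigma_deg)
```

An estimate with meter-scale uncertainty, typical of navigation-grade GPS, would fail this check, while one with uncertainty of a few centimeters and a few tenths of a degree would pass.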

The value of localizing to an HD map might not be immediately obvious, given the rapid progress in live perception. However, when localized to an HD map, the car can make decisions based on information beyond its current field of view.

For example, the map can signal to the car that the current lane will end some way down the road. The car can then leave the lane at any point where it has space, merging into the adjacent lane. This avoids a potentially dangerous last-second merge.

Localizing to an HD map also allows a self-driving car to contextualize the behavior of other nearby vehicles within the map. At an intersection, for example, it can recognize that an oncoming car is in a left-turn lane and will not proceed straight, making it safe to perform an unprotected left turn.

A Mass-Market Solution

Self-driving cars often use high-cost inertial and GNSS sensors, plus lidar, to achieve accurate localization. When these expensive, precise sensors are used, localizing the car within the map is relatively straightforward, thanks to the accuracy of the priors and the rich information provided by lidar data. By contrast, a number of innovations are required to enable localization with mass-market sensors such as cameras and low-cost inertial, GNSS and CAN sensors.

To accommodate the sparser and often noisier data from mass-market sensors, DRIVE Localization considers many potential feature correspondences. Candidate poses are scored by overlaying an HD map of the environment with detections from the camera data. The module evaluates thousands of candidate poses in parallel, scoring how well features in the map match one frame of visual data.
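
The idea of scoring many candidate poses against the map can be sketched in a few lines of Python. This is a simplified 2D illustration under our own assumptions, not the DRIVE Localization implementation: landmarks and detections are plain points, the score is a Gaussian-shaped reward on nearest-landmark distance, and the candidates are evaluated serially rather than in parallel on a GPU. All function names and parameters are hypothetical.

```python
import math


def transform_to_map(pose, point):
    """Map a point from the vehicle frame into the map frame for pose (x, y, yaw)."""
    x, y, yaw = pose
    px, py = point
    c, s = math.cos(yaw), math.sin(yaw)
    return (x + c * px - s * py, y + s * px + c * py)


def score_pose(pose, detections, landmarks, sigma=0.2):
    """Sum, over detections, a reward that decays with distance to the nearest landmark."""
    total = 0.0
    for det in detections:
        mx, my = transform_to_map(pose, det)
        d2 = min((mx - lx) ** 2 + (my - ly) ** 2 for lx, ly in landmarks)
        total += math.exp(-d2 / (2.0 * sigma ** 2))
    return total


def best_pose(prior, detections, landmarks,
              half_width=1.0, step=0.25, yaw_half_width=0.1, yaw_step=0.05):
    """Score a grid of candidate poses around a coarse prior and keep the best one."""
    n = int(round(half_width / step))
    m = int(round(yaw_half_width / yaw_step))
    candidates = [
        (prior[0] + i * step, prior[1] + j * step, prior[2] + k * yaw_step)
        for i in range(-n, n + 1)
        for j in range(-n, n + 1)
        for k in range(-m, m + 1)
    ]
    return max(candidates, key=lambda p: score_pose(p, detections, landmarks))
```

Each candidate is scored independently of the others, which is what makes this kind of search a natural fit for parallel hardware.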

The optimal pose for an individual camera frame can be ambiguous if the feature detection is noisy. The module therefore also relies on temporal cross-checking of poses. A filter then runs on top of these sampled and cross-checked poses.
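
One way to picture temporal cross-checking followed by filtering is the Python sketch below. It assumes a 2D position, an odometry displacement between frames, a fixed distance gate and a simple scalar Kalman-style filter. These are illustrative choices of ours; the post does not specify which cross-check or filter DRIVE Localization uses, and all names and noise values are hypothetical.

```python
import math


def is_consistent(prev_pose, odom_delta, candidate, max_jump_m=0.5):
    """Cross-check: does the candidate agree with the previous pose plus odometry?"""
    px = prev_pose[0] + odom_delta[0]
    py = prev_pose[1] + odom_delta[1]
    return math.hypot(candidate[0] - px, candidate[1] - py) <= max_jump_m


class SimpleFilter:
    """Scalar Kalman-style filter over (x, y) with one shared variance (illustrative)."""

    def __init__(self, pose, variance=1.0, process_var=0.05, meas_var=0.1):
        self.pose = pose
        self.var = variance
        self.q = process_var  # assumed odometry noise added per step
        self.r = meas_var     # assumed per-frame pose measurement noise

    def predict(self, odom_delta):
        self.pose = (self.pose[0] + odom_delta[0], self.pose[1] + odom_delta[1])
        self.var += self.q

    def update(self, measured):
        gain = self.var / (self.var + self.r)
        self.pose = tuple(p + gain * (m - p) for p, m in zip(self.pose, measured))
        self.var *= (1.0 - gain)


def track(initial_pose, frames, max_jump_m=0.5):
    """frames: list of (odom_delta, per_frame_best_pose). Returns the filtered poses."""
    f = SimpleFilter(initial_pose)
    filtered = []
    for odom_delta, candidate in frames:
        prev = f.pose
        f.predict(odom_delta)
        # Only per-frame poses that pass the temporal cross-check update the filter.
        if is_consistent(prev, odom_delta, candidate, max_jump_m):
            f.update(candidate)
        filtered.append(f.pose)
    return filtered
```

A per-frame pose that jumps several meters away from what odometry predicts is skipped, so the filter coasts on the motion prediction for that frame instead of following the outlier.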

The computational needs of this algorithm are very demanding. However, the design is tailored to the NVIDIA Xavier system-on-a-chip and GPU parallel processing, which the NVIDIA DRIVE AGX platform delivers with high efficiency. The robustness of our algorithm enables it to work in challenging conditions, such as at night, on rainy and foggy days, and in GNSS-denied areas like tunnels, even with a single camera.

Redundancy and Diversity

DRIVE Localization can be used independently with one or more cameras, radars or lidars. Each sensor modality by itself provides an accurate output that can be used directly. These individual localization outputs can then be fused to provide a single, even more reliable output.

The ability to work with a diversity of sensors provides extra safety by checking the consistency among the different localization outputs. For example, if the calibration of one sensor drifts, or a sensor is heavily affected by harsh weather conditions such as rain or snow, the localization result from that sensor will be noticeably different from the others.

DRIVE Localization can detect this kind of abnormality and generate a fusion result from consistent sensor outputs only. By leveraging this type of redundancy, autonomous vehicles can achieve higher levels of safety.
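
A consistency check followed by fusion could look something like the Python sketch below. It is an illustration under our own assumptions, not the DRIVE Localization fusion algorithm: each sensor reports a 2D position and a variance, outputs far from the component-wise median are treated as inconsistent, and the remaining outputs are combined with inverse-variance weights. The function name and the 0.5 m threshold are hypothetical.

```python
import math
from statistics import median


def fuse_consistent(outputs, max_deviation_m=0.5):
    """Fuse per-sensor positions, ignoring those that disagree with the consensus.

    outputs: dict mapping sensor name -> ((x, y), variance).
    Returns ((fused_x, fused_y), sorted list of sensor names that were used).
    """
    mx = median(pos[0] for pos, _ in outputs.values())
    my = median(pos[1] for pos, _ in outputs.values())

    # Keep only outputs close to the median position (the consistency check).
    used = {
        name: (pos, var)
        for name, (pos, var) in outputs.items()
        if math.hypot(pos[0] - mx, pos[1] - my) <= max_deviation_m
    }
    if not used:
        raise ValueError("no mutually consistent localization outputs")

    # Inverse-variance weighting: more certain outputs count for more.
    weights = {name: 1.0 / var for name, (_, var) in used.items()}
    total = sum(weights.values())
    fx = sum(weights[n] * used[n][0][0] for n in used) / total
    fy = sum(weights[n] * used[n][0][1] for n in used) / total
    return (fx, fy), sorted(used)
```

Note that an inconsistent output is dropped even if it reports a small variance, since a miscalibrated sensor can be confidently wrong.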

We’ve also worked with mapping companies around the world to localize to HD maps on global roads, enabling safe autonomous driving in a wide variety of locations.

DRIVE Localization is available to developers in the NVIDIA DRIVE Software 10.0 release. In this release, we have opened up our camera-based localization API to help enable this capability on mass-market consumer vehicles. Localization with radar and lidar sensors as well as localization fusion will be available in future releases.