By: Xiaolin Lin, Yilin Yang

Editor’s note: This is the latest post in our NVIDIA DRIVE Labs series, which takes an engineering-focused look at individual autonomous vehicle challenges and how NVIDIA DRIVE addresses them. Catch up on all of our automotive posts, here.

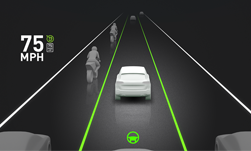

Lane and road edge detection is critical for self-driving car development. Lane detection powers systems such as lane-departure warning, which help keep human drivers from veering out of their lane. In addition to detecting lane lines, autonomous vehicles also need to detect other road markings, such as arrows or STOP text, as well as vertical landmarks that help accurately localize the car to a high-definition map.

In this week’s DRIVE Labs, we present the evolution of LaneNet DNN, which delivers high-precision, stable detection of painted lane lines on the road, into our high-precision MapNet DNN. This evolution includes an increase in detection classes to also cover road markings and vertical landmarks (e.g. poles) in addition to lane line detection. It also includes an increase in processing efficiency via end-to-end detection that provides faster in-car inference.

The MapNet DNN model available in the NVIDIA DRIVE Software 10.0 release can detect painted lane line markings (solid and dashed lines, intersection entry and exit lines, road edges), painted road markings (for example, arrows, STOP text, and high-occupancy vehicle lane markings), and vertical poles (for example, road sign and light poles).

To perform high-precision detection of road markings and vertical landmarks, MapNet DNN leverages the underlying ground truth data-encoding technology of its predecessor, high-precision LaneNet. This encoding, which prevents high-resolution visual information from being lost during convolutional DNN processing, is agnostic to both direction and orientation. In addition to creating sufficient redundancy to preserve rich lane line information, it can readily be extended to preserve information about arbitrarily shaped on-road markings, as well as landmarks such as poles (which can be thought of as “vertical lane lines”).

We have also observed that high-precision MapNet is capable of delivering accurate shape detection of road markings even in the presence of partially missing paint marks, as shown in Figure 1 below. In cases with co-located solid and dashed lane line markings for the same lane, MapNet intentionally treats the lane line as solid in support of safe driving.
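The "treat it as solid" behavior for co-located markings can be pictured as a most-restrictive-wins rule. The sketch below is only an illustration of that idea: the names `LaneLineType` and `resolve_colocated` are hypothetical, and it does not describe how MapNet makes this decision internally.

```python
from enum import IntEnum


class LaneLineType(IntEnum):
    """Hypothetical lane line classes, ordered from least to most restrictive."""
    DASHED = 1
    SOLID = 2


def resolve_colocated(types):
    """Return the most restrictive type among co-located lane line markings.

    Assumption: a higher enum value means a more restrictive marking,
    so a solid line wins over a dashed one for the same lane boundary.
    """
    if not types:
        raise ValueError("at least one lane line type is required")
    return max(types)


# A lane boundary painted with both a dashed and a solid line
# is reported as solid, the safer interpretation for planning.
boundary = resolve_colocated([LaneLineType.DASHED, LaneLineType.SOLID])
```

Choosing the more restrictive class means a downstream planner would not treat such a boundary as freely crossable.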

MapNet also detects road edges, which is particularly useful when clear painted lane markings do not exist, and it consistently detects transitions from solid to dashed lane line markings.

We’ve observed stable detection of lane lines and road edges even in the presence of visual challenges, including road cracks, tar stains, and harsh shadows cast by trees or vertical landmarks. MapNet also detects road text markings in different languages.

The latest MapNet DNN model, currently in development, is trained for end-to-end detection of road markings and landmarks, which greatly reduces the complexity of post-processing raw DNN results into continuous geometric outputs. This in turn enables faster in-car inference, which is crucial because it provides low-latency perception inputs to longitudinal and lateral planning and control functions.

Moreover, the high-precision road marking and landmark detection results provided by MapNet can be used as inputs to the mapping and localization functions of autonomous vehicles. The ability to detect vertical landmarks, such as poles, is particularly beneficial for achieving accurate longitudinal localization.
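One way to see why poles help along the direction of travel: lane lines run roughly parallel to the road, so they constrain the car's lateral position well but say little about how far along the road it is, whereas a pole is a point-like feature at a known position. The following is a minimal one-dimensional sketch of that idea, not the DRIVE localization algorithm. It assumes each detected pole has already been matched to a pole in the map, and the function name and input format are hypothetical.

```python
from statistics import fmean


def estimate_longitudinal_position(matches):
    """Estimate the vehicle's position along the road, in metres.

    matches: list of (map_s, distance_ahead) pairs, where map_s is the
    pole's position along the road taken from the map and distance_ahead
    is the measured longitudinal distance from the vehicle to that pole.
    Assumption: detection-to-map association is already solved.
    """
    if not matches:
        raise ValueError("at least one matched pole is required")
    # Each matched pole gives an independent estimate: map_s - distance_ahead.
    estimates = [map_s - distance_ahead for map_s, distance_ahead in matches]
    return fmean(estimates)


# Two poles at 120 m and 150 m in the map, seen 20 m and 50.5 m ahead.
position = estimate_longitudinal_position([(120.0, 20.0), (150.0, 50.5)])
```

Averaging is the simplest possible fusion. A real system would typically weight each pole by its measurement uncertainty and fuse the result with odometry.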

For more information, visit our page on the NVIDIA Developer Zone.