Autonomous vehicles rely on cameras for a visual representation of the world around them. To safely drive without a human, these vehicles must be able to process image data from cameras quickly and accurately.

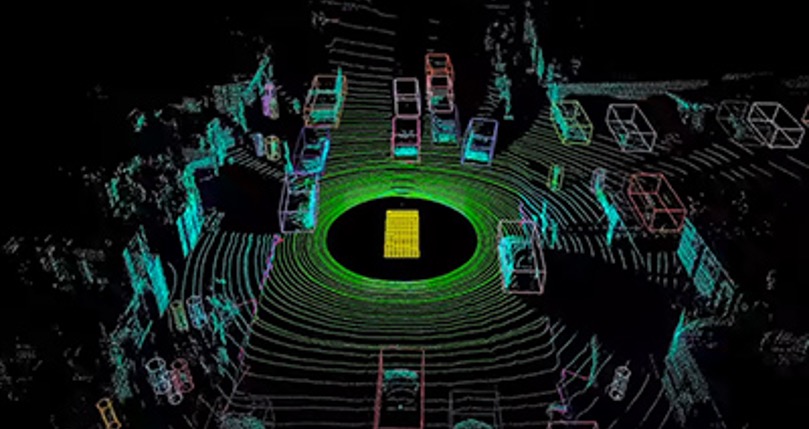

The NVIDIA DriveWorks SDK provides the middleware that developers need to build autonomous vehicle software efficiently, including reference applications, tools and a comprehensive library of modules optimized for the NVIDIA DRIVE AGX platform. DriveWorks contains a sensor abstraction layer (SAL) for bringing up sensors, a data recorder to capture sensor feeds in real time, calibration software and accelerated modules for radar and lidar point clouds.

In an upcoming webinar, we’ll detail how DriveWorks provides an efficient, modular library of functions for developing a comprehensive image processing pipeline. With robust image processing capabilities in place, developers have a strong foundation for higher-level autonomous vehicle software development.

Image processing pipelines take in raw camera data and, using a variety of algorithms, transform it into usable information for the perception algorithms. While there are many open source computer vision libraries, DriveWorks is the one that provides a streamlined path to autonomous vehicle production.

Faster Image Processing

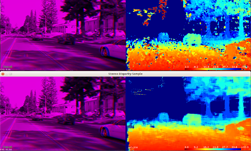

The DriveWorks SAL supports a variety of sensors and makes it possible to extend sensor support via plugins. The image processing modules work with the SAL to transform the raw camera data from a wide range of sensors, including cameras with fisheye, wide and narrow lenses.
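To make the plugin idea concrete, here is a minimal Python sketch of a sensor abstraction layer with a plugin registry. It is not the DriveWorks API: `SensorPlugin`, `register_sensor`, `create_sensor`, `FakeCamera` and the `camera.fake` protocol string are all hypothetical names chosen for illustration.

```python
from abc import ABC, abstractmethod
from typing import Callable, Dict, List


class SensorPlugin(ABC):
    """Hypothetical interface that every sensor driver implements."""

    @abstractmethod
    def start(self) -> None:
        """Prepare the sensor to deliver frames."""

    @abstractmethod
    def read_frame(self) -> List[List[int]]:
        """Return one frame as rows of raw pixel values."""


# Registry mapping a protocol string to a factory for that sensor type.
_REGISTRY: Dict[str, Callable[[], SensorPlugin]] = {}


def register_sensor(protocol: str, factory: Callable[[], SensorPlugin]) -> None:
    """Add a new sensor type without touching the code that consumes frames."""
    _REGISTRY[protocol] = factory


def create_sensor(protocol: str) -> SensorPlugin:
    """Instantiate the plugin registered under `protocol`."""
    if protocol not in _REGISTRY:
        raise KeyError(f"no plugin registered for {protocol!r}")
    return _REGISTRY[protocol]()


class FakeCamera(SensorPlugin):
    """Stand-in camera that produces a constant 2x3 frame."""

    def __init__(self) -> None:
        self.started = False

    def start(self) -> None:
        self.started = True

    def read_frame(self) -> List[List[int]]:
        if not self.started:
            raise RuntimeError("sensor not started")
        return [[10, 20, 30], [40, 50, 60]]


register_sensor("camera.fake", FakeCamera)
```

Downstream code only calls `create_sensor(...)`, `start()` and `read_frame()`, so supporting a new camera means registering one more factory rather than changing the pipeline.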

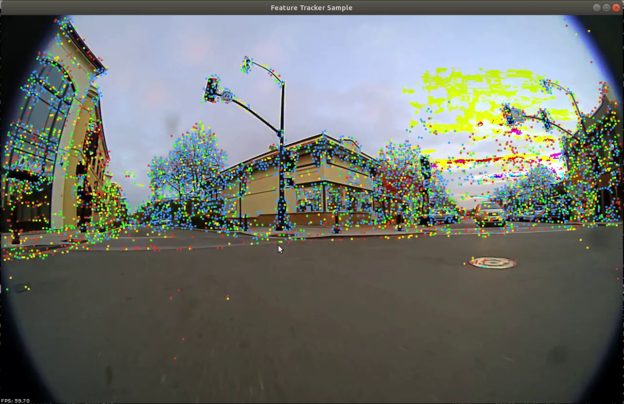

For faster image processing, DriveWorks provides a wide array of modules to build a pipeline. These modules can be used to build computer vision applications as well as pre- and post-processing steps for deep learning algorithms, performing rectification, stereo disparity, template tracking, feature tracking and more. Sample code that uses these features is provided in DriveWorks and runs right out of the box.
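As an illustration of one of these steps, the sketch below computes stereo disparity for a single rectified image row using sum-of-absolute-differences block matching, then converts a disparity to depth with the standard pinhole relation. This is a textbook method written in plain Python, not the DriveWorks implementation; the function names, window size and search range are assumptions made for the example.

```python
from typing import List


def scanline_disparity(left: List[int], right: List[int],
                       window: int = 1, max_disp: int = 4) -> List[int]:
    """Per-pixel disparity for one rectified row (sum of absolute differences).

    For each pixel x in the left row, find the shift d in 0..max_disp for which
    the window around right[x - d] best matches the window around left[x].
    Border pixels that cannot hold a full window keep disparity 0.
    """
    n = len(left)
    out = [0] * n
    for x in range(window, n - window):
        best_cost, best_d = None, 0
        for d in range(0, max_disp + 1):
            if x - d - window < 0:
                break  # shifted window would fall off the row
            cost = sum(abs(left[x + k] - right[x - d + k])
                       for k in range(-window, window + 1))
            if best_cost is None or cost < best_cost:
                best_cost, best_d = cost, d
        out[x] = best_d
    return out


def depth_from_disparity(disparity_px: float, focal_px: float,
                         baseline_m: float) -> float:
    """Pinhole stereo relation: depth = focal length * baseline / disparity."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px
```

A production module would run this search over full images on an accelerator; the row-at-a-time version only shows why rectification comes first, since it is what lets matching be restricted to a single row.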

Once the image data is processed, the diverse collection of deep neural networks that make up the vehicle’s perception layer can use the results to do their work in real time. The processed images can also enable key functions such as calibration, advanced perception, and mapping and localization. DriveWorks’ design makes it possible to stack these modules together to quickly create complex image processing pipelines.
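The idea of stacking modules can be sketched as plain function composition. The example below is a minimal Python illustration, not DriveWorks code: `stack`, `normalize`, `downsample2x` and `threshold` are hypothetical stages that each take an image and return an image, which is what allows them to be chained in any order.

```python
from functools import reduce
from typing import Callable, List

Image = List[List[float]]
Stage = Callable[[Image], Image]


def stack(*stages: Stage) -> Stage:
    """Compose stages left to right into a single callable pipeline."""
    def pipeline(image: Image) -> Image:
        return reduce(lambda img, stage: stage(img), stages, image)
    return pipeline


def normalize(image: Image) -> Image:
    """Scale 8-bit pixel values into the range [0, 1]."""
    return [[p / 255.0 for p in row] for row in image]


def downsample2x(image: Image) -> Image:
    """Keep every second pixel in both directions."""
    return [row[::2] for row in image[::2]]


def threshold(level: float) -> Stage:
    """Build a stage that maps each pixel to 1.0 or 0.0 around `level`."""
    def stage(image: Image) -> Image:
        return [[1.0 if p >= level else 0.0 for p in row] for row in image]
    return stage


# A three-stage pipeline built by stacking independent modules.
preprocess = stack(normalize, downsample2x, threshold(0.5))
```

Because every stage shares the same input and output type, swapping one stage for another or inserting a new one does not require changes to the rest of the pipeline.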

The DriveWorks image processing pipeline doesn’t just transform camera data. The SDK is designed with abstraction in mind and therefore streamlines the development process no matter what underlying hardware, image format or algorithms are used.

Hardware Acceleration

With DRIVE AGX optimization, DriveWorks can take advantage of high-performance compute while maintaining power efficiency.

At the core of DRIVE AGX is the Xavier system-on-a-chip, the world’s first processor for autonomous driving. Xavier contains numerous hardware accelerators, including a programmable vision accelerator and a GPU, specialized for autonomous driving.

These engines accelerate the image processing algorithms, which can be spread across the different engines to enable greater functionality while staying within the computational budget. By offering these capabilities, the DriveWorks SDK provides a seamless path to build a fully integrated production-level image processing pipeline.
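One way to picture spreading work across engines within a budget is a simple greedy placement. The Python sketch below is an assumption-laden illustration, not how DriveWorks schedules work: `assign_stages` is a hypothetical helper, and the stage names and per-frame costs in the usage note are invented rather than measured.

```python
from typing import Dict


def assign_stages(costs_ms: Dict[str, Dict[str, float]],
                  budget_ms: float) -> Dict[str, str]:
    """Greedily place each stage on the engine that would finish it earliest.

    costs_ms[stage][engine] is the assumed per-frame cost of running `stage`
    on `engine`; engines a stage cannot run on are simply omitted.
    Raises ValueError if a placement would push an engine past `budget_ms`.
    """
    load: Dict[str, float] = {}
    placement: Dict[str, str] = {}
    # Place the most expensive stages first so they get the emptiest engines.
    order = sorted(costs_ms, key=lambda s: min(costs_ms[s].values()), reverse=True)
    for stage in order:
        engine = min(costs_ms[stage],
                     key=lambda e: load.get(e, 0.0) + costs_ms[stage][e])
        new_load = load.get(engine, 0.0) + costs_ms[stage][engine]
        if new_load > budget_ms:
            raise ValueError(f"{stage} does not fit in the {budget_ms} ms budget")
        load[engine] = new_load
        placement[stage] = engine
    return placement
```

For example, with illustrative costs of `{"rectify": {"gpu": 4.0, "pva": 6.0}, "feature_tracking": {"gpu": 5.0, "pva": 7.0}, "dnn_preprocess": {"gpu": 3.0}}` and a 10 ms budget, rectification moves to the vision accelerator so that the GPU has room for the other two stages.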

To learn more about developing a camera pipeline on NVIDIA DriveWorks, register for the webinar and download the DriveWorks SDK here.