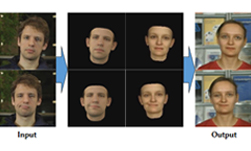

Researchers recently developed the first deep learning based system that can transfer the full 3D head position, facial expression and eye gaze from a source actor to a target actor.

“Synthesizing and editing video portraits, i.e., videos framed to show a person’s head and upper body, is an important problem in computer graphics, with applications in video editing and movie post-production, visual effects, visual dubbing, virtual reality, and telepresence, among others,” the researchers explained in their research paper.

Using NVIDIA TITAN Xp GPUs the team trained their generative neural network for ten hours on public domain clips.

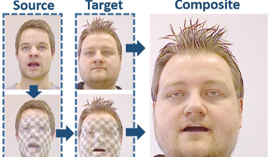

“Our approach enables a source actor to take full control of the rigid head pose, facial expressions and eye motion of the target actor; even face identity can be modified to some extent,” the team explained. “All of these dimensions can be manipulated together or independently. Full target frames, including the entire head and hair, but also a realistic upper body and scene background complying with the modified head, are automatically synthesized.”

The work builds on the Face2Face work previously featured at the GPU Technology Conference. The system could potentially be used in applications such as face reenactment, visual dubbing for foreign language movies and movie post-production.

When compared to other methods, their current approach performs distinguishably well, the researchers said. “We have shown through experiments and a user study that our method outperforms prior work in quality and expands over their possibilities. It thus opens up a new level of capabilities in many applications, like video reenactment for virtual reality and telepresence, interactive video editing, and visual dubbing.”

The work included researchers from the Max Planck Institute for Informatics, Technicolor, the Technical University of Munich, University of Bath, and Stanford University.

The research was published on ArXiv this week.

Read more >

Breakthrough AI Technique Could Revolutionize the Animation Industry

Jun 01, 2018

Discuss (0)

Related resources

- GTC session: How Generative AI Is Transforming the Entertainment Industry (Presented by CoreWeave)

- GTC session: Revolutionizing Media & Entertainment with Next-Gen AI Startups

- GTC session: Generative AI Theater: Generative AI Can Take You Anywhere

- SDK: NVIDIA Tokkio

- Webinar: Building Generative AI Applications for Enterprise Demands

- Webinar: What AI Teams Need to Know About Generative AI