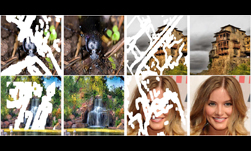

Researchers from Waseda University in Japan developed a deep learning-based method that removes unwanted objects from images and can complete images by filling-in missing regions.

Image completion is a challenging problem because it requires a high-level recognition of scenes.

“To train this image completion network to be consistent, we use global and local context discriminators that are trained to distinguish real images from completed ones,” mentioned in the researcher’s paper. “The global discriminator looks at the entire image to assess if it is coherent as a whole, while the local discriminator looks only at a small area centered at the completed region to ensure the local consistency of the generated patches. The image completion network is then trained to fool the both context discriminator networks, which requires it to generate images that are indistinguishable from real ones with regard to overall consistency as well as in details.”

Using Tesla K80 GPUs and the cuDNN-accelerated Torch7 deep learning framework, they trained their model on over 8 million scene images from the Places2 dataset. Once trained, their realistic image completion method is able to fill-in missing regions of a 1024 x 1024 image in under a second with a single TITAN X GPU – a nearly 15x speedup over their CPU experiments.

The method also works to complete images of faces – in a user study, 77% of the generated images by their approach were thought to be real.

Read more >