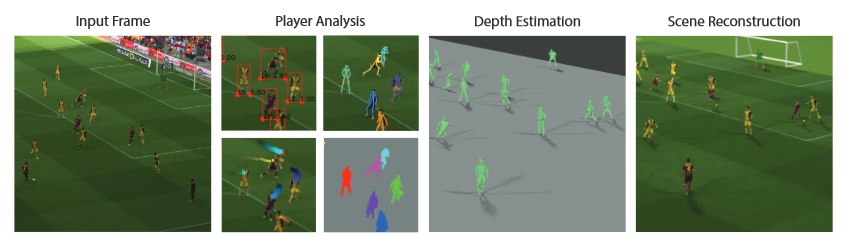

With the FIFA World Cup kicking off in just a few days, have you ever wondered what it would be like to have Cristiano Ronaldo, Lionel Messi, or Neymar play a match on your kitchen table? Researchers from the University of Washington, Facebook, and Google developed the first end-to-end deep learning-based system that can transform a standard YouTube video of a soccer game into a moving 3D hologram.

“There are numerous challenges in monocular reconstruction of a soccer game. We must estimate the camera pose relative to the field, detect and track each of the players, re-construct their body shapes and poses, and render the combined reconstruction,” the researchers wrote in their research paper.

The results are striking, and the games can be watched with a 3D viewer or through an AR device anywhere in the world.

Using NVIDIA GeForce GTX 1080 GPUs and NVIDIA TITAN Xp GPUs with the cuDNN-accelerated PyTorch deep learning framework, the team trained their convolutional neural network on hours of 3D player data extracted from FIFA soccer video games.

Based on this video game data, the neural network learns to reconstruct a depth map for each player on the field, which can then be rendered in a 3D viewer or on an AR device.
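To give a feel for how a per-player depth map becomes renderable 3D geometry, here is a minimal sketch that back-projects depth pixels into camera-space points using a pinhole camera model. This is a generic technique, not the researchers' code: the function name `backproject_depth` and the intrinsics `fx`, `fy`, `cx`, `cy` are assumptions made for illustration, and background pixels are assumed to be marked with `None`.

```python
from typing import List, Optional, Tuple

Point3D = Tuple[float, float, float]


def backproject_depth(
    depth: List[List[Optional[float]]],
    fx: float,
    fy: float,
    cx: float,
    cy: float,
) -> List[Point3D]:
    """Turn a depth map into camera-space 3D points (pinhole model).

    depth[v][u] holds the distance along the optical axis for pixel
    (u, v), or None where the pixel does not belong to the player.
    fx, fy are focal lengths in pixels; cx, cy is the principal point.
    These parameter names are illustrative, not taken from the paper.
    """
    points: List[Point3D] = []
    for v, row in enumerate(depth):
        for u, z in enumerate(row):
            if z is None or z <= 0:
                continue  # skip background or invalid depth
            # Invert the pinhole projection u = fx * x / z + cx.
            x = (u - cx) * z / fx
            y = (v - cy) * z / fy
            points.append((x, y, z))
    return points


if __name__ == "__main__":
    # Tiny 2x2 depth map with two valid player pixels.
    toy_depth = [[None, 2.0], [4.0, None]]
    print(backproject_depth(toy_depth, fx=2.0, fy=2.0, cx=0.5, cy=0.5))
```

The resulting point list could then be meshed or drawn as a point cloud in whichever 3D viewer is used; placing the points on the field would additionally require the camera pose, which this sketch leaves out.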

“It turns out that while playing Electronic Arts FIFA games and intercepting the calls between the game engine and the GPU, it is possible to extract depth maps from video game frames. In particular, we use RenderDoc to intercept the calls between the game engine and the GPU,” the team stated. “FIFA, similar to most games, uses deferred shading during gameplay. Having access to the GPU calls enables capture of the depth and color buffers per frame. Once depth and color are captured for a given frame, we process it to extract the players.”
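Depth buffers captured from a GPU usually store a non-linear value in the range 0 to 1 rather than a metric distance, so a conversion step is typically needed before the data can serve as training depth. The sketch below shows one common conversion, assuming a standard perspective projection with a Direct3D-style depth range. The function name `linearize_depth` and the `near` and `far` clip-plane values are assumptions for illustration; the article does not say which convention the game uses or how the team performed this step.

```python
def linearize_depth(d: float, near: float, far: float) -> float:
    """Convert a [0, 1] depth-buffer value to view-space distance.

    Assumes the buffer was written by a standard perspective
    projection where d = 0 maps to the near plane and d = 1 to the
    far plane (an assumption, not confirmed by the source).
    """
    if not 0.0 <= d <= 1.0:
        raise ValueError("depth-buffer value must lie in [0, 1]")
    if not 0.0 < near < far:
        raise ValueError("expected 0 < near < far")
    # Inverse of d = far / (far - near) * (1 - near / z).
    return (near * far) / (far - d * (far - near))


if __name__ == "__main__":
    # Hypothetical clip planes, chosen only for the demonstration.
    for sample in (0.0, 0.5, 0.99, 1.0):
        print(sample, linearize_depth(sample, near=0.1, far=100.0))
```

Running the demonstration shows how strongly the buffer is skewed toward the near plane: most of the 0 to 1 range covers distances close to the camera, which is why the raw values are not usable as depth directly.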

To validate the system, the team tested their method on ten high-resolution professional soccer games found on YouTube. Notably, the system was trained only on synthetic video game footage, yet it delivered championship-worthy results on real-world video.

The researchers concede their system isn’t perfect. One of their next projects will focus on training the system to detect the ball better, as well as developing a system that can be viewed from any angle.

The research is set to debut at the annual Computer Vision and Pattern Recognition (CVPR) conference, June 18–22 in Salt Lake City, Utah.

AI Transforms Recorded Soccer Games Into 3D Holograms

Jun 06, 2018
