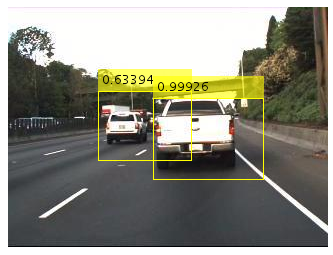

To help autonomous vehicles and robots spot objects that lie just outside their direct line of sight, researchers from Stanford, Princeton, Rice, and Southern Methodist universities developed a deep learning-based system that can detect objects, including words and symbols, around corners.

“Compared to other approaches, our non-line-of-sight imaging system provides uniquely high resolutions and imaging speeds,” said Stanford University’s Chris Metzler in the Rice University post, Cameras see around corners in real time with deep learning. “These attributes enable applications that wouldn’t otherwise be possible,” he added.

To achieve this, the system pairs a laser with a camera to capture detailed images of objects around corners in real time. Specifically, light from a high-speed laser is beamed onto a wall, light from the hidden area bounces back to the wall, and that light is then reflected to a camera. The system measures the time the light takes to reach the camera, known as time-of-flight. With that information, the system can reconstruct an image of the hidden area.
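As a rough illustration of the time-of-flight idea only, the sketch below converts between a light path length and the time light needs to cover it, using the relation distance = speed of light × time. The function names and the 4-meter path are hypothetical and not taken from the researchers' setup.

```python
# Speed of light in vacuum, in meters per second (exact SI value).
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def path_length_m(time_of_flight_s: float) -> float:
    """Total distance light travels in the given time (hypothetical helper)."""
    return SPEED_OF_LIGHT_M_PER_S * time_of_flight_s


def time_of_flight_s(path_length: float) -> float:
    """Time light needs to cover the given total path (hypothetical helper)."""
    return path_length / SPEED_OF_LIGHT_M_PER_S


# Assumed example: a total path of 4 m from laser to wall to hidden
# object, back to the wall, and on to the camera.
t = time_of_flight_s(4.0)
print(f"{t * 1e9:.2f} ns")  # prints "13.34 ns"
```

The nanosecond scale of the result shows why such measurements demand very precise timing.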

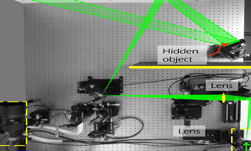

This photograph illustrates the laboratory setup for the “deep-inverse correlography” imaging system developed by the researchers. The innovative system captures high-resolution images of objects hidden around a corner using deep learning in combination with hardware that includes a commercially available CMOS camera sensor and a powerful, but otherwise standard, laser. (Image source: Prasanna Rangarajan/SMU).

On the backend, the team trained a convolutional neural network with a U-net architecture on a dataset of sparse images. The U-net-based model serves as a convolutional autoencoder with a large number of skip connections between the network’s layers.
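For readers unfamiliar with the architecture, here is a minimal PyTorch sketch of a U-net-style convolutional autoencoder with skip connections. It is not the researchers' network: the class name `TinyUNet`, the two-level depth, the channel widths, and the 64×64 input are all assumptions chosen to keep the example short.

```python
import torch
import torch.nn as nn


def conv_block(in_ch: int, out_ch: int) -> nn.Sequential:
    """Two 3x3 convolutions with ReLU; padding keeps the spatial size."""
    return nn.Sequential(
        nn.Conv2d(in_ch, out_ch, kernel_size=3, padding=1),
        nn.ReLU(inplace=True),
        nn.Conv2d(out_ch, out_ch, kernel_size=3, padding=1),
        nn.ReLU(inplace=True),
    )


class TinyUNet(nn.Module):
    """Hypothetical two-level U-net; sizes are illustrative assumptions."""

    def __init__(self, in_ch: int = 1, out_ch: int = 1, base: int = 16):
        super().__init__()
        self.enc1 = conv_block(in_ch, base)
        self.enc2 = conv_block(base, base * 2)
        self.pool = nn.MaxPool2d(2)
        self.bottleneck = conv_block(base * 2, base * 4)
        self.up2 = nn.ConvTranspose2d(base * 4, base * 2, kernel_size=2, stride=2)
        self.dec2 = conv_block(base * 4, base * 2)  # input: upsampled + skip
        self.up1 = nn.ConvTranspose2d(base * 2, base, kernel_size=2, stride=2)
        self.dec1 = conv_block(base * 2, base)  # input: upsampled + skip
        self.head = nn.Conv2d(base, out_ch, kernel_size=1)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        e1 = self.enc1(x)                   # full resolution
        e2 = self.enc2(self.pool(e1))       # 1/2 resolution
        b = self.bottleneck(self.pool(e2))  # 1/4 resolution
        # Skip connections: concatenate encoder features with the
        # upsampled decoder features along the channel dimension.
        d2 = self.dec2(torch.cat([self.up2(b), e2], dim=1))
        d1 = self.dec1(torch.cat([self.up1(d2), e1], dim=1))
        return self.head(d1)


model = TinyUNet()
x = torch.randn(1, 1, 64, 64)  # height and width must be divisible by 4
y = model(x)
print(y.shape)  # torch.Size([1, 1, 64, 64])
```

The skip connections let fine spatial detail from the encoder bypass the bottleneck, which is generally why U-net designs are popular for image-to-image reconstruction tasks.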

The network was trained and implemented using NVIDIA TITAN RTX GPUs, with the cuDNN-accelerated PyTorch deep learning framework.

“Compared to other approaches for non-line-of-sight imaging, our deep learning algorithm is far more robust to noise and thus can operate with much shorter exposure times,” said study co-author Prasanna Rangarajan of SMU. “By accurately characterizing the noise, we were able to synthesize data to train the algorithm to solve the reconstruction problem using deep learning without having to capture costly experimental training data.”
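The general idea of training on synthesized data can be sketched as generating pairs of a clean sparse image and a noise-corrupted counterpart. The example below is only a toy illustration: the additive Gaussian noise is a stand-in, not the noise model the researchers characterized, and in the real system the network input is a measurement rather than a noisy copy of the image. All function names and parameters are hypothetical.

```python
import random


def make_sparse_image(size, num_bright, rng):
    """Mostly-zero square image with a few bright pixels set to 1.0."""
    image = [[0.0] * size for _ in range(size)]
    for _ in range(num_bright):
        row, col = rng.randrange(size), rng.randrange(size)
        image[row][col] = 1.0
    return image


def add_noise(image, sigma, rng):
    """Add zero-mean Gaussian noise (an assumed placeholder noise model)."""
    return [[pixel + rng.gauss(0.0, sigma) for pixel in row] for row in image]


def make_training_pair(size=16, num_bright=8, sigma=0.1, seed=None):
    """Return (noisy_input, clean_target) for one synthetic example."""
    rng = random.Random(seed)
    clean = make_sparse_image(size, num_bright, rng)
    noisy = add_noise(clean, sigma, rng)
    return noisy, clean


noisy, clean = make_training_pair(seed=0)
print(len(noisy), len(noisy[0]))  # 16 16
```

Because pairs like these are generated in software, a training set can be made as large as needed without running any experiments.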

After training, the team evaluated their approach on experimental non-line-of-sight imaging data and successfully reconstructed the shape of small hidden objects from one meter away.

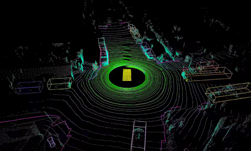

The work is a proof of concept, but the research team hopes to continue developing the system for use in a variety of applications, including autonomous vehicles.

A paper, Deep-inverse correlography: towards real-time high-resolution non-line-of-sight imaging, was recently published in the journal Optica. The code will be published to GitHub soon.