Sixty to seventy million people in the U.S. suffer from gastrointestinal diseases, and the best way to clinically diagnose the exact problem is to perform an abdominal ultrasound. However, the process is labor-intensive and sometimes inefficient. To help address the issue, researchers from Siemens and Vanderbilt University developed a deep learning-based system that can automatically interpret abdominal ultrasound images and detect organs and abnormalities.

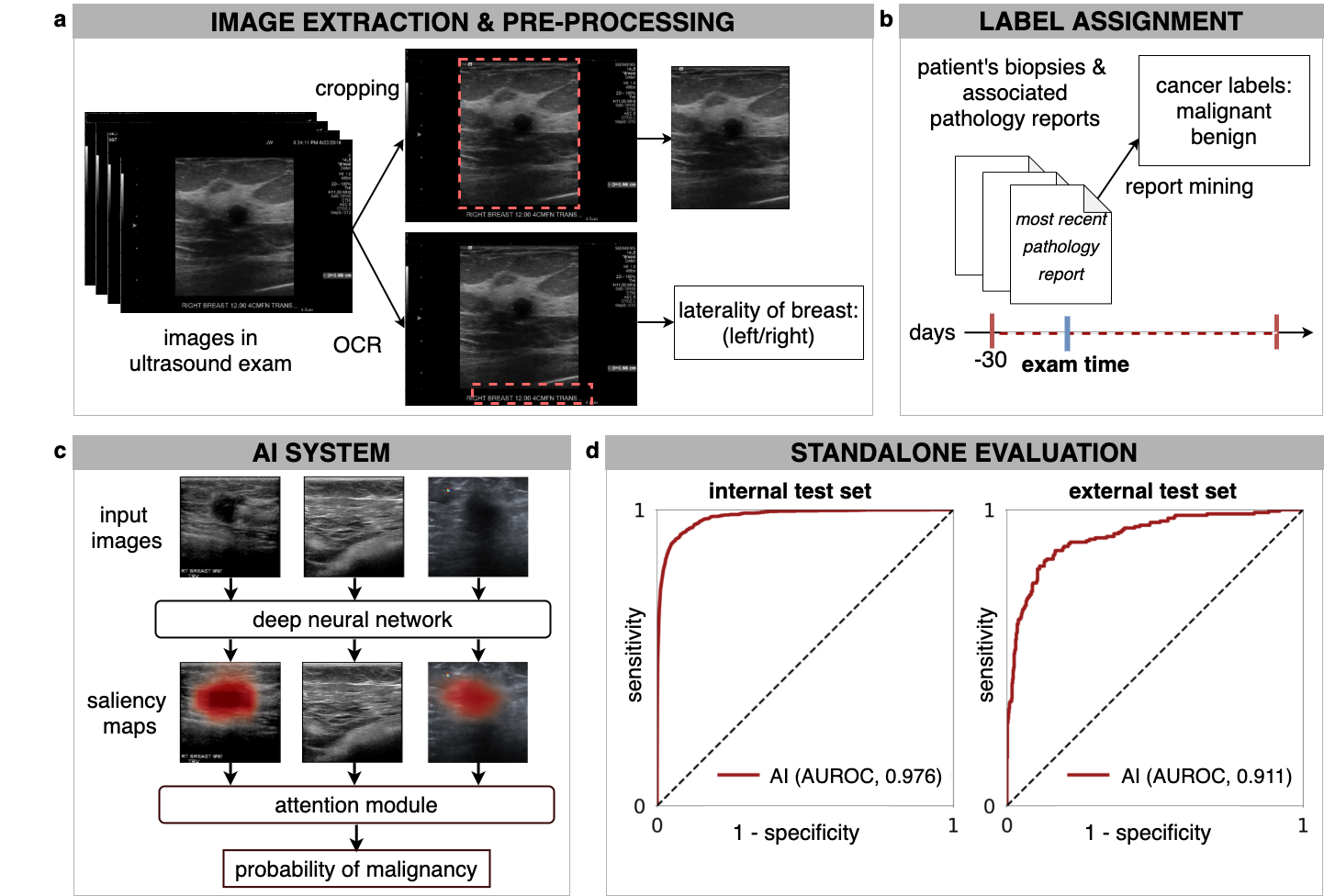

The researchers say this is the first deep learning approach to classify abdominal ultrasounds automatically within a single integrated system.

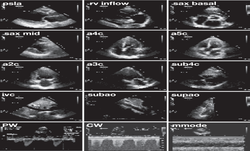

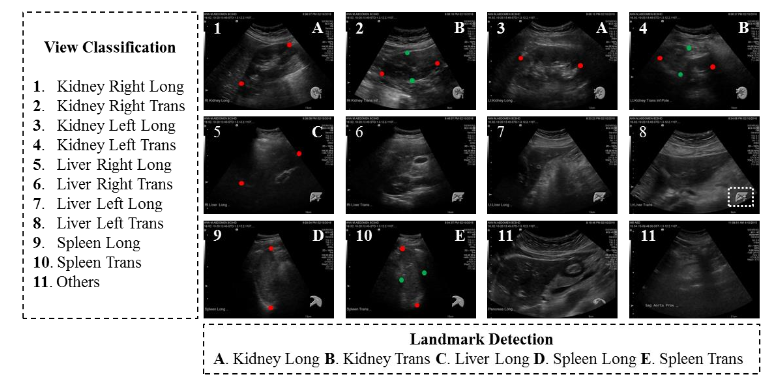

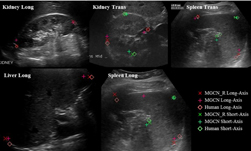

“Automatic view classification and landmark detection of the abdominal organs on ultrasound images can be instrumental to streamline the examination workflow,” the researchers wrote in their research paper. “We pursue a highly integrated multi-task learning framework to perform simultaneous view classification and landmark detection automatically to increase the efficiency of abdominal ultrasound examination workflow.”

Using NVIDIA TITAN X GPUs and the cuDNN-accelerated PyTorch deep learning framework, the team trained their system on over 187,000 images from 706 patients.

“While convolutional neural networks (CNN) have demonstrated more promising outcomes on ultrasound image analytics than traditional machine learning approaches, it becomes impractical to deploy multiple networks (one for each task) due to the limited computational and memory resources on most existing ultrasound scanners,” the team said. “To overcome such limits, we propose a multitask learning framework to handle all the tasks by a single network.”

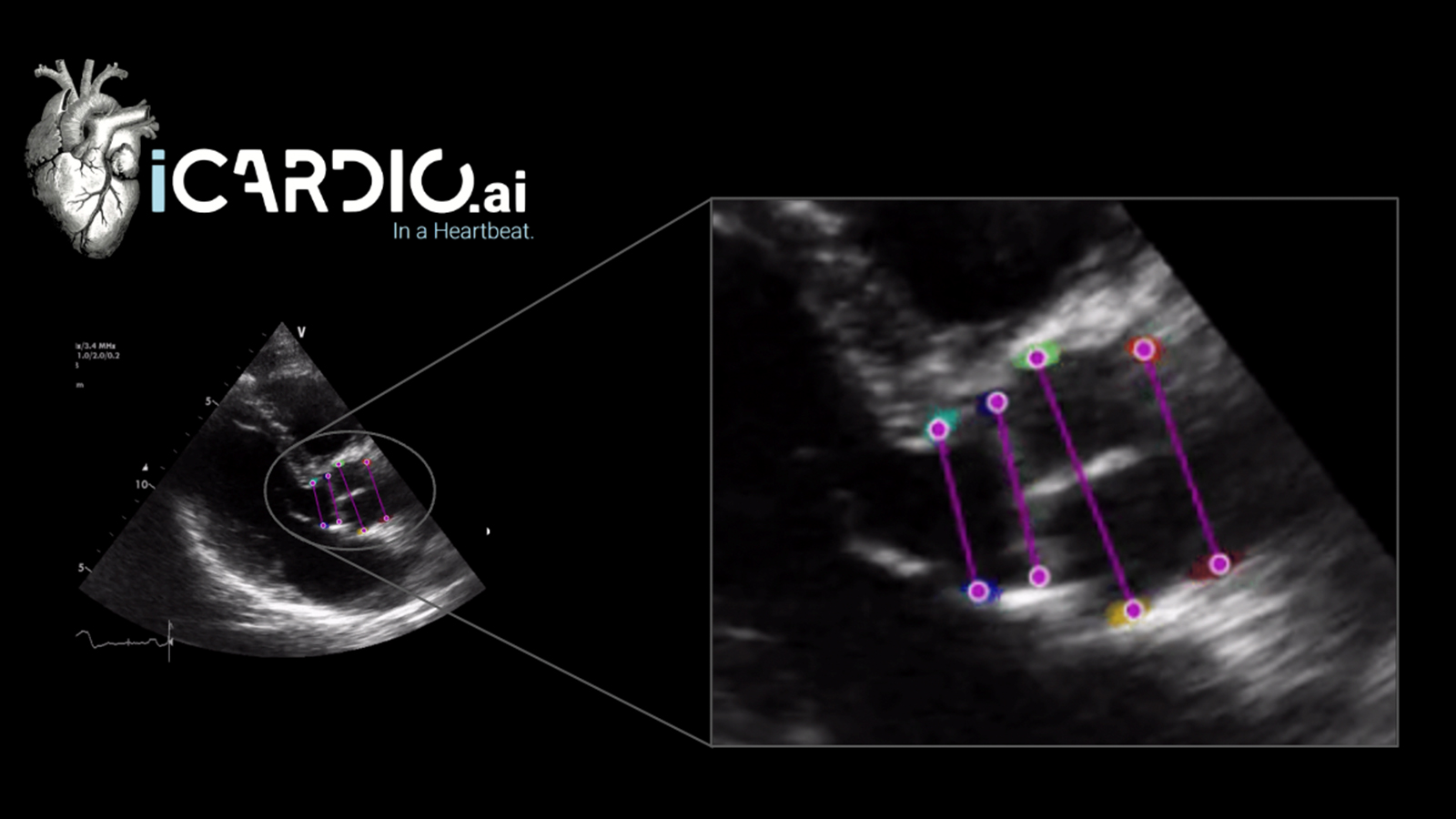

The neural network can perform view classification and landmark detection simultaneously.
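To make the single-network idea concrete, here is a minimal PyTorch sketch of a shared encoder feeding two task heads, one for view classification and one for landmark detection. It is an illustration under stated assumptions, not the researchers' architecture: the class name `MultiTaskUltrasoundNet`, the layer sizes, the number of views and landmarks, the heatmap formulation for landmarks, and the loss weighting are all hypothetical choices made for this example.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class MultiTaskUltrasoundNet(nn.Module):
    """Hypothetical shared encoder with a view head and a landmark head."""

    def __init__(self, num_views: int = 10, num_landmarks: int = 4):
        super().__init__()
        # Shared convolutional encoder: both tasks reuse these features,
        # so the expensive part of the network is stored and run only once.
        self.encoder = nn.Sequential(
            nn.Conv2d(1, 16, kernel_size=3, padding=1),
            nn.ReLU(inplace=True),
            nn.MaxPool2d(2),
            nn.Conv2d(16, 32, kernel_size=3, padding=1),
            nn.ReLU(inplace=True),
            nn.MaxPool2d(2),
        )
        # Head 1: one logit per view class, computed from pooled features.
        self.view_head = nn.Linear(32, num_views)
        # Head 2: one heatmap per landmark (an assumed formulation).
        self.landmark_head = nn.Conv2d(32, num_landmarks, kernel_size=1)

    def forward(self, x):
        features = self.encoder(x)
        # Global average pooling over height and width.
        pooled = features.mean(dim=(2, 3))
        view_logits = self.view_head(pooled)
        # Upsample the coarse heatmaps back to the input resolution.
        heatmaps = F.interpolate(
            self.landmark_head(features),
            size=x.shape[-2:],
            mode="bilinear",
            align_corners=False,
        )
        return view_logits, heatmaps


def multitask_loss(view_logits, heatmaps, view_labels, target_heatmaps,
                   landmark_weight=1.0):
    """Weighted sum of both task losses; the weight is an assumed knob."""
    cls_loss = F.cross_entropy(view_logits, view_labels)
    lm_loss = F.mse_loss(heatmaps, target_heatmaps)
    return cls_loss + landmark_weight * lm_loss


# Usage with random stand-in data (no real ultrasound images involved).
model = MultiTaskUltrasoundNet()
images = torch.randn(2, 1, 64, 64)        # batch of 2 grayscale images
view_logits, heatmaps = model(images)
print(view_logits.shape)                  # torch.Size([2, 10])
print(heatmaps.shape)                     # torch.Size([2, 4, 64, 64])
```

Because both heads read from the same encoder output, a single forward pass yields both predictions, which is the property that matters on scanners with limited compute and memory.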

According to the researchers, the method outperforms the approaches that address each task individually.

The paper was published on arXiv on Monday.


AI System Automatically Examines Abdominal Ultrasounds

May 30, 2018

