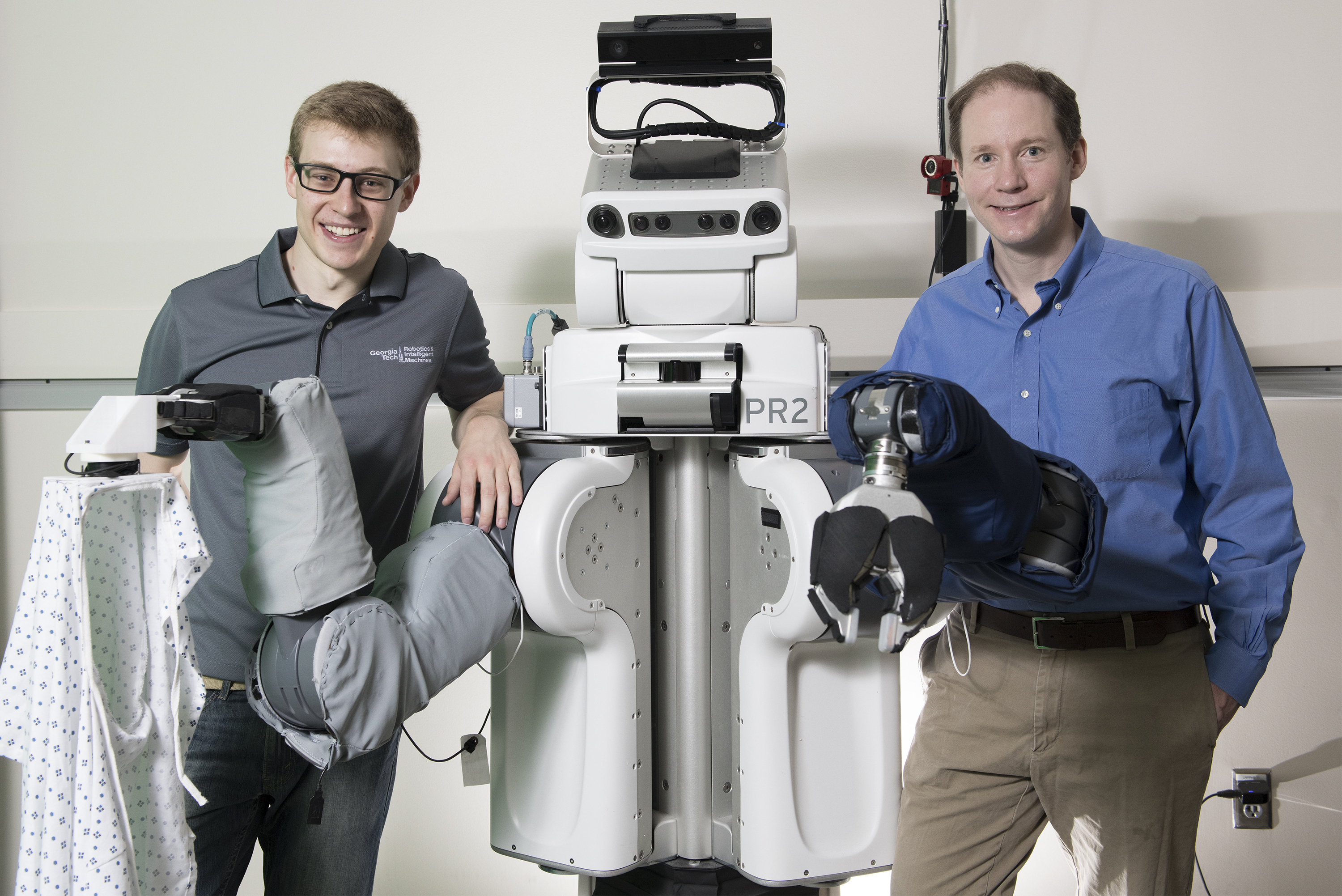

Every day, more than 1 million people in the United States require physical assistance to get dressed, whether because of injury, permanent disability, age, or other debilitating factors. To alleviate the problem, researchers from Georgia Tech built a deep learning-equipped robot that can help people get dressed.

“What the robot is trying to do is to take the person’s perspective of what a person is feeling during assistance,” said Zachary Erickson, a robotics Ph.D. student at Georgia Tech. “When the robot is doing this, it’s using what it feels on its fingertips or its gripper and saying, what do I think a person is feeling while being dressed?”

The robot, named PR2, was trained using NVIDIA Tesla V100 GPUs on the Amazon Web Services cloud with the cuDNN-accelerated Keras and TensorFlow deep learning frameworks. The system analyzed nearly 11,000 simulated examples of a robot putting a gown onto a human arm.

“From these examples, the robot learned to estimate the forces applied to the human. In a sense, the simulations allowed the robot to learn what it feels like to be the human receiving assistance.”

The researchers say the robot learned to predict the consequences of moving the gown in different ways. The robot uses this data to select motions that comfortably dress the arm.
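The idea of predicting the consequences of candidate motions and then picking a comfortable one can be illustrated with a small sketch. This is not the researchers' controller, which the article does not describe in detail. The function names (`select_motion`, `toy_predict_force`, `toy_progress`), the motion representation, and the cost formula are all hypothetical, and the toy force estimator merely stands in for a trained neural network.

```python
from typing import Callable, Sequence, Tuple

# Hypothetical motion representation: gripper displacement (dx, dy, dz) in meters,
# where +x points along the arm in the direction the gown should travel.
Motion = Tuple[float, float, float]


def select_motion(
    candidates: Sequence[Motion],
    predict_force: Callable[[Motion], float],
    progress: Callable[[Motion], float],
    force_weight: float = 1.0,
) -> Motion:
    """Pick the candidate with the lowest cost (weighted predicted force minus progress)."""

    def cost(motion: Motion) -> float:
        return force_weight * predict_force(motion) - progress(motion)

    return min(candidates, key=cost)


def toy_predict_force(motion: Motion) -> float:
    """Stand-in for a learned estimator: off-axis pulls are assumed to press on the arm."""
    _, dy, dz = motion
    return 10.0 * (abs(dy) + abs(dz))


def toy_progress(motion: Motion) -> float:
    """Assumed reward for moving the gown forward along the arm."""
    return 100.0 * motion[0]


candidates = [
    (0.02, 0.0, 0.0),   # straight along the arm
    (0.02, 0.03, 0.0),  # along the arm while drifting sideways
    (0.0, 0.0, 0.02),   # lifting without advancing
]
best = select_motion(candidates, toy_predict_force, toy_progress)
print(best)  # (0.02, 0.0, 0.0)
```

In this toy setup the straight motion wins because it advances the gown without any predicted off-axis force. A real system would replace the toy estimator with a model trained on simulated dressing examples.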

“When I go, and I help someone, I can draw on experience, those years of dressing myself. I have the sense of what it’s like, what to do, what not to do, but robots haven’t had the benefit of that experience,” said Charlie Kemp, Associate Professor of Biomedical Engineering at Georgia Tech. “So through the simulation, the robot can get some of that same type of experience because it’s not only simulating the cloth, it’s simulating the person, and it has this window of what the person is feeling.”

The robot currently puts the gown on one arm, a process that takes about 10 seconds. The team says fully dressing a person is still many steps away from this work.

