A deep learning startup working to automate the creation of digital effects in the motion picture and television industry recently announced they raised over $10 million in funding.

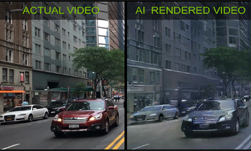

Arraiy, a Palo Alto, California-based startup, is building an AI system that can generate and manipulate images in real time, for example by changing the type of car in a scene, its color, or the lighting.

Arraiy’s real-time tracking solution was used by The Mill to showcase the creative opportunities of “virtual production.”

“High-end film production is incredibly complex, time-consuming and expensive to create,” the startup said in a press release. “We are building an AI-based production workflow to simplify the production process and enable content creators to deliver top-tier visuals for significantly lower costs.”

Using NVIDIA Tesla V100 GPUs on the Lambda Labs Deep Learning Cloud and the cuDNN-accelerated PyTorch deep learning framework, the startup trained their neural networks on a decade’s worth of rotoscoping and other visual effects.

Gary Bradski, Co-founder and Chief Technology Officer at Arraiy, told NVIDIA they also use GPUs for inference and some rendering. Once trained, the deep learning algorithm can rotoscope images without the help of a green screen, Bradski said.

“Machine learning is the next industrial revolution, vision and deep nets are really at a point of maturity, with the opportunity to provide a 10x on productivity. We’re solving the inverse graphics problem in the context of storytelling, and it’s just plain fun,” Bradski said.

Beyond film, the company says their technology will also help creators in gaming, virtual reality, and sports broadcasting.

