Think of it as predictive text, but for your piano. A new deep learning-based system developed by Google researchers lets anyone play the piano like a trained musician. The system, dubbed Piano Genie, automatically predicts the next most probable note in a song, enabling a non-musician to compose new, original music in real time.

“While most people have an innate sense of and appreciation for music, comparatively few are able to participate meaningfully in its creation,” the researchers stated in their paper.

Using NVIDIA Tesla P100 GPUs and the cuDNN-accelerated TensorFlow deep learning framework, the team trained a recurrent neural network on 1,400 classical music performances by skilled pianists.
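The article does not describe how the recurrent network scores candidate notes. As a far simpler stand-in for the idea (explicitly not Piano Genie's model), a count-based bigram predictor, the same trick behind basic predictive text, shows what "predicting the next most probable note" means. Notes are assumed to be MIDI-style integer pitches, and the function names are hypothetical.

```python
from collections import Counter, defaultdict
from typing import Dict, Optional, Sequence


def train_bigram(performances: Sequence[Sequence[int]]) -> Dict[int, Counter]:
    """Count how often each note is followed by each other note.

    Each performance is a list of integer pitches (MIDI numbers assumed).
    """
    counts: Dict[int, Counter] = defaultdict(Counter)
    for notes in performances:
        # zip pairs every note with the note that immediately follows it
        for prev, nxt in zip(notes, notes[1:]):
            counts[prev][nxt] += 1
    return dict(counts)  # plain dict so lookups of unseen notes do not create entries


def most_probable_next(counts: Dict[int, Counter], prev: int) -> Optional[int]:
    """Return the note most often seen after `prev`, or None if `prev` was never seen."""
    followers = counts.get(prev)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


if __name__ == "__main__":
    # Two tiny hand-written "performances" (C4=60, D4=62, E4=64)
    model = train_bigram([[60, 62, 64, 62, 60], [60, 62, 60]])
    print(most_probable_next(model, 62))  # 60 follows 62 twice, 64 only once
```

A trained recurrent network conditions on much longer context than a single previous note, which is why it can produce more musical continuations than a lookup table like this.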

“A non-musician could operate a system which automatically generates complete songs at the push of a button, but this would remove any sense of ownership over the result. We seek to sidestep these obstacles by designing an intelligent interface which takes high-level specifications provided by a human and maps them to plausible musical performances,” the researchers explained in their paper.

The paper's lead author, Chris Donahue, said he was inspired by Guitar Hero, a game that simplifies playing a musical instrument. Donahue and his team built a custom controller that condenses a piano's 88 keys into eight buttons.

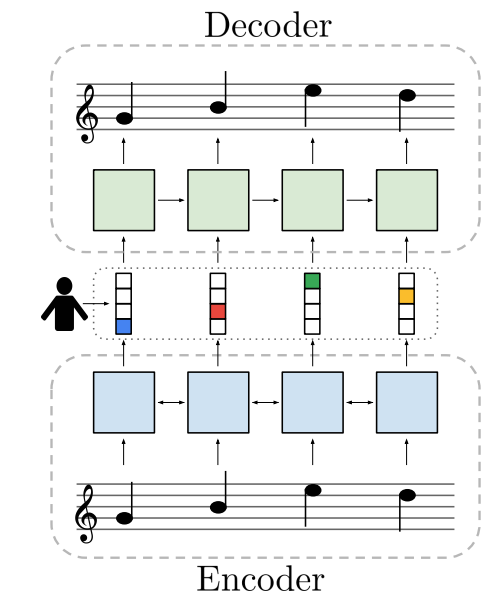

The team chose an unsupervised strategy for learning the mapping between piano notes and buttons. Specifically, they used an autoencoder setup in which the encoder learns to map sequences of notes on the piano's 88 keys to sequences of presses on the 8 buttons, and the decoder learns to map those button sequences back to piano music.
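The article does not say how the encoder's output is limited to exactly eight buttons. One simple approach, assumed here, is to have the encoder emit a real number per note in [-1, 1] and snap it to one of eight evenly spaced levels, which is what the decoder then receives. The sketch shows only this bottleneck; `toy_encode` is a hypothetical, hand-written stand-in for the learned encoder (it simply maps low keys to low buttons), not the network from the paper.

```python
from typing import List, Sequence

NUM_KEYS = 88    # keys on a standard piano
NUM_BUTTONS = 8  # buttons on the controller described in the article


def quantize_to_button(z: float, num_buttons: int = NUM_BUTTONS) -> int:
    """Snap a continuous value z in [-1, 1] to a button index 0..num_buttons-1."""
    z = max(-1.0, min(1.0, z))  # clip out-of-range values
    # Rescale [-1, 1] onto [0, num_buttons - 1], then round to the nearest level
    return round((z + 1.0) / 2.0 * (num_buttons - 1))


def button_to_level(button: int, num_buttons: int = NUM_BUTTONS) -> float:
    """Inverse mapping: the value in [-1, 1] that a given button stands for."""
    return -1.0 + 2.0 * button / (num_buttons - 1)


def toy_encode(keys: Sequence[int]) -> List[float]:
    """Hypothetical stand-in for the learned encoder: key 0 -> -1.0, key 87 -> 1.0."""
    return [-1.0 + 2.0 * k / (NUM_KEYS - 1) for k in keys]


def keys_to_buttons(keys: Sequence[int]) -> List[int]:
    """Compress an 88-key sequence into an 8-button sequence through the bottleneck."""
    return [quantize_to_button(z) for z in toy_encode(keys)]


if __name__ == "__main__":
    # Lowest key, middle C (0-based index 39 on an 88-key piano), highest key
    print(keys_to_buttons([0, 39, 87]))  # -> [0, 3, 7]
```

In a learned system the encoder would decide which button each note maps to based on musical context, rather than using a fixed pitch-to-button rule like `toy_encode`.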

“The system is trained end-to-end to minimize reconstruction error. At performance time, we replace the encoder’s output with a user’s button presses, evaluating the decoder in real time,” the researchers said.

“We believe that the autoencoder framework is a promising approach for learning mappings between complex interfaces and simpler ones, and hope that this work encourages future investigation of this space,” Donahue and his team added.
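The swap the researchers describe, where the same decoder runs on encoder output during training and on live button presses during performance, can be sketched with a stand-in decoder. Here `toy_decode_step` is a hypothetical, hand-written rule (each button owns a band of 11 neighboring keys, and the decoder picks the key in that band closest to the previous note), not the trained recurrent decoder. `reconstruction_error` is a simple mismatch rate used only as a proxy; the article does not detail the paper's actual training objective.

```python
from typing import Iterable, Iterator, Sequence

NUM_KEYS = 88
NUM_BUTTONS = 8
BAND = NUM_KEYS // NUM_BUTTONS  # 11 keys per button in this toy layout


def toy_decode_step(prev_key: int, button: int) -> int:
    """Hypothetical decoder: pick the key in the button's band closest to prev_key."""
    low = button * BAND
    high = low + BAND - 1
    # Clamping the previous key into [low, high] gives the closest key in the band
    return min(max(prev_key, low), high)


def perform(buttons: Iterable[int], start_key: int = 39) -> Iterator[int]:
    """Run the decoder one step per button, carrying the previous note forward."""
    prev = start_key
    for button in buttons:
        prev = toy_decode_step(prev, button)
        yield prev  # a live system would sound each note as soon as it is produced


def reconstruction_error(original: Sequence[int], decoded: Sequence[int]) -> float:
    """Fraction of positions where the decoded key differs from the original key."""
    if len(original) != len(decoded):
        raise ValueError("sequences must have the same length")
    if not original:
        return 0.0
    mismatches = sum(1 for a, b in zip(original, decoded) if a != b)
    return mismatches / len(original)


if __name__ == "__main__":
    # Training-style check: turn a melody into buttons (a crude "encoder"),
    # decode it back, and measure how much was lost.
    melody = [39, 41, 43, 50, 48]
    buttons = [k // BAND for k in melody]
    decoded = list(perform(buttons, start_key=melody[0]))
    print(buttons, decoded, reconstruction_error(melody, decoded))
    # Performance-style use: the same decoder driven directly by a user's presses
    print(list(perform([0, 7, 3], start_key=40)))  # -> [10, 77, 43]
```

The hand-written rule reconstructs the melody poorly (4 of 5 notes differ), which illustrates why the real decoder is learned: end-to-end training pushes it to recover the original notes from the button sequence as closely as possible.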

A paper describing the method was recently published on arXiv, and the training code is publicly available on GitHub.

People can also try out the system via an online demo.

AI-Powered Piano Allows Anyone to Compose Music by Pressing a Few Buttons

Oct 17, 2018
