There are nearly 7,000 languages worldwide and around half are considered endangered. That means many of them are no longer taught in schools, not used in commerce or government, and often incompatible with computer keyboards.

To help preserve audio and textual evidence of one of these languages, researchers from the Rochester Institute of Technology developed a deep learning-based automatic speech recognition system to preserve the language of the Seneca Indian Nation.

“The motivation for this is personal. The first step in the preservation and revitalization of our language is documentation of it,” said Robert Jimerson (Seneca), a computing and information sciences doctoral student at the Rochester Institute of Technology and member of the research team.

Seneca is spoken fluently by fewer than 50 people. To help preserve it, Jimerson brought tribal elders and close friends together, all native speakers of Seneca, to record audio and textual documentation of this Native American language.

“No one has really tried this before, training an automated speech recognition model on something as resource-constrained as Seneca,”said Ray Ptucha, assistant professor of computer engineering at the Rochester Institute of Technology.

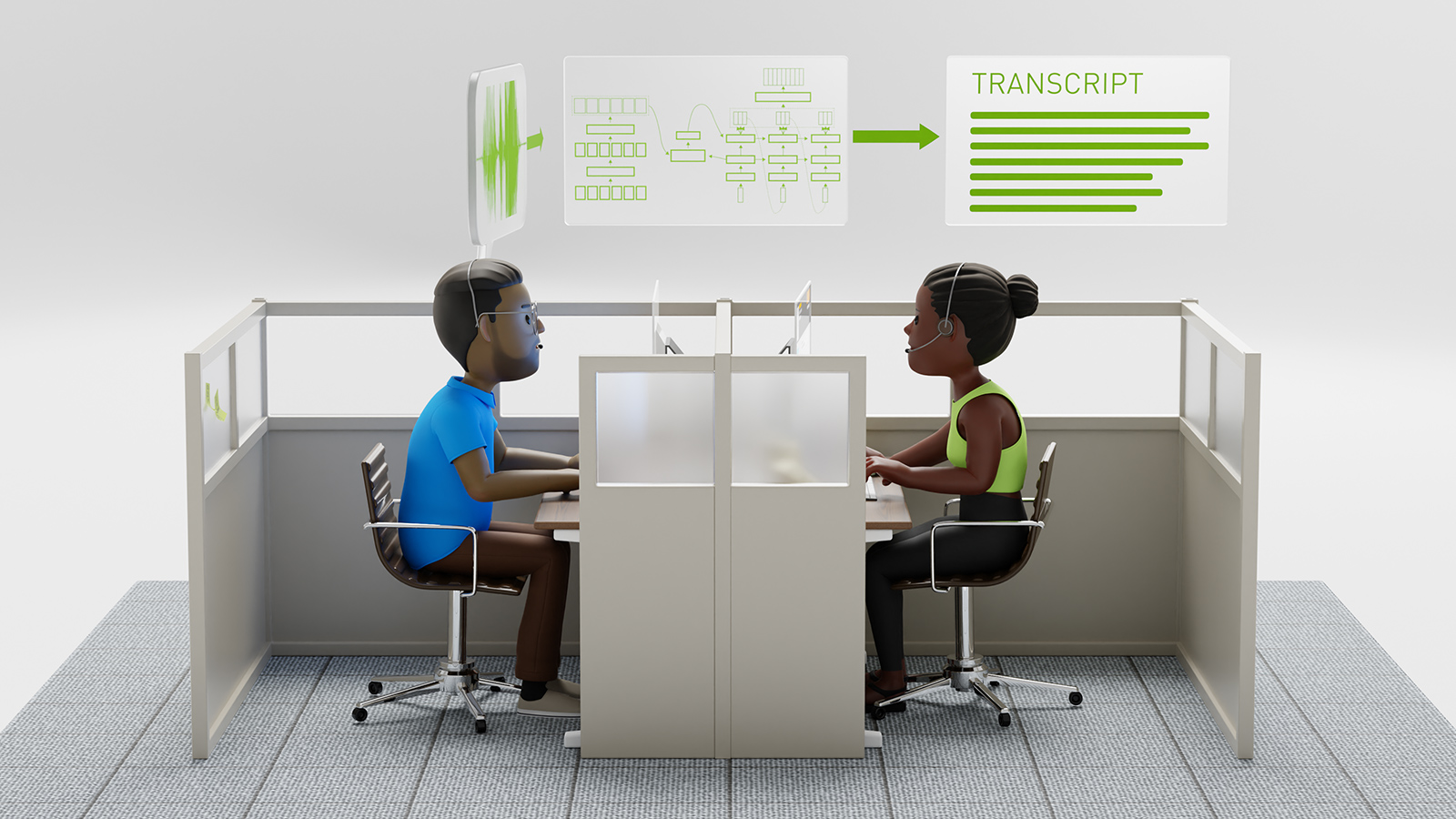

The team first used a prebuilt deep neural network (DNN) acoustic model trained on large quantities of English data and adapted that model to Seneca via transfer learning.

Using NVIDIA Tesla P100 GPUs and the cuDNN-accelerated TensorFlow deep learning framework, Jimerson and his colleagues trained the network on 155 minutes of audio, which included 13,000 words recorded and transcribed by several adult first-language Seneca speakers.

The team then created new synthetic training data by using three different augmentation techniques, which included noise addition, pitch augmentation, and speed augmentation.

“This is an exciting project because it brings together people from so many disciplines and backgrounds, from engineering and computer science to linguistics and language pedagogy,” said Emily Prud’hommeaux, assistant professor of computer science at Boston College and research faculty in RIT’s College of Liberal Arts.

Right now the team is focused on reducing the word error rate, which they say is due to the small training dataset. The synthetic data they developed reduces the word error rate but the model still needs some work, the team said.

“As the size of our Seneca training corpus increases in the course of our current language documentation project, we anticipate that the performance gap between the approaches will decrease,” the team stated in their paper.

Read more>

AI Helps Preserve the Endangered Seneca Language

Oct 18, 2018

Discuss (0)

Related resources

- GTC session: Generative AI Demystified

- GTC session: Human-Like AI Voices: Exploring the Evolution of Voice Technology

- GTC session: The Future of AI Chatbots: How Retrieval-Augmented Generation is Changing the Game

- Webinar: What AI Teams Need to Know About Generative AI

- Webinar: Harness the Power of Cloud-Ready AI Inference Solutions and Experience a Step-By-Step Demo of LLM Inference Deployment in the Cloud

- Webinar: How Telcos Transform Customer Experiences with Conversational AI