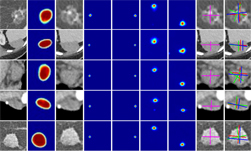

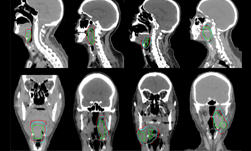

According to the Centers for Disease Control and Prevention, lung cancer is the leading cause of cancer death in the United States, accounting for 27% of those deaths. To treat patients with lung cancer, doctors often use radiation therapy. However, respiratory motion makes the tumor's location uncertain, which complicates radiation therapy techniques.

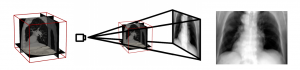

To improve the process, researchers at the University of Utah and the University of Maryland developed a deep learning-based technique that estimates organ movement and deformation during breathing in real time, so that radiation can be delivered as close as possible to the tumor.

This new method could help physicians minimize the undesired side effects of radiation to surrounding healthy tissue and vital organs.

“The patient-specific respiratory motion patterns can be identified through our framework in real-time,” the researchers said in their research paper. “With deep learning the combination of these motion patterns observed in real-time radiographs can be recovered to determine the shift in target position from the targeted baseline position. This method eliminates the need for invasive fiducial markers while still producing targeted radiation delivery during variable respiration patterns,” the researchers added.
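To make the idea in the quote concrete, here is a minimal sketch of how a shift from a baseline position could be recovered as a weighted combination of motion patterns. It is an illustration only, not the researchers' implementation: the function `target_shift`, the two motion modes in `MODES`, and the weights are all hypothetical, and the weights simply stand in for whatever a trained network would estimate from a single radiograph.

```python
from typing import Sequence

Vector = tuple[float, float, float]


def target_shift(weights: Sequence[float], modes: Sequence[Vector]) -> Vector:
    """Return the displacement from baseline as a weighted sum of motion modes."""
    if len(weights) != len(modes):
        raise ValueError("need exactly one weight per motion mode")
    # Sum the contribution of every mode along each of the three axes.
    return tuple(
        sum(w * mode[axis] for w, mode in zip(weights, modes))
        for axis in range(3)
    )


# Hypothetical patient-specific motion modes, in millimetres (x, y, z).
MODES = [(0.0, 1.0, 8.0), (1.0, 0.0, 2.0)]

# Hypothetical weights, standing in for a network's output for one radiograph.
shift = target_shift([0.5, -0.25], MODES)
print(shift)
```

In this toy setup the target position would be the baseline position plus `shift`; how the real framework represents motion patterns and deformation is described in the researchers' paper, not here.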

Using an NVIDIA Tesla V100 GPU and the cuDNN-accelerated PyTorch deep learning framework, the team trained their network on a dataset of 40,401 images that they generated to simulate the full-exhale process.

For inference, the researchers used an NVIDIA TITAN V GPU to classify over 1,000 images per second, which is faster than real time.
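As a rough way to reason about "faster than real time", the sketch below measures throughput for any inference callable and compares it with an imaging frame rate. The helper names (`images_per_second`, `per_image_ms`, `keeps_up`) and the 30 frames-per-second imaging rate are assumptions made for illustration; the article does not state the frame rate of the radiographs.

```python
import time
from typing import Callable, Sequence


def images_per_second(infer: Callable[[object], object],
                      images: Sequence[object]) -> float:
    """Time one pass over `images` and return the measured throughput."""
    start = time.perf_counter()
    for image in images:
        infer(image)
    elapsed = time.perf_counter() - start
    # Guard against a zero reading on very coarse timers.
    return len(images) / elapsed if elapsed > 0 else float("inf")


def per_image_ms(throughput: float) -> float:
    """Average time spent per image, in milliseconds."""
    return 1000.0 / throughput


def keeps_up(throughput: float, frame_rate: float) -> bool:
    """True when images are processed faster than they arrive."""
    return throughput > frame_rate


ASSUMED_FRAME_RATE = 30.0     # hypothetical imaging rate, frames per second
REPORTED_THROUGHPUT = 1000.0  # images per second, as reported in the article

print(per_image_ms(REPORTED_THROUGHPUT))                  # average ms per image
print(keeps_up(REPORTED_THROUGHPUT, ASSUMED_FRAME_RATE))  # faster than assumed rate?
```

At 1,000 images per second each image takes about 1 ms on average, well inside the roughly 33 ms between frames at the assumed rate. When timing a real GPU model in PyTorch, CUDA kernels run asynchronously, so calling `torch.cuda.synchronize()` before reading the clock is needed for the measurement to be meaningful.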

“The speed and accuracy attained by this framework is suitable for inclusion in a tumor position monitoring component of a conformal radiation therapy system,” the researchers stated.

AI Helps Physicians Deliver Precise Radiotherapy Faster Than Real Time

Apr 17, 2018
