In computer vision, creating an image of a long list of text is complicated. To help accelerate research in this field, a team from Tel Aviv University in Israel developed a deep learning-based system that can automatically generate pictures of a finished meal from a simple text-based recipe.

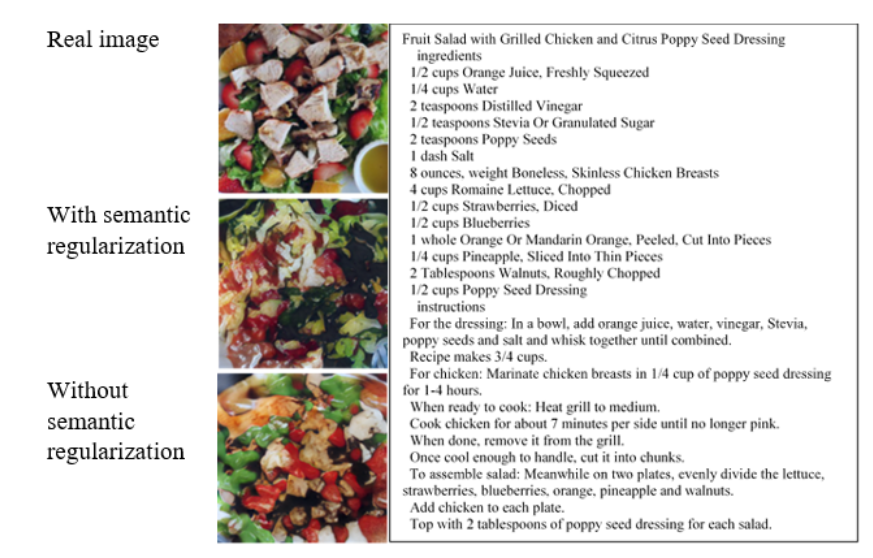

“We propose a novel task of synthesizing images from long text, that is related to the image but does not contain a visual description of it,” the researchers stated in their paper.

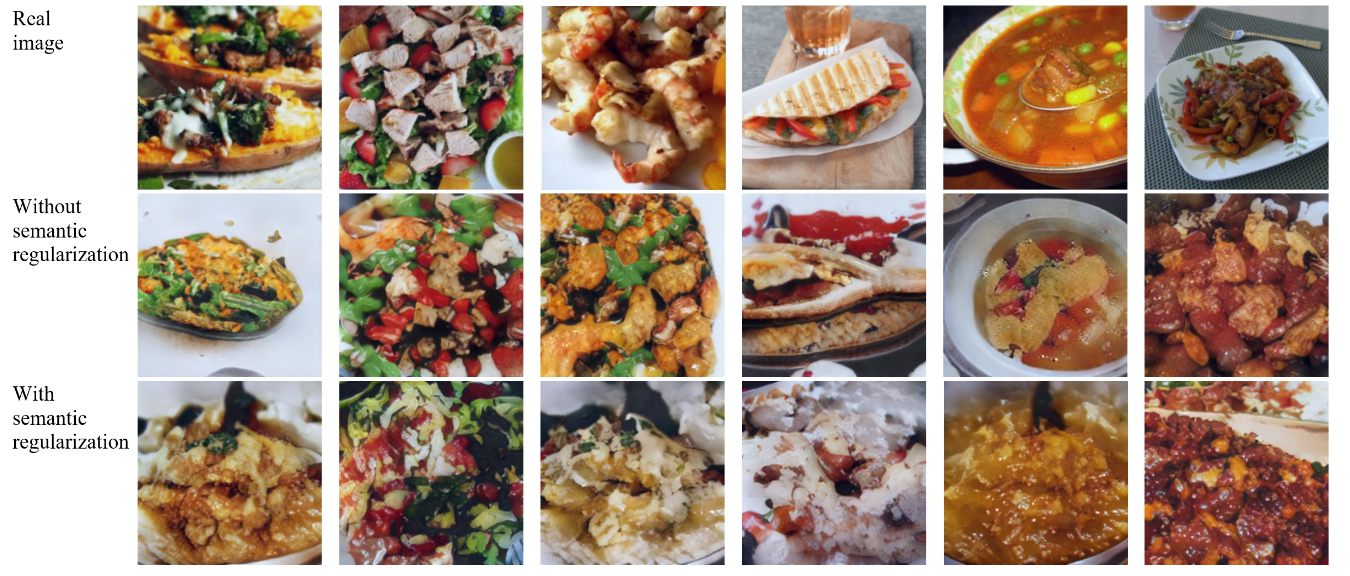

Using NVIDIA TITAN X GPUs, with the cuDNN-accelerated PyTorch deep learning framework, the team trained a conditional generative adversarial network on 52,000 written recipes and their corresponding images. Once trained, the system generated images of what the recipe might look like from a long list of text that did not describe the visual content.

“Our system takes a recipe as an input and generates, from scratch, an image that reflects the food that the system ‘believes’ this recipe describes,” said Ori Bar El, one of the co-authors of the paper. “The important aspect is that the system has no access to the title of the recipe — otherwise this task would have been pretty easy — and that the text of the recipe is both long and does not describe the visual content of the image directly. [This fact] makes this task very hard even for humans, and all the more so for computers.”

To evaluate the images of the two methods the system generated, the team enlisted the help of human critics to judge the most appealing photos on a scale of 1 to 5. It is worth mentioning that some of the real food images were given less than-or-equal rank in comparison to the generated images, the researchers said.

The system successfully generates porridge-like food images including pasta, rice, soups, and salad but struggles to generate images with a distinctive shape such as a hamburger, chicken or drinks.

The work was recently published on ArXiv.

Read more>

AI Generates Images of a Finished Meal Using Only a Written Recipe

Jan 14, 2019

Discuss (0)

Related resources

- GTC session: Generative AI Theater: The Great Prompt-Off - How Talent and AI Come Together to Unleash Imaginative Concepts

- GTC session: Tencent Text-to-Image Generative Model

- GTC session: Generative AI Theater: AI Decoded - Generative AI Spotlight Art With RTX PCs and Workstations

- SDK: MONAI Deploy App SDK

- Webinar: Isaac Developer Meetup #2 - Build AI-Powered Robots with NVIDIA Isaac Replicator and NVIDIA TAO

- Webinar: Building Generative AI Applications for Enterprise Demands