Autonomous robot navigation is a hard problem that deep learning frameworks running on powerful AI platforms are well suited to tackle. Students from National Tsing Hua University in Taiwan presented an easy-to-implement, modular robot navigation framework built on the Jetson AI platform and won second prize in the recently concluded AI at the Edge Challenge.

The five-person team, who fondly call themselves Team Do You Want to Build A Snowman, used a single camera and deep learning methods for autonomous navigation. Sim-to-real techniques let them train their deep reinforcement learning (DRL) agent quickly and efficiently in simulation before deploying it on the real robot. Most importantly, the project's key contribution is virtual guidance, a simple but effective way to communicate the navigation path to the DRL agent.
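The article does not say how virtual guidance is encoded. As a minimal sketch under stated assumptions, one way to hand a planned path to a vision-based DRL agent is to project the planner's waypoints into the camera view as a binary mask and pair it, pixel by pixel, with a semantic segmentation of the same frame. The pinhole camera model, the parameter values, the 64×48 resolution, and the function names below are all hypothetical, not the team's implementation.

```python
import math
from typing import List, Optional, Sequence, Tuple

# Hypothetical camera model (assumption, not from the article): a forward-facing
# pinhole camera with a horizontal optical axis, mounted at a fixed height.
WIDTH, HEIGHT = 64, 48   # low-resolution observation size in pixels
FOCAL_PX = 40.0          # focal length in pixels at that resolution
CAM_HEIGHT_M = 0.3       # camera height above the floor in meters

Waypoint = Tuple[float, float]  # (forward, left) offset from the robot, meters


def project_waypoint(forward: float, left: float) -> Optional[Tuple[int, int]]:
    """Project a floor point in the robot frame to (col, row) pixel coordinates."""
    if forward <= 0.0:
        return None  # behind the camera, so not visible
    u = WIDTH / 2 - FOCAL_PX * left / forward           # points to the left appear left
    v = HEIGHT / 2 + FOCAL_PX * CAM_HEIGHT_M / forward  # nearer floor points appear lower
    col, row = math.floor(u), math.floor(v)
    if 0 <= col < WIDTH and 0 <= row < HEIGHT:
        return col, row
    return None  # outside the field of view


def render_guidance(path: Sequence[Waypoint]) -> List[List[int]]:
    """Rasterize planner waypoints into a binary guidance mask (1 = on the path)."""
    mask = [[0] * WIDTH for _ in range(HEIGHT)]
    for forward, left in path:
        pixel = project_waypoint(forward, left)
        if pixel is not None:
            col, row = pixel
            mask[row][col] = 1
    return mask


def build_observation(segmentation: List[List[int]],
                      path: Sequence[Waypoint]) -> List[List[Tuple[int, int]]]:
    """Pair each pixel's semantic class id with its guidance flag."""
    guidance = render_guidance(path)
    return [[(segmentation[r][c], guidance[r][c]) for c in range(WIDTH)]
            for r in range(HEIGHT)]
```

For example, `project_waypoint(2.0, 0.5)` maps a floor point 2 m ahead and 0.5 m to the left to pixel `(22, 30)`. One appeal of a rendered representation like this is that the path lives in the same image space as the agent's other inputs, so the planner can change without changing the observation format.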

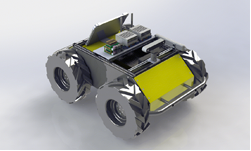

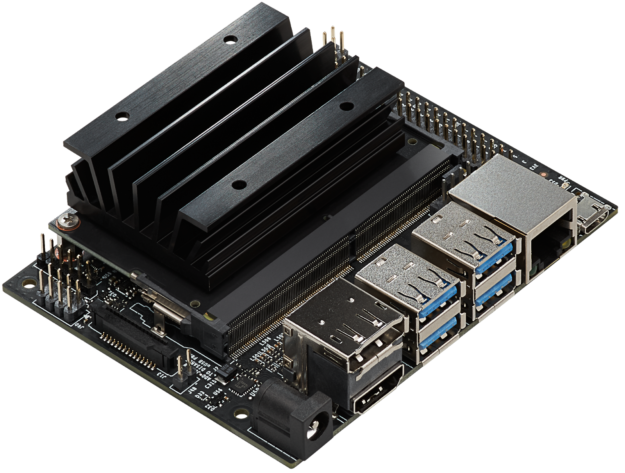

Their framework consists of four modules: perception, localization, planner, and control policy. The four modules and the peripheral components (for example, the RGB camera and the automated guided vehicle, or AGV) communicate through the Robot Operating System (ROS). The team implemented the entire framework on an embedded cluster of two NVIDIA Jetson Nano Developer Kits and two NVIDIA Jetson Xavier Developer Kits. The localization module runs on a Jetson Xavier kit because of its larger memory and higher computational power. The planner module runs on a Jetson Nano kit, which also serves as the ROS node that coordinates the embedded cluster.
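To make the module layout concrete, here is a minimal Python sketch of how the four modules might exchange messages. It swaps ROS for a tiny in-process publish/subscribe bus; `TopicBus`, `NavigationPipeline`, the topic names, and the string messages are hypothetical. The assumptions that localization consumes camera frames and that the control policy combines the segmentation with the planned path are illustrative rather than taken from the article.

```python
from collections import defaultdict
from typing import Any, Callable, DefaultDict, List, Optional


class TopicBus:
    """In-process stand-in for ROS publish/subscribe topics (not the rospy API)."""

    def __init__(self) -> None:
        self._subscribers: DefaultDict[str, List[Callable[[Any], None]]] = defaultdict(list)

    def subscribe(self, topic: str, callback: Callable[[Any], None]) -> None:
        self._subscribers[topic].append(callback)

    def publish(self, topic: str, msg: Any) -> None:
        # Deliver synchronously, in subscription order.
        for callback in list(self._subscribers[topic]):
            callback(msg)


class NavigationPipeline:
    """Wires the four modules together; each handler is a placeholder for real inference."""

    def __init__(self, bus: TopicBus) -> None:
        self.bus = bus
        self.latest_segmentation: Optional[str] = None
        self.latest_path: Optional[str] = None
        bus.subscribe("/camera/image", self.perception)
        bus.subscribe("/camera/image", self.localization)
        bus.subscribe("/localization/pose", self.planner)
        bus.subscribe("/perception/segmentation", self.on_segmentation)
        bus.subscribe("/planner/path", self.on_path)

    def perception(self, image: str) -> None:
        # Placeholder for a segmentation network (device not specified in the article).
        self.bus.publish("/perception/segmentation", f"seg[{image}]")

    def localization(self, image: str) -> None:
        # Runs on a Jetson Xavier kit in the team's cluster; here it just tags the frame.
        self.bus.publish("/localization/pose", f"pose[{image}]")

    def planner(self, pose: str) -> None:
        # Runs on a Jetson Nano kit in the team's cluster; here it just tags the pose.
        self.bus.publish("/planner/path", f"path[{pose}]")

    def on_segmentation(self, segmentation: str) -> None:
        self.latest_segmentation = segmentation
        self.control_policy()

    def on_path(self, path: str) -> None:
        self.latest_path = path
        self.control_policy()

    def control_policy(self) -> None:
        # Stands in for the DRL agent: act only once both inputs have arrived.
        if self.latest_segmentation is None or self.latest_path is None:
            return
        self.bus.publish("/agv/cmd", f"cmd({self.latest_segmentation}, {self.latest_path})")
```

Publishing one frame with `bus.publish("/camera/image", "frame0")` produces the command `cmd(seg[frame0], path[pose[frame0]])` on `/agv/cmd`. In a real deployment, each handler would be its own ROS node on its assigned Jetson device, exchanging messages over ROS topics instead of an in-process bus.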

Video: Sim-to-Real: An Effective Robot Navigation Framework (demonstration)

“Using a single camera and a few edge-computing devices, we make autonomous navigation more realistic and affordable,” say the researchers. “We believe that this project opens a new avenue for future research in vision-based autonomous navigation.”

For more details including schematics and code, read the in-depth tutorial on the Hackster.io page, Sim-to-Real: Virtual Guidance for Robot Navigation.