MIT researchers developed a deep learning system that can compile a list of ingredients and suggest similar recipes by looking at a photo of food.

“In computer vision, food is mostly neglected because we don’t have the large-scale datasets needed to make predictions,” says Yusuf Aytar, an MIT postdoc who co-wrote the paper about the system with MIT Professor Antonio Torralba. “But seemingly useless photos on social media can actually provide valuable insight into health habits and dietary preferences.”

Using TITAN X GPUs and the cuDNN-accelerated Torch deep learning framework, the team trained a neural network on a database of over 1 million recipes collected from the websites All Recipes and Food.com. The network learned to find patterns and make connections between the food images and the corresponding ingredients and recipes.

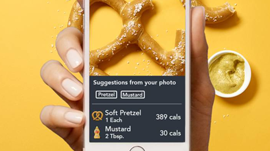

The trained system, called Pic2Recipe, could identify ingredients like flour, eggs, and butter in a photo, and then suggest several recipes that it determined to be similar to images from the database.
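To make the "suggest similar recipes" step concrete, here is a minimal sketch of one common way such retrieval can work: the photo and each recipe are represented as vectors, and recipes are ranked by cosine similarity to the photo. This is an illustration under stated assumptions, not the researchers' implementation. The function names, the recipe names, and the three-dimensional toy vectors are all hypothetical; a real system would get its vectors from a trained neural network.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def suggest_recipes(image_embedding, recipe_embeddings, top_k=2):
    """Return the names of the top_k recipes most similar to the image.

    recipe_embeddings maps a recipe name to its vector. Both the image
    vector and the recipe vectors are assumed to come from a model that
    places matching images and recipes close together.
    """
    scored = [
        (cosine_similarity(image_embedding, vector), name)
        for name, vector in recipe_embeddings.items()
    ]
    scored.sort(reverse=True)  # highest similarity first
    return [name for _, name in scored[:top_k]]


# Hypothetical toy data, made up for illustration only.
recipes = {
    "sugar cookies": [0.9, 0.1, 0.0],
    "pancakes": [0.5, 0.5, 0.1],
    "garden salad": [0.0, 0.2, 0.9],
}
photo = [0.8, 0.2, 0.05]

suggestions = suggest_recipes(photo, recipes)
print(suggestions)
```

With these toy vectors, the two baked dishes rank above the salad because their vectors point in nearly the same direction as the photo's vector.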

“This could potentially help people figure out what’s in their food when they don’t have explicit nutritional information,” says MIT CSAIL graduate student and lead author Nick Hynes. “For example, if you know what ingredients went into a dish but not the amount, you can take a photo, enter the ingredients, and run the model to find a similar recipe with known quantities, and then use that information to approximate your own meal.”

AI App Suggests Recipes Based on Food Pictures

Jul 21, 2017
